TL;DR

- AI agents in production have outpaced the governance, identity, and rollback frameworks designed to manage them — and the cracks are showing across every enterprise vertical.

- Only 23% of organizations have a formal, enterprise-wide strategy for agent identity management. The rest are improvising at machine speed.

- Enterprise identity infrastructure wasn’t built for non-human actors. NIST launched an AI Agent Standards Initiative in February 2026 to address this directly.

- Cohesity, ServiceNow, and Datadog have teamed up on a recovery service for agent-caused damage, and an estimated 30% of autonomous agent runs hit exceptions that require recovery.

- Microsoft’s Agent 365 and E7 tier, generally available May 1, 2026, signal that agent governance is now a product category, not a feature.

AI agents in production have scaled faster than the governance frameworks designed to manage them. According to G2’s August 2025 survey, 57% of companies already have AI agents in production, 22% are in pilot, and 21% are in pre-pilot — and other identity-focused reports put the number significantly higher. The five critical failure points hitting production teams right now are governance gaps, identity management for non-human actors, missing rollback strategies, non-deterministic debugging, and weak observability. This article breaks down each failure in detail and provides a six-step framework to fix them before they compound.

The shift isn’t gradual. According to Gartner, 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025. Gartner’s best-case projection is that agentic AI could drive approximately 30% of enterprise application software revenue by 2035, surpassing $450 billion, up from 2% in 2025. That’s not a technology trend. That’s a structural transformation of enterprise software, and it’s happening while most organizations are still improvising their security and governance posture.

Eighty percent of enterprises report that their AI agent investments already deliver measurable economic returns. But returns without controls create a false sense of security. The enterprises that will win aren’t the ones deploying the most agents. They’re the ones that can deploy agents *and* recover when those agents break something at 3 a.m.

What Does “AI Agents in Production” Actually Mean in 2026?

An AI agent in production is an autonomous system operating against live enterprise data, making real decisions, and triggering real actions — not a chatbot in a sandbox answering test queries. The distinction matters because production agents carry production consequences.

The Shift from AI Assistants to Autonomous Agents

Gartner outlines five stages in the evolution of enterprise AI. In 2025, nearly every enterprise application included some form of AI assistant — systems that simplify tasks but still depend on human input. By 2026, 40 percent of enterprise applications will integrate agents that act independently, automating development, managing incidents, or resolving support cases.

Agentic AI refers to intelligent systems capable of autonomously pursuing objectives rather than simply generating outputs — an AI agent interprets goals, plans actions, uses tools or APIs, and adapts behavior based on outcomes. For a deeper breakdown of what agentic AI actually is and how it’s revolutionizing business operations, we’ve covered that extensively. This article focuses on what happens after you deploy these systems — and what’s going wrong.

The key distinction: assistants wait for prompts, while agents pursue objectives. When your AI goes from “suggest a response” to “send the response, update the CRM, and escalate the edge case to a human,” that’s an agent in production. Modern AI agents are expected to operate across real enterprise systems: CRMs, ticketing tools, internal APIs, and data platforms. As a result, the hardest part of deploying agentic workflows today is not intelligence but secure and reliable access to production systems.

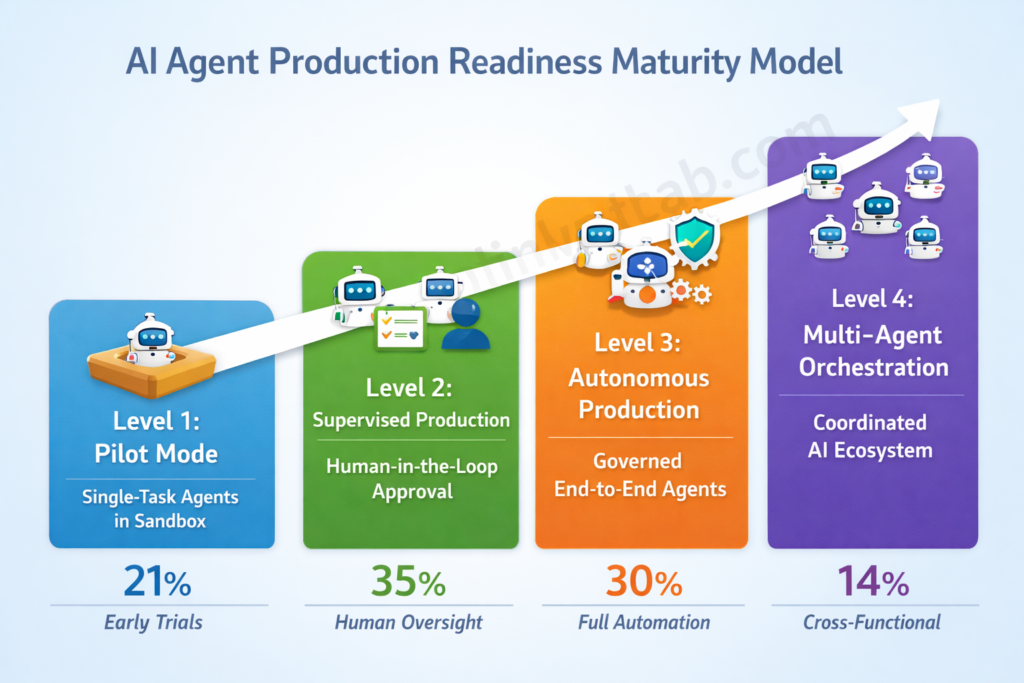

The Production Readiness Spectrum: 4 Levels of Agent Maturity

Not every enterprise running AI agents in production is running them the same way. Based on a synthesis of data from G2, Deloitte, and Gartner, here’s a maturity model that maps where organizations actually sit:

| Level | Name | Description | Approximate % of Enterprises |
|---|---|---|---|
| Level 1 | Pilot Mode | Single-task agents in sandbox environments | ~21% |
| Level 2 | Supervised Production | Agents in production with human-in-the-loop approval | ~35% |
| Level 3 | Autonomous Production | End-to-end agents with governance guardrails | ~30% |
| Level 4 | Multi-Agent Orchestration | Coordinated agent ecosystems across departments | ~14% |

57% of organizations already deploy multi-step agent workflows, while 16% have progressed to cross-functional AI agents spanning multiple teams. But even among enterprises at Level 3 or 4, most remain early in capability and control. Agentic AI usage is poised to rise sharply in the next two years, but oversight is lagging: only one in five companies has a mature model for governance.

Key takeaway: Most enterprises are somewhere between Level 2 and Level 3 — agents are live and doing real work, but governance, identity, and recovery infrastructure haven’t caught up.

5 Critical Things Breaking With AI Agents in Production (And Why)

1. Governance Frameworks Can’t Keep Up With Machine-Speed Decisions

Here’s the core problem: your governance model was built for humans who make decisions over hours or days. AI agents make decisions in milliseconds. The policies, approval workflows, and audit mechanisms designed for human-speed operations simply don’t function when an agent can execute hundreds of actions before anyone notices something went wrong. AI agents are no longer a future concept; they are a present-day reality integrated into core enterprise workflows. While offering unprecedented speed and efficiency, this new digital workforce introduces a unique and escalating class of security risks. For security leaders, the challenge is not whether to adopt AI but how to govern it, as ungoverned agents can quickly become a significant liability.

Only 23% of organizations have a formal, enterprise-wide strategy for agent identity management. Another 37% rely on informal practices — essentially figuring it out as incidents arise. The remaining 40%? They’re flying blind.

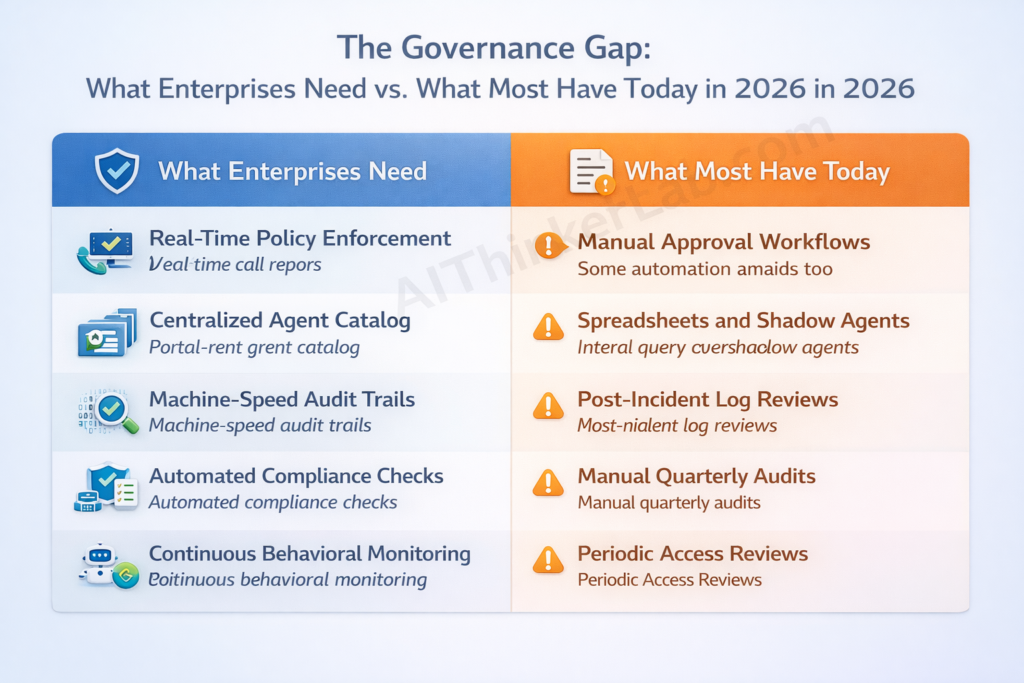

The Governance Maturity Gap

| What Enterprises Need | What Most Have Today |
|---|---|
| Real-time policy enforcement | Manual approval workflows |
| Centralized agent catalog | Spreadsheets and shadow agents |
| Machine-speed audit trails | Post-incident log reviews |
| Automated compliance checks | Manual quarterly audits |
| Continuous behavioral monitoring | Periodic access reviews |

Research shows that 80% of organizations have experienced unintended actions from their AI agents, from accessing unauthorized systems to sharing sensitive data. These aren’t theoretical risks. They’re happening now.

If your organization is earlier in its AI journey and still navigating the pilot-to-production transition, start with building a structured AI adoption roadmap for SMEs before tackling agent-level governance.

How to Build an AI Agent Governance Framework

Step 1: Create a centralized AI agent catalog. Know every agent in your environment: official, shadow, and third-party. You can’t govern or secure what you can’t see, so visibility comes first. This requires automated discovery and a clear lifecycle management process.

Step 2: Implement bounded autonomy. Define explicit boundaries for what each agent can and cannot do. High-risk actions (financial transactions, data deletion, customer-facing communications) should require human approval gates.

Step 3: Enforce real-time policy gates. Static rules checked quarterly won’t cut it. Policies need to be evaluated at the point of each action, not after the fact.

Step 4: Build immutable audit trails. Every agent decision, every tool call, every data access event needs to be logged in a tamper-proof system. These patterns work best as a layered defense: just-in-time privileges minimize access, bounded autonomy limits unsupervised actions, AI firewalls reduce risk at the interaction boundary, execution sandboxing contains blast radius, and immutable reasoning traces provide the audit trail for detecting drift.
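Steps 2 and 3 can be sketched as a small policy check that runs before every action. This is a minimal illustration, not a reference implementation: `AgentPolicy`, `evaluate`, the action names, and the refund ceiling are all hypothetical, and a real deployment would load policies from a central store instead of hard-coding them.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent boundary: what it may do, and what needs a human."""
    allowed_actions: frozenset
    approval_required: frozenset
    max_amount: float = 0.0  # ceiling for unsupervised financial actions


def evaluate(policy: AgentPolicy, action: str, amount: float = 0.0) -> Decision:
    """Evaluate one proposed action at call time, before it executes."""
    if action not in policy.allowed_actions:
        return Decision.DENY
    if action in policy.approval_required or amount > policy.max_amount:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


# Example policy: replies to customers always need a human, refunds only above 50.
support_agent = AgentPolicy(
    allowed_actions=frozenset({"read_ticket", "send_reply", "issue_refund"}),
    approval_required=frozenset({"send_reply"}),
    max_amount=50.0,
)

print(evaluate(support_agent, "read_ticket"))          # Decision.ALLOW
print(evaluate(support_agent, "issue_refund", 20.0))   # Decision.ALLOW
print(evaluate(support_agent, "issue_refund", 500.0))  # Decision.NEEDS_APPROVAL
print(evaluate(support_agent, "delete_customer"))      # Decision.DENY
```

The point of the design is that the check is cheap enough to run on every tool call, so the boundary is enforced at machine speed instead of being reviewed after the fact.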

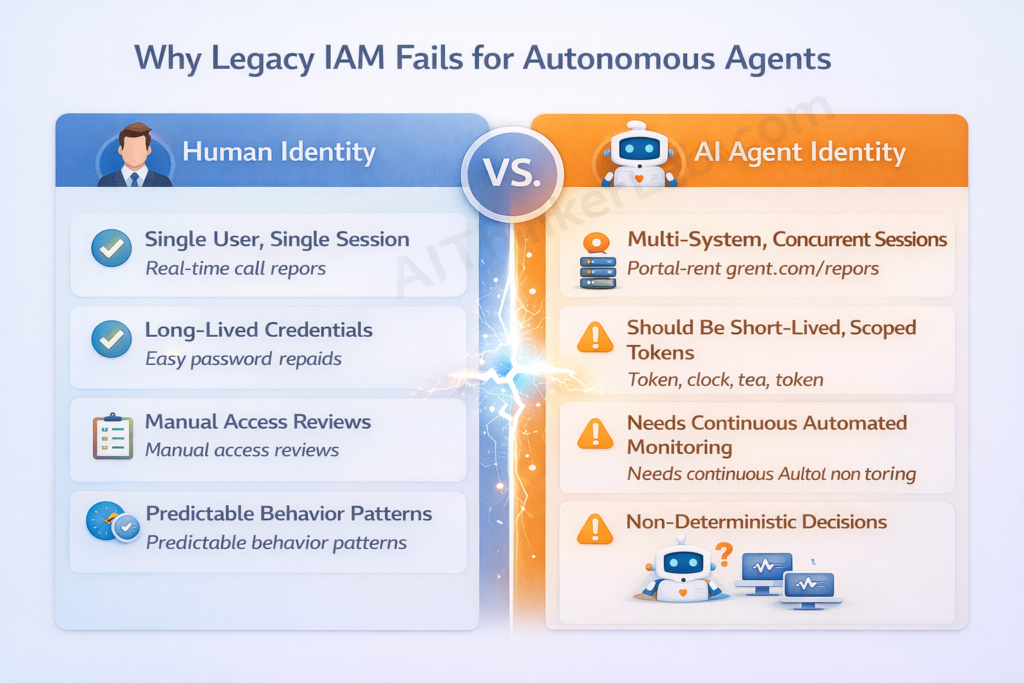

2. Enterprise Identity Was Built for Humans — Not AI Agents

This might be the most underappreciated crisis in enterprise IT right now. Every identity and access management (IAM) system in your organization was designed with an assumption: the entity requesting access is a human being with one session, predictable behavior, and long-lived credentials.

AI agents violate every one of those assumptions. AI agents represent a fundamentally different type of identity compared to their human and machine counterparts. Unlike human users, whose access needs are typically predictable and role-based, AI agents are designed to be goal-oriented and autonomous. This means they will seek out the data and systems required to complete a task, often requiring broader privileges across more applications than a typical employee. This autonomy leads to a significant governance gap.

Machine identities now outnumber human ones by more than 80 to one, driven by cloud expansion, AI deployments, and automation. And agents — the newest category — are the hardest to manage because they’re non-deterministic, frequently spawning sub-tasks with their own access requirements.

Why Legacy IAM Fails for AI Agents

| Human Identity | AI Agent Identity |
|---|---|
| Single user, single session | Multi-system, concurrent sessions |
| Long-lived credentials | Should be short-lived, scoped tokens |
| Manual access reviews | Needs continuous automated monitoring |
| Clear accountability chain | Delegated authority with shifting ownership |
| Predictable behavior patterns | Non-deterministic decisions |

In February 2026, NIST’s Center for AI Standards and Innovation (CAISI) announced the launch of the AI Agent Standards Initiative. According to NIST, the Initiative will ensure that the next generation of AI, meaning AI agents capable of autonomous actions, is widely adopted with confidence, can function securely on behalf of its users, and can interoperate smoothly across the digital ecosystem.

NIST’s National Cybersecurity Center of Excellence (NCCoE) has also released a concept paper titled “Accelerating the Adoption of Software and AI Agent Identity and Authorization.” The project is intended to explore practical, standards-based approaches for authenticating software and AI agents, defining permissions, and implementing authorization controls in enterprise environments.

This isn’t theoretical guidance. NIST’s AI Risk Management Framework, released in January 2023, was explicitly voluntary. Within 18 months, it appeared in executive orders, state AI laws, and federal procurement requirements. The Colorado AI Act references the AI RMF. The EU AI Act’s implementing guidance cites it. Federal contractors are asked to demonstrate AI RMF alignment in proposals. The AI Agent Standards Initiative will likely follow the same trajectory.

The AI Agent Identity Framework

Each AI agent should exist as its own managed identity, with a unique identifier, owner, business purpose, and risk classification — using the same create/change/retire workflows you’d expect for high-risk human users. Specifically:

- Treat every agent as a first-class managed identity with lifecycle controls.

- Implement just-in-time, scoped access tokens — no more static API keys that live forever.

- Build delegated authority chains with human sponsors who are accountable.

- Require agent registration before production deployment — no shadow agents.
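To make “just-in-time, scoped access tokens” concrete, here is a minimal sketch using only the Python standard library. The token format, `issue_token`, `authorize`, and the scope names are assumptions made for illustration. This is not JWT or any vendor’s format, and a production system would rely on an identity provider and a managed signing key.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

SIGNING_KEY = b"demo-only-key"  # assumption: in practice this lives in a secrets manager


def issue_token(agent_id: str, scopes: List[str], ttl_seconds: int,
                now: Optional[float] = None) -> str:
    """Mint a signed token that names the agent, its scopes, and an expiry."""
    issued = time.time() if now is None else now
    claims = {"sub": agent_id, "scopes": sorted(scopes), "exp": issued + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def authorize(token: str, required_scope: str, now: Optional[float] = None) -> bool:
    """Accept the call only if the token is correctly signed, unexpired, and in scope."""
    payload, _, signature = token.rpartition(".")
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    return current < claims["exp"] and required_scope in claims["scopes"]


# A five-minute token that can read the CRM and nothing else.
token = issue_token("agent:invoice-bot", ["crm:read"], ttl_seconds=300, now=1_000.0)
print(authorize(token, "crm:read", now=1_100.0))   # True
print(authorize(token, "crm:write", now=1_100.0))  # False: outside the granted scope
print(authorize(token, "crm:read", now=1_400.0))   # False: token has expired
```

Because the expiry and scopes are part of the signed payload, a leaked token is only useful for a few minutes and only for the one permission it was issued for, which is the opposite of a static API key.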

SailPoint’s new connectors for Agent Identity Security can discover and govern AI agents from platforms like Microsoft 365 Copilot and Databricks, in addition to Amazon Bedrock, Google Vertex AI, Microsoft Foundry, Salesforce Agentforce, ServiceNow AI Platform, and Snowflake Cortex AI. The tooling is catching up. The question is whether your team is implementing it.

3. No Rollback Strategy When AI Agents Make Mistakes

Cohesity, ServiceNow, and Datadog teamed up on a recoverability service that will hunt down all the files and data corrupted by bad AI actors and restore systems to a “trusted state.” The three companies believe that enterprises will use agentic AI to operate some of their systems, but they also expect that the software could botch the job or fall victim to malicious attacks. Organizations will therefore need tools to recover from errors introduced by AI. Vendors are not making that caution any easier, because they keep adding agentic automation to their products.

Data shows 30% of autonomous agent runs hit exceptions that need recovery — not just code errors, but model hallucinations, context window overflows, and API rate limits. Without a rollback strategy, these failures leave systems in a broken, partially-updated state that’s often harder to fix than the original problem.

5 AI Agent Rollback Patterns

- Atomic Transactions — All-or-nothing execution. The agent’s entire action chain either completes successfully or rolls back completely. No partial states.

- Compensating Actions — For each action the agent can take, define a corresponding “undo.” If step 4 of 6 fails, execute compensating actions for steps 1 through 3 in reverse.

- Checkpoint-Based Recovery — Take periodic state snapshots during complex workflows. When failure occurs, restore to the most recent valid checkpoint.

- Shadow Deployment — Route live traffic to both current and new agent versions, but only return the current agent’s response. The new version processes requests silently, logging its reasoning for offline comparison. You validate on real-world data without risking production.

- Dead Letter Queues — Failed tasks get routed to a review queue rather than retried or dropped. A human (or a separate agent with different permissions) evaluates and either reruns or discards them.
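Of the five patterns, compensating actions is the easiest to show in a few lines. The sketch below pairs each step with an undo and unwinds completed steps in reverse when a later step fails. `run_with_compensation`, the step names, and the in-memory `crm` dict are hypothetical stand-ins for real tool calls.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[], None], Callable[[], None]]  # (name, action, undo)


def run_with_compensation(steps: List[Step]) -> List[str]:
    """Run steps in order; on failure, undo the completed ones in reverse."""
    log: List[str] = []
    completed: List[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
        except Exception as exc:
            log.append(f"failed:{name}:{exc}")
            for done_name, _, undo in reversed(completed):
                undo()
                log.append(f"undone:{done_name}")
            return log
        completed.append(step)
        log.append(f"done:{name}")
    return log


crm = {"status": "open", "refund": 0}  # stand-in for an external system


def set_status(): crm["status"] = "resolved"
def unset_status(): crm["status"] = "open"
def add_refund(): crm["refund"] = 25
def remove_refund(): crm["refund"] = 0
def notify(): raise RuntimeError("email API rate limited")


result = run_with_compensation([
    ("update_status", set_status, unset_status),
    ("issue_refund", add_refund, remove_refund),
    ("notify_customer", notify, lambda: None),
])
print(result)
print(crm)  # back to {'status': 'open', 'refund': 0}
```

The sketch assumes every undo succeeds. In practice a failed compensation is exactly the kind of task that belongs in the dead letter queue for human review.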

Building Your AI Agent Rollback Strategy

ServiceNow and Datadog are helping Cohesity by providing control and observability platforms that monitor for anomalies. If the vendors’ tools spot a problem, Cohesity’s tools can trigger API-driven restorations across an IT estate, recovering AI agents and agent memory, vector databases, model configurations, training and fine tuning data, and enterprise data stores.

The practical steps: implement state checkpointing before every critical action. Define compensating actions for all external API calls. Build human-in-the-loop escalation paths for critical failures. Agents with rollback can cut recovery time by 80%, letting engineering teams improve logic rather than spend hours cleaning up data spills. There may be room for many players in this market, as Gartner predicts up to 40 percent of enterprise applications will include integrated task-specific agents in 2026. Rubrik introduced a similar rollback tool in August 2025, and Cisco has built native rollback capabilities into its own agentic tools. This is a market forming in real time.
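Checkpointing before a critical action can be sketched in the same spirit. This minimal example snapshots an agent’s in-memory working state and restores it if the action raises. `CheckpointedState` and `run_critical` are hypothetical names, and the sketch only covers local state: side effects in external systems still need compensating actions.

```python
import copy


class CheckpointedState:
    """Keeps snapshots of agent working state so a failed step can be rolled back."""

    def __init__(self, state: dict):
        self.state = state
        self._checkpoints = []

    def checkpoint(self, label: str) -> None:
        # Deep copy so later mutations cannot leak into the snapshot.
        self._checkpoints.append((label, copy.deepcopy(self.state)))

    def restore_latest(self) -> str:
        label, snapshot = self._checkpoints[-1]
        self.state = copy.deepcopy(snapshot)
        return label


def run_critical(store: CheckpointedState, label: str, action) -> bool:
    """Checkpoint, run the action, and restore the snapshot if it raises."""
    store.checkpoint(label)
    try:
        action(store.state)
        return True
    except Exception:
        store.restore_latest()
        return False


store = CheckpointedState({"orders": ["A1"], "balance": 100})


def bad_update(state):
    state["orders"].append("B2")  # partial write happens first
    raise TimeoutError("downstream API timed out")


ok = run_critical(store, "before_order_update", bad_update)
print(ok, store.state)  # False {'orders': ['A1'], 'balance': 100}
```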

4. Non-Determinism Makes Debugging a Nightmare

Here’s something most AI agent deployment guides don’t warn you about: you can’t reliably reproduce bugs.

A customer complains that an agent gave them wrong information. Your team tests the same input. The agent gives the correct answer. You close the ticket. But the customer was right — the agent’s behavior just changed between runs because LLMs are inherently non-deterministic.

For production systems, this is a fundamental debugging challenge. Regulated industries face it hardest because they often need audit trails showing consistent decision-making processes. When your agent handles a financial transaction differently each time it encounters the same input conditions, how do you demonstrate compliance?

The problem isn’t only in the models themselves. The AI stack keeps shifting beneath production teams as new frameworks, APIs, and orchestration layers emerge faster than organizations can standardize or validate them. 70% of regulated enterprises update their AI agent stack every three months or faster, compared to 41% of unregulated enterprises. With every rebuild, teams lose continuity in how systems behave.

Taming Non-Determinism in Production

Measure outcome distributions instead of testing once and calling it good. Tools like Promptfoo, LangSmith, and Arize Phoenix let you run evaluations across hundreds or thousands of runs. Instead of testing a prompt once, you run it 500 times and measure the distribution of outcomes, which reveals variance and makes the range of possible behaviors visible.
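The idea is independent of any particular tool. The sketch below uses a stand-in agent that returns a wrong answer about 10% of the time, runs the same prompt 500 times, and gates on the share of expected outcomes. `flaky_agent`, `outcome_distribution`, and `passes_gate` are hypothetical names, and the seeded random generator only simulates model variance; it does not call any of the tools named above.

```python
import random
from collections import Counter


def flaky_agent(prompt: str, rng: random.Random) -> str:
    """Stand-in for a real agent call; returns a wrong answer about 10% of the time."""
    return "refund_denied" if rng.random() < 0.10 else "refund_approved"


def outcome_distribution(agent, prompt: str, runs: int, seed: int = 0) -> dict:
    """Run the same prompt many times and return the share of each distinct outcome."""
    rng = random.Random(seed)
    counts = Counter(agent(prompt, rng) for _ in range(runs))
    return {outcome: n / runs for outcome, n in counts.items()}


def passes_gate(distribution: dict, expected: str, min_share: float) -> bool:
    """Quality gate: the expected outcome must appear in at least min_share of runs."""
    return distribution.get(expected, 0.0) >= min_share


dist = outcome_distribution(flaky_agent, "Can I get a refund for order 1182?", runs=500)
print(dist)
print(passes_gate(dist, "refund_approved", min_share=0.85))
```

A single test run of this agent would pass nine times out of ten and tell you nothing. The distribution is what exposes the failure rate.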

Two further practices help. Build immutable reasoning traces for every agent decision: not just inputs and outputs, but the full chain of thought. And implement canary deployments with automated quality gates so that behavioral drift gets caught before it reaches all users.
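One common way to approximate an immutable trace is a hash chain, where each entry’s hash covers the previous entry’s hash so that any later edit is detectable. The sketch below is tamper-evident, not tamper-proof: true immutability also needs write-once storage or an external anchor. `TraceLog` and its fields are hypothetical.

```python
import hashlib
import json


class TraceLog:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent_id: str, step: str, detail: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else "genesis"
        body = {"agent_id": agent_id, "step": step, "detail": detail, "prev": prev_hash}
        digest = self._digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = "genesis"
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = TraceLog()
log.append("agent:support-1", "plan", {"goal": "resolve ticket 4411"})
log.append("agent:support-1", "tool_call", {"tool": "crm.lookup", "customer": "c-92"})
print(log.verify())  # True

log.entries[0]["detail"]["goal"] = "something else"  # simulate after-the-fact editing
print(log.verify())  # False
```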

Key takeaway: Non-determinism isn’t a bug to fix — it’s a property to manage. Treat agent behavior as a distribution, not a deterministic function, and build your testing and monitoring accordingly.

5. Observability and Monitoring Are the Weakest Link

Production teams consistently report dissatisfaction with current observability and guardrail solutions. 46% of respondents cite integration with existing systems as their primary challenge. And 48% identify operational complexity from orchestrating multiple components as the top barrier in building agents. Integrating models, connectors, and runtime environments creates monitoring requirements that existing tools weren’t designed for.

The majority of enterprises plan to improve observability and evaluation in the next year, making visibility the top investment priority. But “plan to improve” and “actually improved” are separated by budget cycles, vendor evaluations, and organizational inertia.

The AI Agent Observability Stack

A production-grade observability stack for AI agents needs five layers:

- Token usage monitoring — Track consumption per agent, per task, per hour. Sudden spikes signal runaway reasoning loops or compromised agents.

- Latency tracking per agent step — Identify which tool calls, API integrations, or reasoning steps are bottlenecks.

- Reasoning trace logging — Capture the full chain of thought, not just inputs and outputs.

- Drift detection — Monitor for behavioral changes over time. An agent that starts giving different answers to similar queries is drifting, and you need to know before customers do.

- Business outcome correlation — Connect agent actions to business metrics. An agent that processes tickets faster but increases escalation rates isn’t actually helping.
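As an illustration of the first layer, the sketch below flags an hourly token count that sits far above an agent’s recent baseline. The z-score rule, the threshold of 3, and the sample numbers are assumptions chosen for the example; real traffic is often seasonal and may need a more robust baseline.

```python
from statistics import mean, stdev


def is_token_spike(history: list, latest: int, threshold: float = 3.0) -> bool:
    """Flag the latest hourly token count if it sits far above the recent baseline."""
    if len(history) < 2:
        return False  # not enough data to estimate a baseline
    baseline, spread = mean(history), stdev(history)
    if spread == 0:
        return latest > baseline
    return (latest - baseline) / spread > threshold


# Hypothetical hourly token counts for one agent.
hourly_tokens = [41_000, 39_500, 40_200, 42_100, 40_800]
print(is_token_spike(hourly_tokens, 41_500))   # False: within normal variation
print(is_token_spike(hourly_tokens, 180_000))  # True: possible runaway loop
```

The same shape of check works for the drift layer if you replace token counts with a per-day quality or agreement score.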

The Hidden Cost Crisis: Token Economics at Scale

During pilot testing, token costs seem manageable. Production is a different universe.

Claude Sonnet 4.5 costs $3 per million input tokens and $15 per million output tokens. Extended reasoning multiplies this significantly. A customer service agent processing 10,000 queries daily might use 50 million tokens, roughly 5,000 per query. But that simplified math misses the reality of agentic systems.

Agents don’t just read and respond. They reason, plan, and iterate. A single user query triggers an internal loop: the agent reads the question, searches a knowledge base, evaluates results, formulates a response, validates against policies, checks for compliance, and potentially revises. Each loop multiplies token consumption.

| Metric | Pilot Phase | Production Reality |
|---|---|---|
| Queries/day | 100 | 10,000+ |
| Tokens per query | 2,000 | 15,000–50,000 (with reasoning loops) |
| Daily token cost (all tokens at the $3/M input rate) | ~$0.60 | $450–$1,500+ |
| Monthly cost (30 days) | ~$18 | $13,500–$45,000+ |
| Hidden multiplier | 1x | 5–10x (tool calls, retries, multi-agent handoffs) |

The 2026 trend is treating agent cost optimization as a first-class architectural concern — similar to how cloud cost optimization (FinOps) became essential during the microservices era. Organizations are building economic models into their agent design rather than retrofitting cost controls after the bill arrives. Caching strategies, prompt compression, model routing (sending simple tasks to cheaper models), and aggressive timeout policies are all becoming standard.

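Key takeaway: If your pilot cost $18/month and you’re budgeting linearly for production, plan for 7.5x to 25x that linear estimate, which is the ratio of production to pilot tokens per query (15,000–50,000 versus 2,000). Then add a buffer for the multi-agent handoffs and retry loops your pilot didn’t encounter.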
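The arithmetic is worth running yourself with your own numbers. This sketch prices every token at the $3 per million input rate quoted above, which is a simplifying assumption and a lower bound, since output tokens cost five times more. The function name and the 30-day month are also assumptions.

```python
INPUT_PRICE_PER_MILLION = 3.00  # USD, the input-token rate quoted above


def monthly_cost(queries_per_day: int, tokens_per_query: int, days: int = 30) -> float:
    """Monthly spend if every token is billed at the input rate (a lower bound)."""
    total_tokens = queries_per_day * tokens_per_query * days
    return total_tokens * INPUT_PRICE_PER_MILLION / 1_000_000


pilot = monthly_cost(100, 2_000)
linear_budget = pilot * (10_000 / 100)  # scale the pilot by query volume only
production_low = monthly_cost(10_000, 15_000)
production_high = monthly_cost(10_000, 50_000)

print(f"pilot: ${pilot:,.2f}")
print(f"linear budget: ${linear_budget:,.2f}")
print(f"production: ${production_low:,.2f} to ${production_high:,.2f}")
print(f"over linear budget: {production_low / linear_budget:.1f}x "
      f"to {production_high / linear_budget:.1f}x")
```

Scaling only by query volume misses the growth in tokens per query, and that growth is the entire gap between the linear budget and the production figures.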

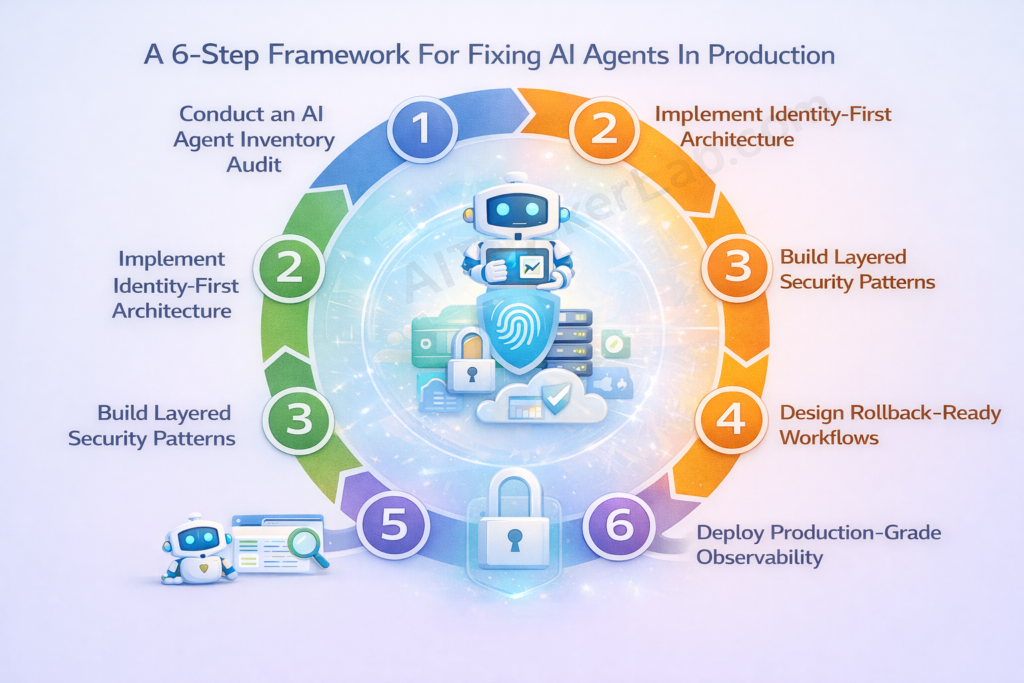

How Leading Enterprises Are Fixing AI Agents in Production: A 6-Step Framework

Step 1: Conduct an AI Agent Inventory Audit

Catalog every agent in your environment: official, shadow, and third-party. A critical and often overlooked aspect is ownership. An AI agent’s ownership can change multiple times in its first year alone, from executive sponsorship to AI development, then to cloud operations for deployment, and finally to security teams for compliance. Without a formal process to track these transitions, agents can become “orphaned,” operating without accountability or oversight. Centralized governance through a unified identity security platform addresses this.
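A catalog entry does not need to be elaborate to catch orphaned agents. The sketch below records ownership transfers instead of overwriting the owner and lists agents that currently have none. `AgentRecord`, its fields, and the team names are hypothetical; a real catalog would live in your identity platform, not in a Python list.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class AgentRecord:
    agent_id: str
    purpose: str
    risk: str                    # e.g. "low", "medium", "high"
    owner: Optional[str] = None  # accountable human or team
    history: List[tuple] = field(default_factory=list)

    def transfer(self, new_owner: Optional[str], on: date) -> None:
        """Record an ownership change instead of overwriting the old owner."""
        self.history.append((on, self.owner, new_owner))
        self.owner = new_owner


def orphaned(catalog: List[AgentRecord]) -> List[str]:
    """Agents with no current owner, which nobody is accountable for."""
    return [a.agent_id for a in catalog if not a.owner]


bot = AgentRecord("invoice-bot", "match invoices to purchase orders", "high", owner="ai-dev")
bot.transfer("cloud-ops", date(2026, 3, 1))
bot.transfer(None, date(2026, 6, 1))  # team dissolved, nobody picked it up
catalog = [bot, AgentRecord("faq-bot", "answer HR questions", "low", owner="hr-it")]
print(orphaned(catalog))  # ['invoice-bot']
```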

Step 2: Implement Identity-First Agent Architecture

Every agent gets a managed identity with lifecycle controls. Enforce least-privilege, short-lived tokens. No static API keys. No shared credentials. SailPoint is “moving toward a new, AI-powered adaptive approach to provide continuous visibility and real-time governance for all identity types, including AI identities, machines, agents, and credentials. This year, we aim to help our customers move to least privilege or zero standing privilege.”

Step 3: Build Layered Security Patterns

Don’t rely on a single control. Layer just-in-time privileges, bounded autonomy, AI firewalls, execution sandboxing, and immutable audit traces. Each layer addresses a different failure mode.

Step 4: Design Rollback-Ready Workflows

Checkpoint before every critical action. Define compensating actions for all external calls. Budget for the rollback infrastructure now — it’s cheaper than the incident response later.

Step 5: Deploy Production-Grade Observability

Token monitoring, latency tracking, drift detection, reasoning traces, and business outcome correlation. If you can’t measure it, you can’t manage it. And you definitely can’t debug it at 3 a.m.

Step 6: Establish Continuous Governance Loops

Automated compliance checks. Regular agent access reviews. Behavioral audits that compare current agent behavior against baselines. Governance isn’t a one-time setup — it’s a continuous operational function.

AI Agents in Production: Architecture Approaches Compared

The quality of a production agent depends more on how well its components are integrated than on the intelligence of the underlying LLM. A mediocre model with excellent tooling outperforms a brilliant model with poor orchestration. Here’s how the major approaches compare:

| Approach | Best For | Key Strength | Key Weakness |
|---|---|---|---|
| Stateless Request-Response | Document analysis, classification | Simple horizontal scaling | No memory or context between calls |
| Persistent State Agents | Customer service, multi-step workflows | Conversation continuity | State management complexity at scale |
| Multi-Agent Orchestration | Complex cross-functional workflows | Specialized agent collaboration | Debugging nightmare, cost explosion |
| Human-in-the-Loop Hybrid | Regulated industries, high-stakes decisions | Compliance-friendly, auditable | Slower throughput, human bottleneck risk |

Single agents hit a ceiling. Complex workflows require coordination. The shift toward multi-agent systems, where specialized agents collaborate on broader tasks, is accelerating. But multi-agent systems also multiply every problem discussed above — governance, identity, observability, and cost all scale non-linearly with agent count.

Before scaling your agent fleet, make sure you understand how enterprises are actually making money with agentic AI in 2026 — because production without ROI clarity is how 40% of agentic projects end up canceled.

What’s Coming Next: The Emerging AI Agent Infrastructure Stack for 2026

NIST’s AI Agent Standards Initiative

In January and February 2026, NIST, through its Center for AI Standards and Innovation (CAISI), launched a new AI Agent Standards Initiative to support the development of interoperable and secure AI agent systems, issued a Request for Information (RFI) on securing AI agent systems, and announced a series of virtual listening sessions. NIST conducts fundamental research into agent authentication and identity infrastructure to enable secure human-agent and multi-agent interactions. The initiative underscores NIST’s growing focus on identity governance as a foundational control for autonomous systems.

What NIST publishes as voluntary guidance in 2026 will appear in compliance frameworks, vendor questionnaires, and litigation discovery by 2027-2028 — just as the AI Risk Management Framework did. Get ahead of this now.

Microsoft’s Agent 365 and the Governance Control Plane

Microsoft said that Agent 365 will be generally available from May 1, 2026, priced at $15 per user per month. The product is a control plane, or “orchestration platform,” for AI agents that gives IT and security leaders one place to observe, govern, and secure every agent across the organization, including agents created with other vendors’ software. The E7 tier bundles the $30 Copilot, the $12 Entra identity tools, and the new $15 Agent 365. This signals that agent governance has become a standalone product category, not an afterthought bolted onto existing security tools.

The Three-Tier Agentic AI Ecosystem

A three-tier ecosystem is forming: Tier 1 hyperscalers (Microsoft, Google, AWS) providing foundational infrastructure; Tier 2 established enterprise vendors (Salesforce, ServiceNow, SailPoint) embedding agents into existing platforms; and an emerging Tier 3 of “agent-native” startups building products with agent-first architectures. This third tier is the most disruptive — these companies bypass traditional software paradigms entirely and are often the first to solve the governance, identity, and observability problems that incumbent platforms are still retrofitting.

The Bottom Line

The 95% stat grabs attention, but the real story is the gap between deployment velocity and operational maturity. Enterprises are running AI agents in production at unprecedented scale — and the governance, identity, rollback, and observability infrastructure hasn’t caught up.

This gap won’t close on its own. NIST is formalizing standards. Microsoft is shipping governance tooling. SailPoint, Cohesity, and others are building the recovery and identity layers that production environments demand. But these are vendor responses to a problem that ultimately requires organizational change — new processes, new roles, new ways of thinking about what “identity” and “access” mean when the entity acting on your systems isn’t human.

The enterprises that thrive in 2026 won’t be the ones with the most agents. They’ll be the ones that, when an agent breaks something at 3 a.m., have the infrastructure to detect, contain, and recover before anyone’s morning coffee.

Start with the six-step framework. Audit your agents. Fix your identity layer. Build your rollback strategy. The time to do this was six months ago. The second-best time is now.

Sources & References

- Pillsbury Law: NIST AI Agent Standards and Industry Input

- G2 Enterprise AI Agents Report (2025)

- Gartner: 40% of Enterprise Apps Will Feature AI Agents by 2026

- NIST AI Agent Standards Initiative (February 2026)

- NCCoE Concept Paper: Software and AI Agent Identity and Authorization

- SailPoint Adaptive Identity Framework (March 2026)

- Microsoft Agent 365 — Official Product Page

- The Register: Vendors Building Tools to Clean Up AI Agent Messes (March 2026)

- Deloitte State of AI in the Enterprise 2026

- SailPoint: Governing AI Agents in the Enterprise (February 2026)