TL;DR — Key Takeaways:

- Anthropic refused the Pentagon’s demand to allow use of its Claude AI model “for all lawful purposes” under a contract worth up to $200 million, holding firm on two red lines: no mass domestic surveillance and no fully autonomous weapons.

- The Pentagon designated Anthropic a “supply chain risk,” a classification never before publicly applied to an American company and typically reserved for companies tied to foreign adversaries, such as Huawei.

- OpenAI swooped in hours later with its own Pentagon deal for classified systems, claiming it secured the same safeguards Anthropic had fought for — but through different contractual mechanisms.

- President Trump ordered all federal agencies to stop using Anthropic’s products, with a phase-out period of up to six months for the Department of Defense and certain other agencies.

- The legal and business fallout could reshape AI governance for years, as Anthropic prepares to challenge the designation in court and Fortune 500 companies weigh whether working with Claude is worth the risk.

Quick Answer: In February 2026, Anthropic rejected the Pentagon’s demand to allow use of its Claude model “for all lawful purposes” under a contract worth up to $200 million, refusing to remove safeguards against mass surveillance and autonomous weapons. The Pentagon responded by designating Anthropic a “supply chain risk,” a first for an American company, and the clash reshaped the debate over AI ethics, military technology, and corporate responsibility.

A $380 billion AI company just told the most powerful military on earth: no.

When Anthropic rejected the Pentagon’s terms for a contract worth up to $200 million, it did more than walk away from revenue. It set off one of the most consequential standoffs between private technology and government power in modern American history. As CNN Business reported, Anthropic rejected the Pentagon’s latest offer to change the contract, saying the changes did not satisfy the company’s concerns that AI could be used for mass surveillance or in fully autonomous weapons.

This isn’t a policy white paper or a hypothetical ethics exercise. It’s a real company, with real technology embedded in the U.S. military’s most sensitive networks, drawing a line and daring the federal government to cross it. And the government did.

What follows is an analysis of how we got here, what happened during the critical week of February 24–28, 2026, who stands to gain and lose, and why this moment will shape AI governance for the next decade. We’ll cover the Pentagon’s ultimatum, Anthropic’s defiance, the OpenAI deal that followed within hours, and the unprecedented legal and business fallout that’s still unfolding.

How Anthropic Became the Pentagon’s First AI Partner in Classified Networks

To understand why this rupture matters, you need to understand how deeply Anthropic was already woven into the U.S. defense apparatus.

Anthropic’s Rise in Defense AI

Anthropic, a company founded by people who left OpenAI over safety concerns, had been the only large commercial AI maker whose models were approved for use on the Pentagon’s classified networks, through a deployment done in partnership with Palantir. That’s not a minor detail; it’s the entire context. Anthropic didn’t stumble into defense work. It built its reputation as the safety-first AI lab and then deliberately, carefully entered the classified space. Claude was the first AI model to work on the military’s classified networks, and in the summer of 2025 the company struck a contract worth up to $200 million with the Pentagon. No other major commercial AI lab, whether OpenAI, Google, or xAI, had achieved that level of access in classified settings at the time.

What the Original Contract Included

The contract incorporates Anthropic’s “acceptable use policy,” which prohibits the use of Claude for mass surveillance and autonomous weapons. These weren’t afterthoughts; they were foundational to how Anthropic structured its engagement with the military. The Department of Defense, which the administration now also calls the Department of War, has been using Claude in an increasing number of ways since first adopting it in 2024. The model became integrated into work ranging from intelligence analysis to active military operations: it was reportedly used in the operation to capture Nicolás Maduro and could conceivably be used in a potential military operation in Iran.

What is Claude AI? Claude is Anthropic’s flagship large language model (LLM), designed with a “Constitutional AI” safety framework. It became the first commercial AI system deployed on the U.S. military’s classified networks in 2024, accessed through a partnership with Palantir Technologies. Claude competes directly with OpenAI’s GPT models and Google’s Gemini.

Why the Pentagon Wanted More

The Pentagon has said it doesn’t intend to use AI in those ways, but requires AI companies to allow their models to be used “for all lawful purposes.” That phrase — “all lawful purposes” — became the central flashpoint.

The Pentagon’s position boils down to this: once the military buys a tool, it decides how to use it. It argues that there are many gray areas around what constitutes mass surveillance or autonomous weaponry, that it’s unworkable to litigate individual cases with a private company, and that the military has its own standards and procedures for deciding whether and how to use a tool. It therefore demanded that all AI firms make their models available for “all lawful purposes.”

Anthropic’s position: Sure, except for two things. And those two things happen to be among the most consequential capabilities an AI system can enable.

Key takeaway: Anthropic didn’t refuse to work with the Pentagon; it had been the Pentagon’s only AI partner on classified networks. The dispute was over two specific safeguards, not a blanket rejection of military AI use.

The Week That Changed AI-Military Relations: A Timeline of Events

The breakdown between Anthropic and the Pentagon didn’t happen overnight, but the decisive events compressed into a single brutal week.

The Pentagon’s Ultimatum

Defense Secretary Pete Hegseth told Anthropic CEO Dario Amodei on Tuesday that if Anthropic did not allow its AI model to be used “for all lawful purposes,” the Pentagon would cancel Anthropic’s contract, worth up to $200 million. He set a deadline of 5:01 p.m. Friday for Anthropic to let the Pentagon use Claude as it saw fit or face severe consequences, and those consequences went far beyond a canceled contract. Hegseth threatened to designate Anthropic a supply chain risk or, alternatively, to invoke the Defense Production Act to compel Anthropic to provide its model without any restrictions, an order that may rest on murky legal ground.

Anthropic’s Two Non-Negotiable Red Lines

Leading up to the Friday deadline, Amodei made clear that despite the Pentagon’s threats, Anthropic would not drop its two key demands: no use of its artificial intelligence for fully autonomous weapons (meaning AI, not humans, making final battlefield targeting decisions) and no mass domestic surveillance.

That’s it. Two things. Not a sweeping demand to control military operations — a specific, narrow insistence on two guardrails.

The “Final Offer” That Wasn’t

On Thursday, Anthropic reviewed what the Pentagon had called its final offer — and found it hollow. “The contract language we received overnight from the Department of War made virtually no progress on preventing Claude’s use for mass surveillance of Americans or in fully autonomous weapons,” Anthropic said. “New language framed as compromise was paired with legalese that would allow those safeguards to be disregarded at will.”

That’s the critical detail. The Pentagon didn’t refuse to include safety language — it offered language that was structurally meaningless. Compromise framing with override clauses isn’t a compromise. It’s decoration.

Dario Amodei’s Defiant Response

The Pentagon’s threats “are inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security,” Amodei said in a blog post.

He’s right, and the contradiction is telling: a government that truly considered Claude a security risk would not also be threatening to compel its use. “These threats do not change our position: we cannot in good conscience accede to their request,” Amodei said in a statement Thursday.

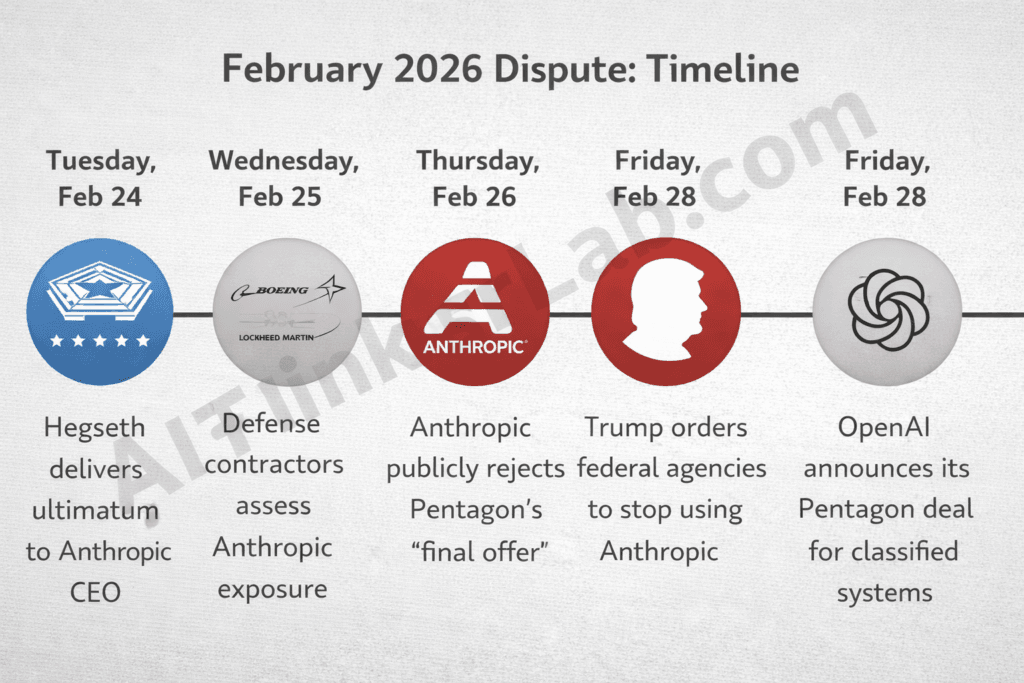

Timeline — February 24–28, 2026:

- Tuesday, Feb 24: Hegseth delivers ultimatum to Amodei — accept “all lawful purposes” or lose the contract.

- Wednesday, Feb 25: Pentagon begins asking defense contractors (Boeing, Lockheed Martin) to assess Anthropic exposure.

- Thursday, Feb 26: Anthropic reviews Pentagon’s “final offer,” rejects it publicly. Amodei publishes lengthy statement.

- Friday, Feb 27 (before 5:01 PM): Trump orders all federal agencies to stop using Anthropic.

- Friday, Feb 27 (5:01 PM): Deadline passes. Hegseth designates Anthropic a supply chain risk.

- Friday, Feb 27 (evening): OpenAI announces Pentagon deal for classified systems.

Supply Chain Risk, Government Blacklisting, and the Fallout for Anthropic

The retaliation was swift and, by multiple legal assessments, unprecedented.

The Unprecedented “Supply Chain Risk” Designation

Here’s what makes the Pentagon’s response extraordinary: according to legal and policy experts, it is the first time the U.S. has ever designated an American company a supply chain risk, and the first time the designation has been used in apparent retaliation for a business not agreeing to certain terms. Those experts said the decision raises profound questions about the relationship between government and business in the U.S.

What does a “supply chain risk” designation mean? A supply chain risk designation under 10 U.S.C. § 3252 is a federal classification that bars a company from participating in defense procurement. In Anthropic’s case, the Pentagon said it also means no contractor, supplier, or partner doing business with the military may conduct commercial activity with the designated company. This mechanism was historically reserved for companies connected to foreign adversaries, such as Huawei (China) and Kaspersky Lab (Russia), and had never before been publicly applied to an American company.

Applying a penalty normally reserved for businesses from adversarial countries to an American firm headquartered in San Francisco and founded by former OpenAI researchers is a different animal entirely.

Trump’s Executive Response

On Friday, President Trump ordered all federal agencies to “immediately” stop using Anthropic’s products, though the Defense Department and certain other agencies can continue using its AI technology for up to six months while transitioning to other services. The Pentagon moved the same day to designate the company a supply chain risk, a sharp escalation of a high-stakes fight over the military’s use of AI. Trump called Anthropic a “radical Left AI company run by people who have no idea what the real World is all about.”

The Ripple Effect on Anthropic’s Business

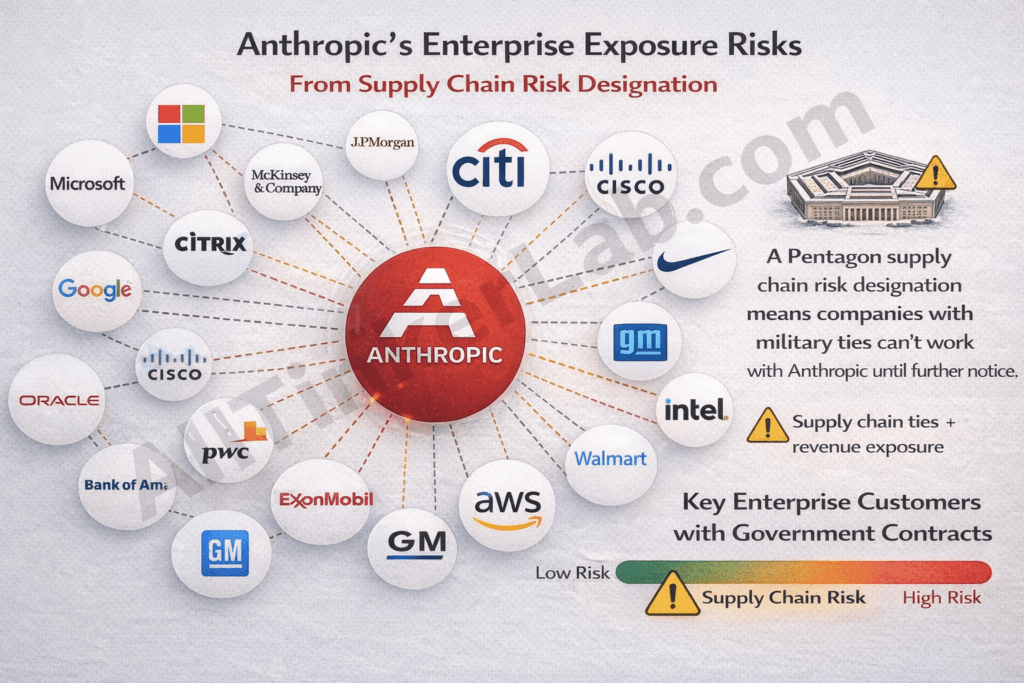

The contract itself, worth up to $200 million, isn’t an existential threat to a company valued at roughly $380 billion. The bigger risk is the supply chain risk designation, which means any company that works with the U.S. military would have to prove it doesn’t touch anything related to Anthropic in its work with the Pentagon. Much of Anthropic’s success stems from its enterprise contracts with big companies, many of which may have contracts with the Pentagon. “It will take years to resolve in court. And in the meantime, every general counsel at every Fortune 500 company with any Pentagon exposure is going to ask one question: is using Claude worth the risk?” Shenaka Anslem Perera, an independent analyst, posted on X.

That’s the real blade here. The contract cancellation is a flesh wound. The supply chain designation is designed to make Anthropic radioactive to the enterprise market.

Anthropic’s Legal Counteraction

Several legal experts have raised serious questions about the designation’s validity. Charlie Bullock, a senior research fellow at the Institute for Law & AI, told Wired that the government cannot make the designation without first completing a risk assessment and notifying Congress; it is unclear whether the government conducted such an assessment. Amos Toh, a senior counsel at the Brennan Center for Justice at New York University, was among several legal experts who said the designation requires the government to demonstrate a risk of sabotage, subversion, or manipulation of operations by an adversary. “It is not at all clear how adversaries could exploit Anthropic’s usage restrictions on Claude to sabotage military systems,” Toh told DefenseScoop.

Key takeaway: The supply chain risk designation may not survive a court challenge, but its economic damage to Anthropic could be significant before a ruling ever arrives.

OpenAI Swoops In: How Competitors Responded to Anthropic’s Stand

The timing was impossible to miss.

OpenAI’s Pentagon Deal — Same Day, Same Concerns?

Altman announced Friday that OpenAI had secured a coveted Pentagon contract, hours after the Department of War designated its arch-rival Anthropic a “supply chain risk” and stripped it of its own military contracts. The deal, announced late Friday, lets the Pentagon use OpenAI’s models in classified systems. At a company all-hands meeting, Altman told employees that the government is willing to let OpenAI build its own “safety stack”: a layered system of technical, policy, and human controls that sit between a powerful AI model and real-world use.

The OpenAI Paradox — Same Red Lines, Different Outcome

This is where the story gets genuinely strange. OpenAI CEO Altman had said earlier on Friday that he shared Anthropic’s “red lines” restricting military use of AI.

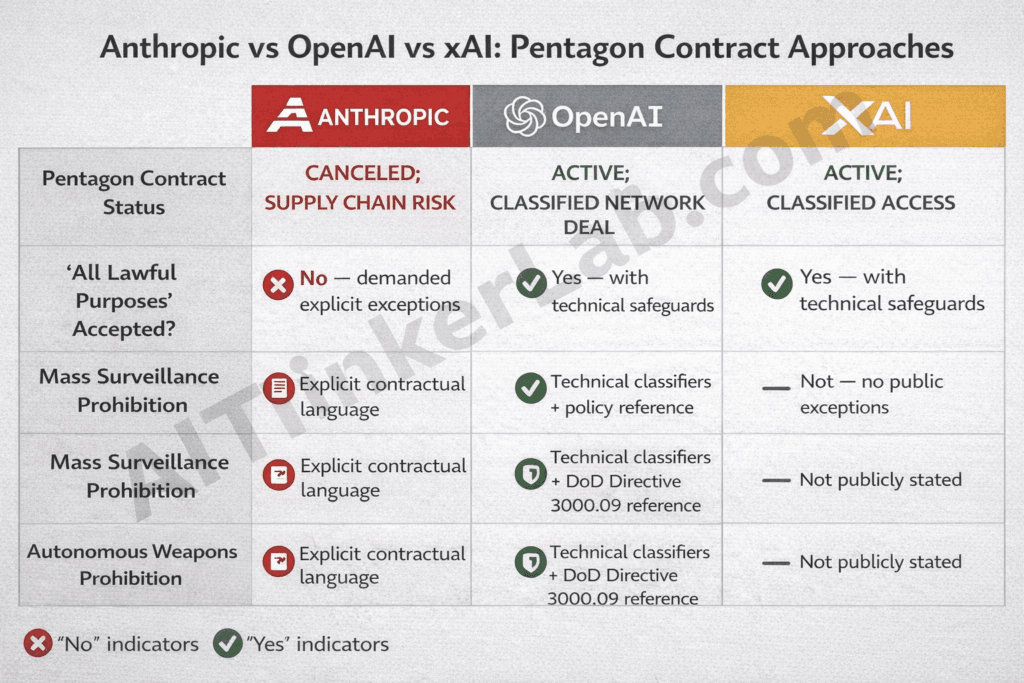

So how did OpenAI secure a deal while Anthropic got blacklisted — supposedly over the same principles? While Anthropic tried to have the limits spelled out explicitly in the contract, OpenAI agreed that the Pentagon could use its tech for “any lawful purpose,” while Altman also says of the limitations that OpenAI “put them into our agreement.” It is unclear exactly how both these things could be true or how the limitations are stated in the agreement.

The difference, as far as can be determined from public information, is one of contractual architecture. Anthropic demanded explicit, enforceable prohibitions written into the contract text. OpenAI accepted the “all lawful purposes” language while building technical guardrails (classifiers, refusal behaviors, forward-deployed engineers) into its deployment. According to OpenAI, that deployment architecture will let it independently verify that its red lines are not crossed, including by running and updating classifiers.

Put differently: Anthropic wanted a legal lock. OpenAI is relying on a technical one. OpenAI said on Saturday that its agreement with the Pentagon “has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.”

That’s a bold claim — and one that deserves serious scrutiny. A technical safety stack is only as strong as the company’s willingness to maintain it under government pressure. A contractual prohibition has teeth in court. The question is which approach actually protects democratic values when the pressure intensifies.

xAI and Google Enter the Arena

While Claude had been the only model used in classified settings, xAI recently signed a contract for classified work under the “all lawful purposes” standard. Negotiations to bring OpenAI and Google into the classified space had also been accelerating, and OpenAI’s deal is the first result.

| Criteria | Anthropic | OpenAI | xAI |
|---|---|---|---|
| Pentagon Contract Status | Canceled; supply chain risk | Active; classified network deal | Active; classified access |
| “All Lawful Purposes” Accepted? | No — demanded explicit exceptions | Yes — with technical safeguards | Yes — no public exceptions |
| Mass Surveillance Prohibition | Explicit contractual language | Technical classifiers + policy reference | Not publicly stated |
| Autonomous Weapons Prohibition | Explicit contractual language | Technical classifiers + DoD Directive 3000.09 reference | Not publicly stated |
| Safety Architecture | Acceptable Use Policy in contract | “Safety stack” — classifiers, refusal systems, deployed engineers | Unknown |
| Enforcement Mechanism | Legal/contractual | Technical/operational | Unknown |

Key takeaway: The fundamental dispute wasn’t over principles; Anthropic and OpenAI both claim the same red lines. It was over enforcement mechanisms: explicit contractual language (Anthropic) vs. technical safeguards with policy references (OpenAI) vs. acceptance of “all lawful purposes” with no publicly stated exceptions (xAI).

Why Anthropic’s Decision Is a Defining Moment for AI Ethics

Strip away the political theater, and you’re left with a question that will define the next decade of AI governance: Can private companies set moral limits on how governments use their technology?

The Core Ethical Question — Can AI Companies Set Moral Boundaries?

Whether AI companies can set restrictions on how the government uses their technology has emerged as a major sticking point in recent months between Anthropic and the Trump administration. What guarantees can a company obtain that its product won’t be used for nefarious purposes, and what corporate responsibilities follow from that?

This isn’t entirely new territory. Defense contractors have long navigated ethical boundaries — Lockheed Martin doesn’t sell fighter jets and then dictate how they’re flown. But AI is fundamentally different. An AI model isn’t a physical weapon with fixed capabilities. It’s a general-purpose reasoning system that can be pointed at virtually any task. The guardrails aren’t in the hardware — they’re in the policy and the code.

Autonomous Weapons — The Line Between Defense and Danger

Anthropic’s objection to fully autonomous weapons is a technical argument, not just a moral one. Current LLMs hallucinate, misinterpret context, and fail in unpredictable ways. Giving such a system autonomous kill authority isn’t just ethically fraught; it’s operationally reckless. The Pentagon’s own DoD Directive 3000.09 (updated January 2023) requires that autonomous and semi-autonomous weapon systems be designed to allow commanders and operators to exercise “appropriate levels of human judgment over the use of force.” Anthropic was, in effect, asking for contractual language that mirrored existing Pentagon policy.

So why did the Pentagon refuse to put it in writing? That’s the question that makes this dispute so unsettling.

Mass Surveillance — Democratic Values at Stake

Amodei wrote in an essay that it is “illegitimate” to use AI for “domestic mass surveillance and mass propaganda” and that AI-automated weapons could greatly increase the risks “of democratic governments turning them against their own people to seize power.” At the OpenAI all-hands, staff were told that the most challenging aspect of the deal for leadership was concern over foreign surveillance, and that there was a major worry about AI-driven surveillance threatening democracy.

This wasn’t a fringe concern. Even OpenAI’s internal leadership acknowledged the risk. The difference is that Anthropic demanded a contractual guarantee, while OpenAI expressed concern privately and accepted a technical approach publicly.

The Power Dynamic — Government vs. Private Enterprise

If the Pentagon were simply unhappy with Anthropic’s conditions for its model, it could terminate the contract and get the AI model it wants from another company, said Alan Rozenshtein, a law professor at the University of Minnesota. “What the government really wants is it wants to keep using Anthropic’s technology, and it’s just using every source of leverage possible,” he said.

That observation cuts to the heart of this: the Pentagon didn’t just want to switch vendors. It wanted to set a precedent that no AI company can dictate terms. Defense officials praised Claude’s capabilities in conversations with Axios, with one admitting it would be a “huge pain in the ass” to disentangle.

Silicon Valley Reacts: Support, Silence, and Self-Interest

AI Industry Rallies Behind Anthropic (Mostly)

The AI industry largely came to Anthropic’s defense, and OpenAI CEO Sam Altman said he shares Anthropic’s concerns about working with the Pentagon. “Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems,” Altman wrote. The Pentagon “agrees with these principles, reflects them in law and policy, and we put them into our agreement.” More than 60 OpenAI employees and 300 Google employees signed an open letter asking their employers to support Anthropic’s position.

But support and solidarity are different things. Altman expressed support for the principles while simultaneously signing a deal that rendered Anthropic’s stand economically moot. That’s not solidarity — that’s opportunism wearing a sympathetic face.

The Chilling Effect on Tech-Government Relations

The Trump administration’s declaration that AI company Anthropic would be cut off from all government contracts shook the tech industry late Friday, hardening political and cultural battle lines across Silicon Valley over military use of artificial intelligence. “This sends a message to the other AI companies that they are negotiating with to make sure they do not attempt to put any sort of restrictions on AI’s uses,” said Connor.

That’s the chilling effect in plain language. The next AI company that considers negotiating ethical guardrails with the Pentagon will look at what happened to Anthropic and think twice.

Impact on Anthropic’s Valuation and Revenue

There’s an ironic possibility here: Anthropic’s principled stand may actually increase its appeal to enterprise customers who value ethical AI governance, particularly in the EU, where the AI Act already prohibits certain AI-enabled surveillance practices (although it exempts military uses from its scope). The European market may see Anthropic not as a supply chain risk, but as the only major AI lab that proved it means what it says.

The Future of AI Ethics: What Anthropic’s Stand Means for 2026 and Beyond

Precedent-Setting Implications for AI Regulation

If the supply chain risk designation survives a legal challenge, it creates a terrifying precedent: the federal government can weaponize procurement classifications to punish companies for exercising contractual negotiation rights. That’s not a slippery slope argument — it’s a direct reading of what happened.

If it doesn’t survive, and multiple legal experts suggest it may not, it will still have served its purpose. The six-month phase-out proceeds. The enterprise chilling effect is already in motion. And every AI company now knows the cost of saying no. The statute also requires the Pentagon to determine that less intrusive measures are not reasonably available to mitigate the risk before making a supply chain risk finding. The speed at which this escalated, from negotiation to blacklisting in less than a week, raises serious questions about whether that requirement was met.

The Evolving AI-Military Complex

Anthropic is not the only AI developer around, but it is the one the Pentagon chose as its partner on classified networks, and switching developers could cause significant technical problems and delays.

The Pentagon needs frontier AI models. That dependency will only deepen as AI becomes embedded in intelligence analysis, logistics, cybersecurity, and operational planning. The government’s leverage is real but temporary: the more AI capabilities become critical to national security, the more the government needs companies like Anthropic, OpenAI, and Google to cooperate willingly.

A Pentagon memorandum issued last month reads: “Diversity, Equity, and Inclusion and social ideology have no place in the DoW, so we must not employ AI models which incorporate ideological ‘tuning’ that interferes with their ability to provide objectively truthful responses to user prompts. The Department must also utilize models free from usage policy constraints that may limit lawful military applications.”

That language reframes safety guardrails as “ideological tuning” — a rhetorical move designed to delegitimize any ethical constraint on military AI use. Whether you agree with that framing tells you a lot about where you stand on the broader question.

Where Does the Industry Go From Here?

Three paths are now visible:

- The OpenAI Path: Accept “all lawful purposes” language but build technical safeguards independently. Maintain the appearance of principles without the contractual enforcement. This is the pragmatic route — and the one most AI companies will likely follow.

- The Anthropic Path: Demand explicit contractual protections and accept the consequences. This preserves legal enforceability but risks government retaliation. It’s the principled route — and the expensive one.

- The xAI Path: Accept all terms without public restrictions. Move fast, ask no questions. This is the compliance-first route — and potentially the most dangerous for democratic governance.

The industry’s collective choice among these paths will determine whether “AI safety” remains a meaningful commitment or becomes empty marketing.

Frequently Asked Questions: Anthropic Pentagon AI Contract Dispute

Why did Anthropic reject the Pentagon’s AI contract?

Anthropic refused to drop its two key demands: no use of its artificial intelligence for fully autonomous weapons (meaning AI, not humans, making final battlefield targeting decisions) and no mass domestic surveillance. Anthropic said the Pentagon’s proposed compromise language included overrides that would render the safeguards meaningless.

How much was the Anthropic Pentagon contract worth?

The contract was worth up to $200 million, and the administration’s decisions capped an acrimonious dispute over it. However, the financial damage from the supply chain risk designation could far exceed the contract value itself due to the ripple effect on enterprise customers.

What is a “supply chain risk” designation?

In addition to the contract cancellation, Anthropic was designated a “supply chain risk,” a classification normally reserved for companies connected to foreign adversaries. It is the first time the U.S. has ever designated an American company a supply chain risk, and the first time the designation has been used in apparent retaliation for a business not agreeing to certain terms. Under this designation, no company doing business with the Pentagon may conduct any commercial activity with Anthropic.

Did OpenAI replace Anthropic at the Pentagon?

OpenAI announced late Friday it reached a deal for the Pentagon to use its AI models in classified systems, just hours after the U.S. government designated Anthropic a “supply chain risk.” OpenAI claims its deal includes even stronger guardrails, though the contractual details remain unclear.

What did President Trump say about Anthropic?

Trump said Anthropic was a “radical Left AI company run by people who have no idea what the real World is all about.” He ordered all federal agencies to cease using Anthropic’s technology, with a six-month transition period for the Pentagon.

Is Anthropic suing the government over the supply chain risk designation?

The company’s statement said the designation is “legally unsound” and would “set a dangerous precedent for any American company that negotiates with the government,” adding that it would challenge the designation in court. Multiple legal experts have questioned whether the statutory requirements for such a designation were properly followed.

Will this affect Anthropic’s business beyond the Pentagon?

The supply chain risk designation means any company that works with the US military would have to prove they don’t touch anything related to Anthropic in their work with the Pentagon. Much of Anthropic’s success stems from its enterprise contracts with big companies — many of which may have contracts with the Pentagon.

What’s the difference between how Anthropic and OpenAI handled Pentagon negotiations?

Anthropic demanded explicit contractual prohibitions on mass surveillance and autonomous weapons — hard legal limits. OpenAI agreed that the Pentagon could use its tech for “any lawful purpose,” while Altman also says of the limitations that OpenAI “put them into our agreement.” OpenAI’s approach relies on technical safeguards (classifiers, refusal behaviors, deployed engineers) rather than enforceable contract language.

The Line in the Sand — Why Anthropic’s Decision Will Echo for Decades

Here’s what this comes down to: Anthropic didn’t just reject a contract. It tested whether an AI company can maintain ethical commitments under maximum government pressure, and showed how high the cost of doing so can be. More precisely, the fight is a debate about constraints on power and, in the eyes of the department’s critics, about its continuing failure to take moral values into account in its practices.

The philosophical question isn’t whether Anthropic was right or wrong; reasonable people can disagree on that. The question is whether AI companies should have the right, and the responsibility, to say “no” when they believe a use of their technology threatens democratic values. If they can’t, then every AI ethics policy at every AI lab is just marketing copy.

Anthropic CEO Dario Amodei has pointed out that the company’s valuation and revenue have only grown since it took a stand against Trump officials. The market may yet reward Anthropic’s position. The courts may vindicate its legal arguments. But even if neither happens, the conversation Anthropic forced, about autonomous weapons, mass surveillance, and who gets to control the most powerful technology in human history, is one the world needed to have.

The next few months will bring court filings, legislative hearings, and a scramble among AI companies to define their relationship with government power. Follow aithinkerlab.com for continuing coverage of this watershed moment in AI governance.

References

- CNN Business — “Anthropic rejects latest Pentagon offer” (Feb 26, 2026)

- Axios — “Anthropic says Pentagon’s ‘final offer’ is unacceptable” (Feb 26, 2026)

- Fortune — “OpenAI sweeps in to snag Pentagon contract after Anthropic labeled ‘supply chain risk'” (Feb 28, 2026)

- The Washington Post — “Pentagon’s Anthropic fight reshapes its relations with Silicon Valley” (Feb 28, 2026)

- CNN Business — “Trump administration orders military contractors and federal agencies to cease business with Anthropic” (Feb 27, 2026)

- Fortune — “OpenAI strikes a deal with the Pentagon” (Feb 27, 2026)

- Bloomberg — “Anthropic Rejects Latest Pentagon Offer, Escalating AI Feud” (Feb 26, 2026)

- OpenAI Official Blog — “Our agreement with the Department of War” (Mar 1, 2026)

- TechCrunch — “Pentagon moves to designate Anthropic as a supply-chain risk” (Feb 27, 2026)