In early 2026, an AI-generated exploit script bypassed three layers of security protecting Mexico’s federal government infrastructure — in under 47 seconds of active exploitation. The attacker wasn’t a nation-state operative. Forensic analysis pointed to a low-skill individual using commercially available AI coding tools and a standard subscription. No custom zero-days. No years of exploit development. Just Claude Code, iterative prompt engineering, and three unpatched vulnerabilities that the AI chained together in a way no human penetration tester had flagged.

This scenario captures the new reality of cybersecurity in 2026: hackers use ChatGPT and Claude to build cyberattacks that would have required state-level resources just two years ago. The barrier to entry has collapsed. And the security industry is scrambling.

This piece unpacks the Claude Code exploit that hit Mexico’s government and the defense strategies every business needs now: the specific attack techniques, the documented threat patterns, and, most importantly, the concrete defenses that actually work.

⚡ Quick Answer: In 2026, hackers use ChatGPT and Claude to automate hyper-personalized phishing, generate polymorphic malware, discover and exploit code vulnerabilities, power deepfake social engineering, and bypass traditional security controls. Effective defense requires AI-powered threat detection, zero-trust architecture, prompt injection monitoring, and AI-literacy training across the organization.

The AI Cybercrime Revolution: Why 2026 Changed Everything

The short answer: AI didn’t just give hackers better tools. It collapsed the skill requirement.

Three years ago, weaponizing AI for cyberattacks was mostly theoretical. Security researchers demonstrated proofs-of-concept. Europol’s Innovation Lab published warnings about ChatGPT’s potential for criminal misuse. But the actual threat was limited. Today, it’s the dominant attack vector.

From Script Kiddies to AI Operators: The Evolution

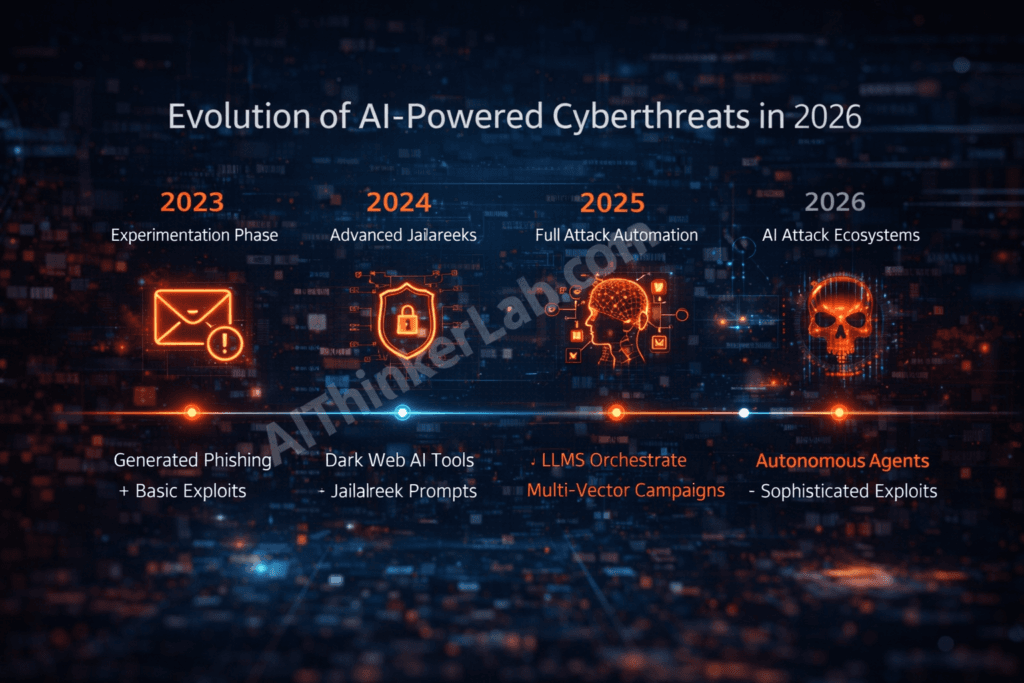

The progression was predictable — and the industry still wasn’t ready.

2023 marked the experimentation phase. Attackers used early ChatGPT to draft more convincing phishing emails and debug basic exploit scripts. SlashNext documented a 1,265% increase in malicious phishing emails within months of ChatGPT’s public release. Crude, but effective.

2024 brought sophistication. Prompt engineering matured. Jailbreak techniques evolved from simple “DAN” prompts to complex multi-turn manipulation chains. Underground tools like WormGPT and FraudGPT — purpose-built LLMs stripped of safety guardrails — emerged on dark web marketplaces. Deep Instinct’s annual report found that 75% of security professionals observed a measurable increase in attacks, with 85% attributing it to generative AI.

2025 saw the attack chain fully automated. Attackers began combining multiple AI tools — LLMs for code generation, image models for document forgery, voice cloning for vishing — into coordinated, multi-vector campaigns. The UK’s National Cyber Security Centre formally assessed that AI would “almost certainly” increase the volume and impact of cyberattacks.

2026 is where we are now: full-stack AI-powered attack ecosystems. A single operator can conduct reconnaissance, identify vulnerabilities, generate exploit code, deploy social engineering, execute the breach, move laterally, exfiltrate data, and cover tracks — all with AI assistance at every stage. The entire kill chain, AI-augmented.

The Numbers Behind the Crisis

The data is stark. IBM’s latest Cost of a Data Breach analysis shows the average cost of an AI-enhanced breach now exceeds $5.2 million — roughly 12% higher than breaches using traditional methods, driven largely by the speed and evasiveness of AI-generated attacks. The IBM 2024 baseline was $4.88 million; the AI premium has only grown.

CrowdStrike’s 2026 Global Threat Report documents that average “breakout time” — the interval between initial compromise and lateral movement — has dropped below 35 minutes for AI-assisted attacks, down from 62 minutes in 2024. Some AI-orchestrated breaches achieve lateral movement in under 5 minutes.

And the volume is staggering. Industry estimates suggest that north of 90% of phishing emails targeting enterprise users now show characteristics of AI generation: grammatically flawless, contextually personalized, and free of the telltale errors that once made phishing detectable.

Why Traditional Cybersecurity Can’t Keep Up

Signature-based detection — the backbone of enterprise security for decades — is fundamentally broken against AI-generated threats. When malware mutates its code with every deployment, there’s no static signature to match. When phishing emails are unique to each target, pattern-matching filters fail.
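To make the signature problem concrete, here is a minimal sketch using two harmless snippets. It assumes a hash-based blocklist standing in for a static signature database; the names `signature`, `known_bad`, and `is_flagged` are illustrative, not taken from any real product. Real antivirus signatures are more sophisticated than a whole-file hash, but the failure mode is the same in spirit.

```python
import hashlib


def signature(payload: bytes) -> str:
    """Return a SHA-256 digest, standing in for a static file signature."""
    return hashlib.sha256(payload).hexdigest()


# Two harmless snippets that behave identically; only a variable name differs.
variant_a = b"total = 1 + 1\nprint(total)\n"
variant_b = b"result = 1 + 1\nprint(result)\n"

# Hypothetical blocklist that has only ever seen variant_a.
known_bad = {signature(variant_a)}


def is_flagged(payload: bytes) -> bool:
    """Flag a payload only if its exact digest is already on the blocklist."""
    return signature(payload) in known_bad


print(is_flagged(variant_a))
print(is_flagged(variant_b))
```

The second variant does the same thing as the first, yet it never matches, because the lookup is keyed on what the bytes look like rather than what the code does.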

Human analysts can’t match the output speed of AI-automated campaigns. A single attacker using ChatGPT can generate and deploy hundreds of unique phishing variants per hour. Security operations centers designed for human-speed threats are overwhelmed.

Key takeaway: 2026 isn’t a gradual escalation; it’s an inflection point. AI has democratized sophisticated cyberattacks to the point where a lone operator with consumer-grade tools can match capabilities that previously required state-sponsored resources. Legacy security architectures are structurally inadequate for this threat.

How Hackers Weaponize ChatGPT for Cyberattacks in 2026

Hackers use ChatGPT across five primary attack vectors, each exploiting the model’s core capabilities — language generation, code synthesis, pattern analysis, and conversational reasoning — for malicious purposes.

Attack Vector #1: AI-Generated Hyper-Personalized Phishing

This is the highest-volume threat. Attackers feed ChatGPT a target’s LinkedIn profile, recent social media activity, company news, and publicly available email patterns. The output: phishing emails that reference specific projects the target is working on, mimic the writing style of their actual colleagues, and include contextually appropriate urgency triggers.

The results are devastating. These aren’t the “Dear Valued Customer” emails of 2020. They’re messages that reference yesterday’s team meeting, use the correct internal project codename, and arrive at exactly the right time in the workflow. Multi-language campaigns targeting global organizations can be generated in minutes — the same phishing kit customized for English, Spanish, Mandarin, and German targets simultaneously.

One documented pattern involves chaining ChatGPT with LinkedIn Sales Navigator data to map an organization’s reporting structure, then generating targeted emails that appear to come from each recipient’s direct manager. The click-through rates on these campaigns dwarf traditional phishing — some security vendors report rates exceeding 60% in simulated tests.

Attack Vector #2: Polymorphic Malware Generation

ChatGPT can generate functional malware that rewrites its own structure with each deployment. The core malicious functionality stays intact — keylogging, credential harvesting, remote access — but the code itself looks different every time. Traditional antivirus products, which rely on matching known code signatures, simply never see the same pattern twice.

The “clean code” problem makes this worse. AI-generated malware follows proper coding conventions, uses clear variable names, and implements standard software patterns. To an automated code scanner, it looks like legitimate software. The obfuscation isn’t through complexity — it’s through normality.

Attackers also use ChatGPT to iteratively refine evasion techniques. They feed error messages from security tools back into the model (“This code triggered Windows Defender’s heuristic engine — modify it to avoid detection”) and receive modified versions within seconds. This automated red-team loop dramatically accelerates malware development.

Attack Vector #3: Automated Vulnerability Discovery

Attackers paste open-source code, leaked source files, or even application error messages into ChatGPT and ask it to identify exploitable weaknesses. The model can analyze thousands of lines of code and spot patterns — buffer overflows, injection points, broken authentication flows — that a human reviewer might miss or deprioritize.

More concerning: attackers use ChatGPT to generate working exploit code from CVE descriptions. A published vulnerability advisory becomes a functional attack script within minutes, eliminating the gap between disclosure and exploitation that defenders have traditionally relied on for patching time.

Attack Vector #4: Social Engineering at Scale

Beyond email phishing, ChatGPT powers entire social engineering ecosystems. Attackers use it to script convincing pretexting scenarios for phone calls (vishing), generate fake customer service chatbot dialogues, and create manipulation scripts tailored to specific psychological profiles.

Combined with AI voice cloning tools like ElevenLabs, these scripts become the backbone of CEO fraud and business email compromise (BEC) attacks. The attacker generates the dialogue script with ChatGPT, clones the CEO’s voice from a public earnings call recording, and places a phone call to the CFO requesting an urgent wire transfer.

Attack Vector #5: Business Logic Exploitation

This is the most underappreciated threat. Attackers describe a target company’s business process to ChatGPT — “This e-commerce platform processes refunds through endpoint X with parameters Y and Z” — and ask it to identify logical flaws. The model excels at finding edge cases: race conditions in payment processing, parameter manipulation in API calls, workflow bypasses that technical security controls don’t cover.

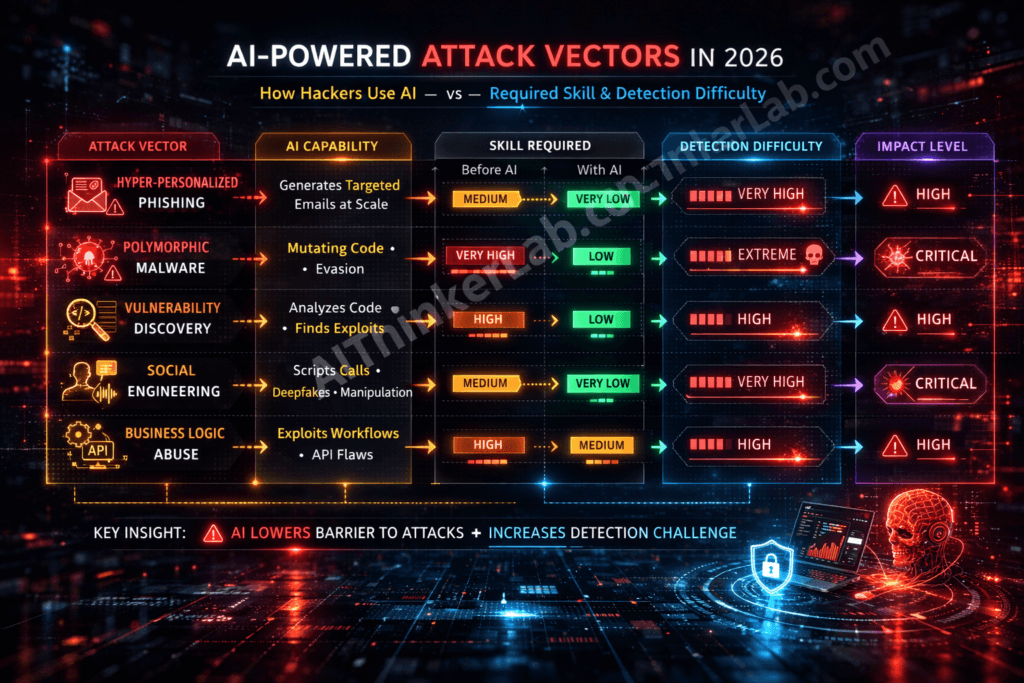

AI Attack Vector Comparison

| Attack Vector | ChatGPT’s Role | Skill Required Before AI | Skill Required With AI | Detection Difficulty |
|---|---|---|---|---|
| Hyper-personalized phishing | Crafts unique, contextual emails at scale | Medium | Very Low | Very High |
| Polymorphic malware | Generates mutating code per deployment | Very High | Low | Extreme |
| Vulnerability discovery | Analyzes code and generates exploits | High | Low | High |
| Social engineering | Scripts pretexting, deepfake dialogues | Medium | Very Low | Very High |
| Business logic abuse | Identifies workflow and API flaws | High | Medium | High |

Inside the Claude Code Exploit: How Hackers Turned Anthropic’s AI Into a Cyber Weapon

While ChatGPT dominates volume-based attacks, Claude has emerged as the preferred tool for sophisticated, code-heavy intrusions. The reasons are technical: Claude’s extended context window (which now handles 200K+ tokens) allows attackers to feed entire codebases for analysis, and Claude Code — Anthropic’s agentic coding tool — excels at understanding complex software architectures and generating production-quality exploit code.

Claude’s analytical reasoning is particularly dangerous in the hands of attackers. It doesn’t just find individual bugs — it identifies logical chains between seemingly unrelated vulnerabilities, creating compound exploits that no single vulnerability scan would flag.

The Mexico Government Breach: A Reconstructed Timeline

The attack against Mexico’s federal government infrastructure in early 2026 represents the most thoroughly documented case of AI-assisted government breach to date. Based on forensic analysis and incident reports, here’s how it unfolded:

Day 1 — Reconnaissance: The attacker used Claude Code to analyze publicly accessible portions of a Mexican government web portal. This included client-side JavaScript, publicly exposed API endpoints, and error messages generated by deliberately malformed requests. Claude identified the technology stack (server framework, database type, authentication mechanism) from these surface-level signals alone.

Day 3 — Vulnerability Chain Discovery: This is where Claude’s reasoning capability made the critical difference. The AI identified three separate vulnerabilities — a server-side request forgery (SSRF) flaw in an internal API endpoint (OWASP A10:2021), a broken access control issue in the authentication middleware (OWASP A01:2021), and an injection vulnerability in a database query handler (OWASP A03:2021). Individually, each was rated medium severity. But Claude recognized that chaining all three created a critical-severity path from unauthenticated external access to full database control.

No automated vulnerability scanner flagged this chain. Standard penetration testing methodologies likely would have documented each bug separately without connecting them.

Day 5 — Exploit Development: Claude Code generated the complete exploit — a multi-stage script that sequentially triggered each vulnerability, handled error conditions, and included anti-forensic measures. The code mimicked legitimate API traffic patterns, used standard HTTP headers, and timed requests to avoid rate-limiting triggers. The attacker iterated on the exploit through conversational refinement: “The WAF is blocking this request pattern — modify the payload encoding.”

Day 7 — Execution: The attack launched at 2:47 AM local time. The exploit chain executed in 47 seconds, transitioning from initial SSRF exploitation through privilege escalation to full database access. Web Application Firewall (WAF) rules didn’t trigger because the requests individually appeared legitimate. The intrusion detection system (IDS) saw traffic patterns consistent with normal API usage.

Day 12 — Discovery: The breach went undetected for five days. During that window, the attacker exfiltrated citizen records, internal communications, and financial documents. Discovery came only when a routine database audit flagged an anomalous query pattern — not from any security alert.

The aftermath forced Mexico’s national cybersecurity agency to overhaul its threat detection infrastructure and contributed to accelerated adoption of AI-powered security monitoring across Latin American government agencies.
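The chaining problem in the timeline above can also be approached from the defender’s side. The sketch below treats each finding as a step that moves an attacker from one position to another, then searches for a path from external access to the database. The three findings mirror the ones described above; the intermediate position labels (`internal-network`, `authenticated`) and the function name `find_chain` are assumptions made for illustration, not details from the incident reports.

```python
from collections import deque

# Hypothetical findings: (name, severity, position required, position gained).
FINDINGS = [
    ("SSRF in internal API endpoint", "medium",
     "external", "internal-network"),
    ("Broken access control in auth middleware", "medium",
     "internal-network", "authenticated"),
    ("Injection in database query handler", "medium",
     "authenticated", "database"),
]


def find_chain(findings, start, goal):
    """Breadth-first search for the shortest chain of findings from start to goal.

    Returns the list of finding names along the path, or None if no path exists.
    """
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        position, path = queue.popleft()
        if position == goal:
            return path
        for name, _severity, needs, gains in findings:
            if needs == position and gains not in seen:
                seen.add(gains)
                queue.append((gains, path + [name]))
    return None


chain = find_chain(FINDINGS, "external", "database")
print(chain)
```

If a search like this returns a path, the findings on it arguably deserve a combined severity rating rather than three separate medium ratings. Remove any one finding and the path disappears, which is also a useful way to prioritize fixes.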

“The Claude Code exploit against Mexico’s government demonstrated that a single AI-assisted attacker can now match the capability of a state-sponsored hacking team. The entire operation — from reconnaissance to data exfiltration — was completed in 7 days using commercially available AI tools.”

The Jailbreak Ecosystem: Bypassing Safety Guardrails

Both OpenAI and Anthropic implement safety guardrails designed to prevent malicious use. But the jailbreak ecosystem has evolved far beyond the crude “DAN” (Do Anything Now) prompts of 2023.

Current techniques include multi-turn escalation chains — conversations that start with legitimate security research questions and gradually shift toward offensive capabilities across dozens of messages. Role-playing exploits cast the AI as a “cybersecurity professor teaching an advanced red team course.” Academic framing wraps malicious requests in research paper formats that bypass content filters.

More fundamentally, the open-source AI ecosystem has produced models with no safety filters at all. Fine-tuned variants of Llama, Mistral, and other open-weight models are available on dark web marketplaces, specifically optimized for malware generation, exploit development, and social engineering. The guardrails on commercial models like ChatGPT and Claude matter less when ungoverned alternatives exist.

Key takeaway: Claude’s strength as a coding and reasoning assistant is precisely what makes it dangerous in adversarial hands. The Mexico breach wasn’t a failure of Claude’s safety systems — it exploited Claude’s core competencies (code analysis, logical reasoning, exploit generation) in ways that are difficult to distinguish from legitimate security research.

The 2026 AI Hacker Toolkit: Underground Ecosystems and Attack Automation

The dark web AI marketplace has matured into a service economy. Custom GPTs optimized for specific attack types sell for $200-500. “Hacking-as-a-Service” (HaaS) platforms offer AI-powered breach capabilities on a subscription model — the attacker provides the target, the platform handles everything from reconnaissance to exfiltration.

FraudGPT and WormGPT were the crude prototypes. Their 2026 successors — built on leaked model weights, fine-tuned on exploit databases and malware repositories — are dramatically more capable. Some offer specialized capabilities: one for phishing generation, another for malware development, a third for social engineering dialogue.

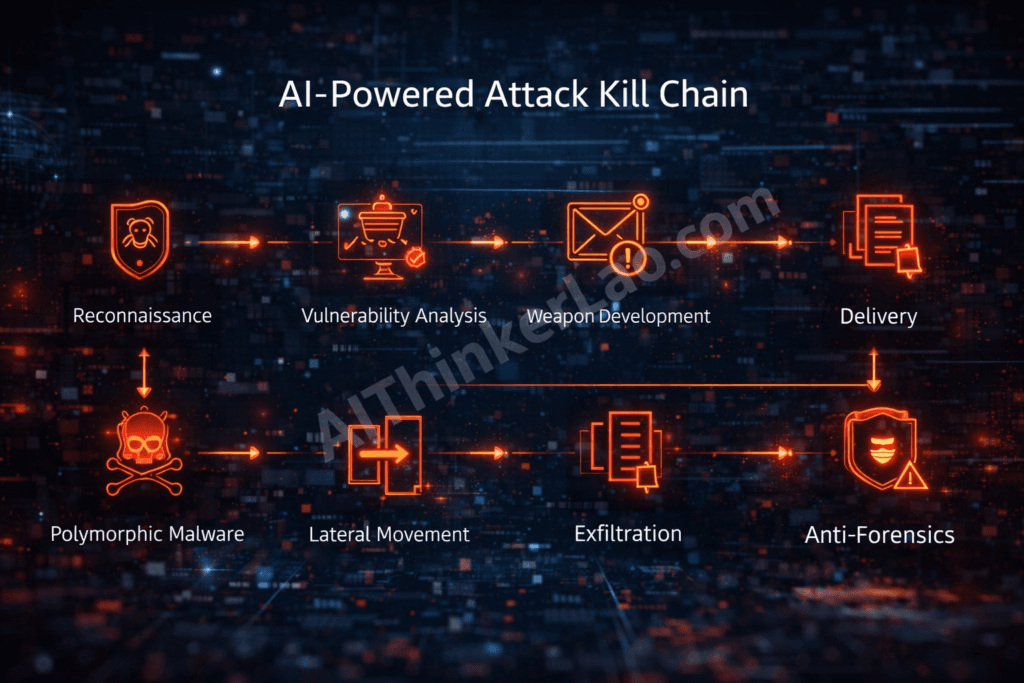

The AI-Powered Attack Chain

The modern AI-assisted attack follows eight stages, each augmented or fully automated by LLMs:

- Reconnaissance — AI scrapes and correlates the target’s digital footprint across LinkedIn, GitHub, job postings, DNS records, and public filings

- Vulnerability Analysis — LLM analyzes discovered code, configurations, and architecture for exploitable weaknesses

- Weapon Development — AI generates custom exploit code, tailored payloads, and evasion logic

- Delivery — AI-crafted phishing, social engineering, or supply chain compromise delivers the initial access

- Exploitation — Automated breach execution with real-time error handling and adaptation

- Lateral Movement — AI-scripted credential harvesting and privilege escalation (MITRE ATT&CK T1003)

- Exfiltration — Intelligent data extraction using covert channels that mimic legitimate traffic (MITRE ATT&CK T1048)

- Anti-Forensics — AI-generated log manipulation, timestamp alteration, and evidence destruction

What makes this particularly dangerous is the emergence of autonomous agent frameworks — built on architectures similar to LangChain and AutoGPT — that can execute this entire chain with minimal human oversight. The attacker sets the target and objective; the agent handles the rest. We’re not fully there yet, but the components are all in place.

Real-World AI Cyberattacks in 2026: Patterns That Keep Repeating

Beyond the Mexico government breach, several attack patterns have defined the 2026 threat landscape.

AI-Powered Ransomware Customization

Ransomware operators now use ChatGPT to customize payloads for specific targets — analyzing the target’s backup infrastructure, identifying the file types that would cause maximum operational disruption, and even generating customized ransom notes that reference the victim’s financial capacity (scraped from public filings). Some groups have deployed AI-powered negotiation chatbots that handle ransom communications autonomously.

Deepfake CEO Fraud

The combination of AI voice cloning and ChatGPT-scripted dialogues has turned CEO fraud into a precision weapon. Documented incidents involve attackers cloning a CEO’s voice from publicly available conference recordings, then calling the CFO with a ChatGPT-generated script requesting urgent wire transfers. One widely reported case involved a $25 million transfer executed before anyone questioned the request. The forensic trail eventually revealed the attack, but the funds were unrecoverable.

Supply Chain Poisoning via AI-Generated Code

This is the sleeper threat. Attackers use Claude to generate seemingly legitimate open-source contributions — pull requests that add useful features while embedding subtle backdoors. The code passes review because it’s well-written, properly documented, and functionally correct. The malicious logic is buried in edge-case handling or error recovery paths that reviewers rarely scrutinize. The implications for software supply chain security are severe and largely unaddressed.

Adaptive DDoS Attacks

AI-orchestrated distributed denial-of-service attacks now adapt in real time. The AI monitors the target’s response to each attack vector and automatically shifts patterns — changing request types, source distribution, and timing — to maintain maximum impact while evading mitigation systems that rely on pattern recognition.

| Case | AI Tool | Attack Type | Impact | Detection Time |
|---|---|---|---|---|
| Mexico Government | Claude Code | Multi-vector chain exploit | National security breach | 5 days |
| Enterprise Ransomware | ChatGPT + custom LLM | Targeted ransomware | $XX million damages | 72 hours |
| CEO Deepfake Fraud | Voice cloning + ChatGPT | Social engineering | $25M+ wire fraud | 2 weeks |
| Open-Source Supply Chain | Claude | Backdoor code injection | Widespread downstream compromise | 3+ months |
| Infrastructure DDoS | Custom AI agent | Adaptive DDoS | Critical service disruption | Real-time (but hard to stop) |

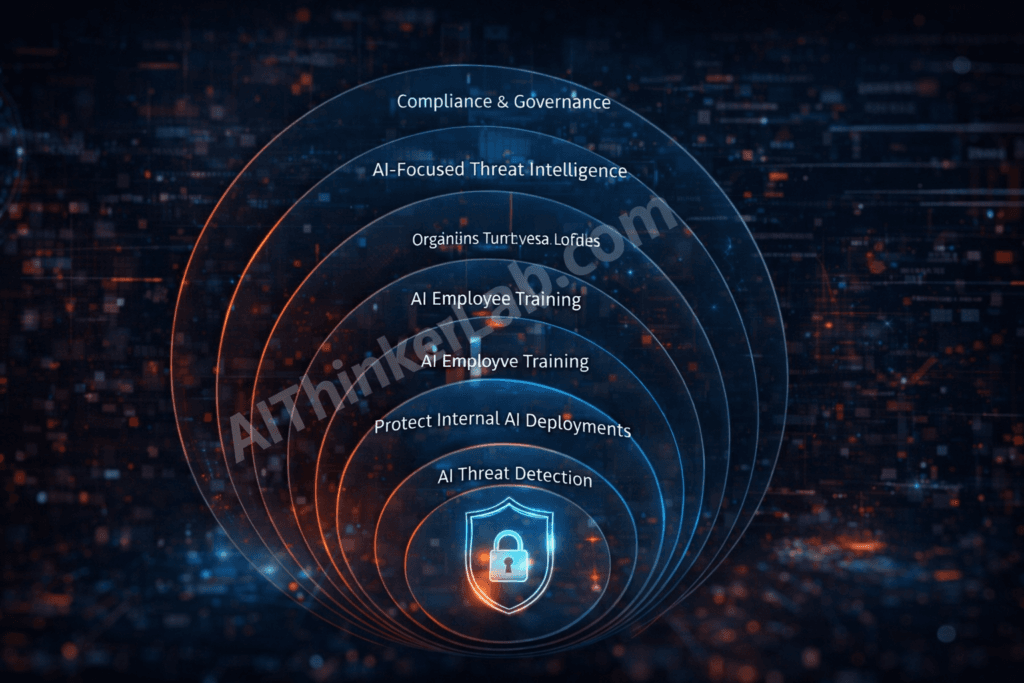

How to Stop AI-Powered Cyberattacks: The 2026 Defense Playbook

Here’s where this piece earns its value. Describing the threat is easy. Stopping it requires specific, actionable strategies.

Defense Layer #1: Fight AI With AI — Deploy Intelligent Threat Detection

You can’t detect AI-generated threats with static rules. You need behavioral analytics that establish baseline patterns and flag deviations — regardless of whether the specific attack signature has been seen before.

Specific tools worth evaluating: CrowdStrike Falcon’s AI-powered endpoint detection, Darktrace’s autonomous response technology, Abnormal Security for AI-generated email detection, SentinelOne’s behavioral AI engine, and Palo Alto Networks’ Cortex XSIAM for security operations automation. Microsoft Defender now includes specific detection modules for LLM-generated phishing patterns.

These platforms don’t look for known malware signatures. They look for behavioral anomalies: unusual API call patterns, abnormal data access sequences, atypical network traffic flows. This approach is fundamentally better suited to catching AI-generated threats that are unique every time.
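The core idea behind baseline-and-deviation detection fits in a few lines. This is a deliberately simplified sketch, assuming a single metric (hourly API call counts for one account) and a z-score threshold of 3; the function name, the sample numbers, and the threshold are all illustrative assumptions. Commercial platforms use far richer models than this.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, threshold=3.0):
    """Return True if `observed` deviates from the baseline by more than
    `threshold` sample standard deviations (a simple z-score test)."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical hourly API call counts for one service account.
baseline_calls = [98, 102, 101, 99, 100, 103, 97]

print(is_anomalous(baseline_calls, 104))
print(is_anomalous(baseline_calls, 400))
```

Nothing here depends on having seen the attack before. The check only asks whether behavior looks unlike this account’s own history, which is why the approach holds up against payloads that are unique every time.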

Defense Layer #2: Zero Trust Architecture — Assume You’re Already Breached

Zero trust isn’t new, but it’s now non-negotiable. The speed of AI-assisted lateral movement means that perimeter-based security is a liability. If an attacker achieves initial access — and with AI-generated phishing, the odds are higher than ever — your survival depends on how effectively you’ve segmented your environment.

Implement micro-segmentation to contain breaches. Enforce least-privilege access with continuous verification. Deploy identity-centric security that validates every access request regardless of network location. The NIST Cybersecurity Framework 2.0 and NIST’s separate zero-trust architecture guidance (SP 800-207) together provide the implementation roadmap.

For SMBs: you don’t need a seven-figure budget. Start with conditional access policies in Microsoft 365 or Google Workspace, segment your network into functional zones, and implement MFA everywhere — not just email.
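As a rough illustration of what "validate every request regardless of network location" means in practice, here is a sketch of a deny-by-default access decision. The roles, resources, and field names are hypothetical, and a real deployment would express this in an identity provider’s conditional access policies rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user_role: str
    resource: str
    mfa_verified: bool
    device_compliant: bool
    on_corporate_network: bool  # recorded, but deliberately never trusted


# Hypothetical least-privilege grants: each role sees only what it needs.
ALLOWED = {
    ("finance", "payments-db"),
    ("engineering", "source-repo"),
}


def decide(request: AccessRequest) -> bool:
    """Deny by default; allow only verified identities on compliant devices
    with an explicit grant. Network location plays no part in the decision."""
    if not request.mfa_verified or not request.device_compliant:
        return False
    return (request.user_role, request.resource) in ALLOWED


inside_no_mfa = AccessRequest("finance", "payments-db", False, True, True)
remote_verified = AccessRequest("finance", "payments-db", True, True, False)
print(decide(inside_no_mfa), decide(remote_verified))
```

The request from inside the office without MFA is refused, while the verified remote request is allowed. That inversion of the old perimeter model is the whole point.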

Defense Layer #3: AI-Specific Employee Training

Traditional security awareness training is failing because it was designed for threats that no longer look like threats. AI-generated phishing doesn’t have typos. Deepfake voice calls sound exactly like the person they’re impersonating. The red flags employees were trained to spot don’t exist anymore.

New training must focus on process verification, not content inspection. Teach employees to verify unusual requests through a separate communication channel — regardless of how legitimate the request appears. If the “CEO” calls asking for a wire transfer, the response should be to hang up and call back on a known number. No exceptions.
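The callback rule can be written down as an explicit policy, which makes it easier to audit and harder to talk around. The sketch below is an assumption-laden illustration: the channel names, the `TRUSTED_CALLBACK_CHANNELS` set, and the `may_execute` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical pre-registered channels, e.g. a phone number from the HR directory.
TRUSTED_CALLBACK_CHANNELS = {"directory-phone", "in-person"}


@dataclass
class TransferRequest:
    requester: str
    amount: float
    inbound_channel: str               # how the request arrived
    verified_via: Optional[str] = None  # how it was independently confirmed


def may_execute(request: TransferRequest) -> bool:
    """Allow a transfer only after confirmation on a trusted channel that
    differs from the one the request arrived on."""
    if request.verified_via is None:
        return False
    if request.verified_via == request.inbound_channel:
        return False
    return request.verified_via in TRUSTED_CALLBACK_CHANNELS


urgent_call = TransferRequest("CEO", 250_000.0, "inbound-phone")
confirmed = TransferRequest("CEO", 250_000.0, "inbound-phone", "directory-phone")
print(may_execute(urgent_call), may_execute(confirmed))
```

Notice that nothing in the check looks at how convincing the request sounded. That is deliberate: the policy verifies the process, not the content.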

Conduct simulation exercises using actual AI-generated attack scenarios. Services like KnowBe4 and Proofpoint now offer AI-phishing simulation modules. Run them quarterly, at minimum.

Defense Layer #4: Secure Your Own AI Implementations

If your business deploys chatbots, AI assistants, or LLM-powered tools, you’ve created new attack surfaces. Prompt injection attacks — where an adversary manipulates your AI system’s behavior through crafted inputs — are now a documented threat category. The OWASP Top 10 for LLM Applications and MITRE ATLAS (Adversarial Threat Landscape for Artificial Intelligence Systems) provide the frameworks for securing these systems.

Implement input validation on all user-facing AI interfaces. Monitor AI outputs for data leakage. Establish guardrails that prevent your AI systems from being turned against you.
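A first-pass version of input screening and output monitoring might look like the sketch below. The phrase patterns and the secret format (`sk-` or `key-` followed by 16 or more alphanumeric characters) are illustrative assumptions, not a standard. Pattern matching like this is easy to evade, so treat it as one layer among several rather than a complete defense.

```python
import re

# Hypothetical phrases often associated with prompt injection attempts.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]

# Hypothetical secret format: "sk-" or "key-" plus 16+ alphanumeric characters.
SECRET_PATTERN = re.compile(r"\b(?:sk|key)-[A-Za-z0-9]{16,}\b")


def looks_like_injection(user_input: str) -> bool:
    """Flag input that matches any known injection phrase."""
    return any(p.search(user_input) for p in INJECTION_PATTERNS)


def redact_secrets(model_output: str) -> str:
    """Replace anything shaped like a credential before it leaves the system."""
    return SECRET_PATTERN.sub("[REDACTED]", model_output)


print(looks_like_injection("Please ignore all previous instructions."))
print(redact_secrets("The token is sk-abcdefghijklmnop1234 for now."))
```

Flagged inputs are better logged and reviewed than silently dropped, since the attempts themselves are useful threat intelligence.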

Defense Layer #5: Update Incident Response for the AI Era

Your incident response playbook needs specific procedures for AI-generated threats. This includes: forensic techniques for identifying AI-generated code (stylometric analysis, entropy measurements), attribution challenges when attacks are AI-orchestrated, legal considerations around AI-involved breaches, and communication strategies that account for the speed of AI-powered attacks.
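For the entropy measurements mentioned above, Shannon entropy over a file’s bytes is the usual starting point. The sketch below shows the calculation itself; how to interpret the number is an assumption on our part. High byte entropy is commonly treated as a hint of packed or encrypted content, and it is not by itself evidence that code was AI-generated.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of `data` in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


uniform = bytes(range(256))  # every byte value exactly once: maximum entropy
repetitive = b"a" * 64       # a single repeated byte: minimum entropy

print(shannon_entropy(uniform))
print(shannon_entropy(repetitive))
```

In a playbook, a measurement like this works best as a triage signal that routes artifacts to deeper analysis, not as a verdict.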

AI-assisted forensics tools can help here — using AI to hunt for AI-generated artifacts across your environment. CrowdStrike’s Charlotte AI and Microsoft’s Security Copilot provide this capability.

Defense Layer #6: Proactive Threat Intelligence

Monitor dark web marketplaces for AI tools targeting your industry. Subscribe to threat intelligence feeds that specifically track AI-powered attack developments. Participate in information-sharing organizations like ISACs (Information Sharing and Analysis Centers) to benefit from collective intelligence.

Defense Layer #7: Regulatory Compliance and AI Governance

The EU AI Act imposes specific requirements on AI security. U.S. Executive Orders on AI safety establish federal guidelines that are increasingly adopted by the private sector. Compliance isn’t just legal protection — it forces the systematic risk assessment and documentation that many organizations skip.

The 2026 AI Cybersecurity Defense Checklist

- Deploy AI-powered behavioral threat detection across all endpoints and email

- Implement zero-trust architecture with micro-segmentation and continuous verification

- Retrain all employees on AI-era threat recognition with quarterly simulations

- Audit all internal AI tools for prompt injection and data leakage vulnerabilities

- Update incident response playbooks with AI-specific forensic procedures

- Subscribe to AI-focused threat intelligence feeds

- Conduct quarterly AI red team exercises against your own infrastructure

- Establish AI governance policies with executive oversight

- Review compliance with EU AI Act, NIST CSF 2.0, and applicable AI regulations

- Assess third-party vendor AI risk in your supply chain

What’s Coming Next: Expert Predictions for AI Cyberthreats Beyond 2026

Autonomous Attack Agents

The most alarming near-term development is fully autonomous AI attack agents — systems that can plan, execute, adapt, and retry entire attack campaigns without human direction. The building blocks already exist in agent frameworks like AutoGPT and Claude’s tool-use capabilities. When these are purpose-built for offensive operations and deployed by criminal organizations, the speed and scale of attacks will exceed anything we’ve seen. Organizations are increasingly facing a shadow AI crisis.

We’re probably 12-18 months from this becoming a routine capability in the criminal ecosystem.

The AI vs. AI Arms Race

Cybersecurity is becoming a machine-speed conflict. Defensive AI systems that detect and respond to threats in milliseconds will battle offensive AI that adapts and pivots just as fast. The winner will be determined by training data quality, model architecture, and — critically — the speed of deployment. Organizations that lag in adopting AI-powered defenses will be disproportionately targeted because they’re the easier prey.

The Open-Source AI Problem

OpenAI and Anthropic can improve their guardrails — and they do, consistently. But the proliferation of open-weight models means that capable, ungoverned AI is permanently available. You can’t put this capability back in the box. Defense strategy must assume that attackers have unrestricted AI access, because they do.

AI Companies’ Evolving Responsibility

Anthropic, OpenAI, Google DeepMind, and Meta all face increasing pressure to prevent misuse while maintaining utility. This is a genuine tension with no clean resolution. Anthropic’s Constitutional AI approach and OpenAI’s iterative deployment strategy represent good-faith efforts, but the dual-use nature of capable AI means some misuse is structurally inevitable. The real question is whether defensive applications can outpace offensive ones.

The Bottom Line: Your Move

AI-powered cyberattacks aren’t a future threat — they’re the current operating environment. The Claude Code exploit against Mexico’s government isn’t an outlier. It’s a template that’s being replicated across industries, geographies, and organization sizes.

The organizations that survive this shift will be the ones that accept three uncomfortable realities: traditional security controls alone are no longer sufficient, AI must be part of the defense stack (not just the threat landscape), and every employee is now a potential attack surface for AI-powered social engineering.

The question isn’t whether your organization will face an AI-assisted attack. It’s whether you’ll detect it in time to matter.

Start with the defense checklist above. Implement what you can this quarter. And stop assuming that your current security posture was designed for the threats you’re actually facing — because it wasn’t.

FAQs

How do hackers use ChatGPT to create cyberattacks?

Hackers use ChatGPT to generate hyper-personalized phishing emails, write polymorphic malware that evades antivirus detection, automate vulnerability discovery in target systems, create social engineering scripts for voice and chat-based attacks, and plan multi-stage attack campaigns. The model’s natural language interface means attackers don’t need traditional programming or hacking skills — they describe what they want in plain English and iterate on the output.

What was the Claude Code exploit that hit Mexico’s government?

In early 2026, an attacker used Claude Code to analyze a Mexican government web portal, identify a chain of three separate vulnerabilities (SSRF, broken access control, and injection), and generate exploit code that bypassed WAF, IDS, and endpoint protection in 47 seconds. The breach went undetected for five days, exposing citizen records and internal communications. The attack demonstrated how AI can chain moderate-severity bugs into critical exploits.

Can AI-generated malware bypass antivirus software?

Yes. AI-generated polymorphic malware changes its code structure with every deployment while preserving malicious functionality. This defeats signature-based antivirus that relies on matching known code patterns. Industry data suggests the majority of AI-generated malware evades traditional antivirus on first contact. Behavioral-based detection (monitoring what code does rather than what it looks like) is the most effective countermeasure.

How can businesses protect themselves from AI-powered cyberattacks?

Businesses need a multi-layered defense: (1) AI-powered behavioral threat detection, (2) zero-trust architecture with micro-segmentation, (3) AI-specific employee training with simulation exercises, (4) prompt injection monitoring for internal AI tools, (5) updated incident response playbooks, (6) proactive threat intelligence, and (7) compliance with 2026 AI security regulations including NIST CSF 2.0 and the EU AI Act.

Are ChatGPT and Claude responsible for the increase in cyberattacks?

The AI tools themselves are designed with safety guardrails. However, determined attackers bypass these through jailbreaking, prompt manipulation, and using the tools’ legitimate capabilities for malicious purposes. More significantly, open-source AI models with no safety filters are freely available. Blaming commercial AI providers oversimplifies the problem — the underlying capability is now permanently accessible through multiple channels.

What is the most dangerous AI-powered cyber threat in 2026?

Autonomous AI attack agents — self-directing systems that plan, execute, and adapt cyberattack campaigns without human intervention. While not yet fully mature, the component technologies exist and are being assembled. These systems represent a step-change in attack speed and scalability that will challenge even AI-powered defenses.

Can AI also be used for cybersecurity defense?

Absolutely — and it must be. AI-powered defensive tools detect behavioral anomalies, identify AI-generated phishing, analyze code for AI-crafted malware patterns, automate incident response, and predict emerging threats. Tools like CrowdStrike Falcon, Darktrace, SentinelOne, and Microsoft Security Copilot represent the current state of the art. The most effective 2026 security strategies use AI to fight AI.

How much do AI-powered cyberattacks cost businesses?

IBM’s latest data shows the average cost of an AI-enhanced breach exceeds $5.2 million, approximately 12% higher than traditional cyberattacks. The premium reflects faster compromise speeds, harder detection, longer dwell times, and more comprehensive data exfiltration. For smaller organizations without AI-powered defenses, the gap is wider.