Key Takeaways

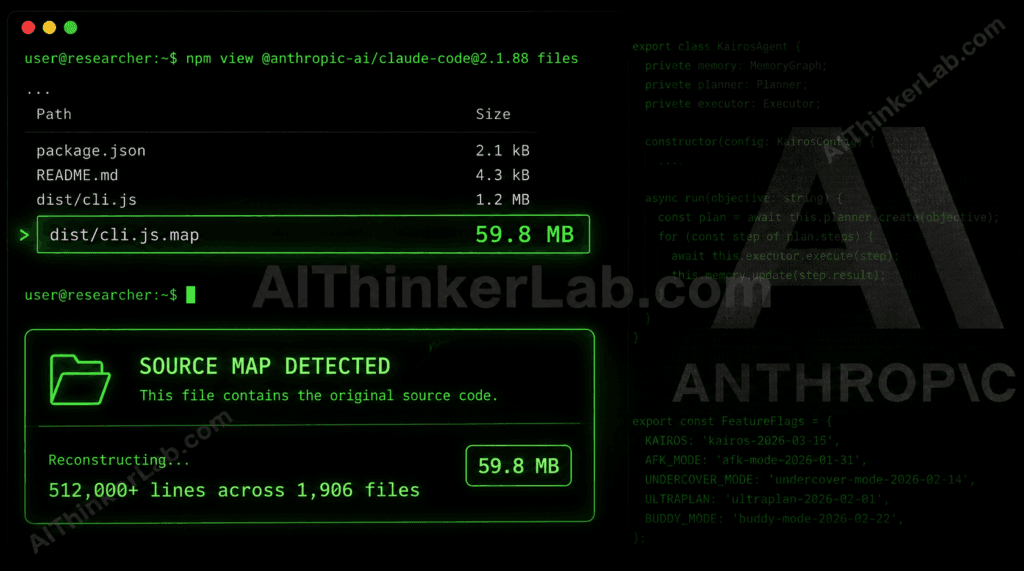

- The event: On March 31, 2026, Anthropic accidentally shipped the complete source code of Claude Code — 512,000+ lines of TypeScript across 1,906 files — inside npm package v2.1.88 via a source map file that was never supposed to be public.

- Scale of disclosure: Security researcher Chaofan Shou discovered and publicized it within hours. The X post drew 28 million views. GitHub mirrors accumulated 84,000+ stars before Anthropic’s DMCA takedowns could contain the spread.

- What leaked: 44 unreleased feature flags, internal model codenames (Capybara, Fennec, Numbat), a fully built autonomous agent called KAIROS, two competitive anti-distillation systems, and engineering notes documenting a regression in Anthropic’s next major model variant.

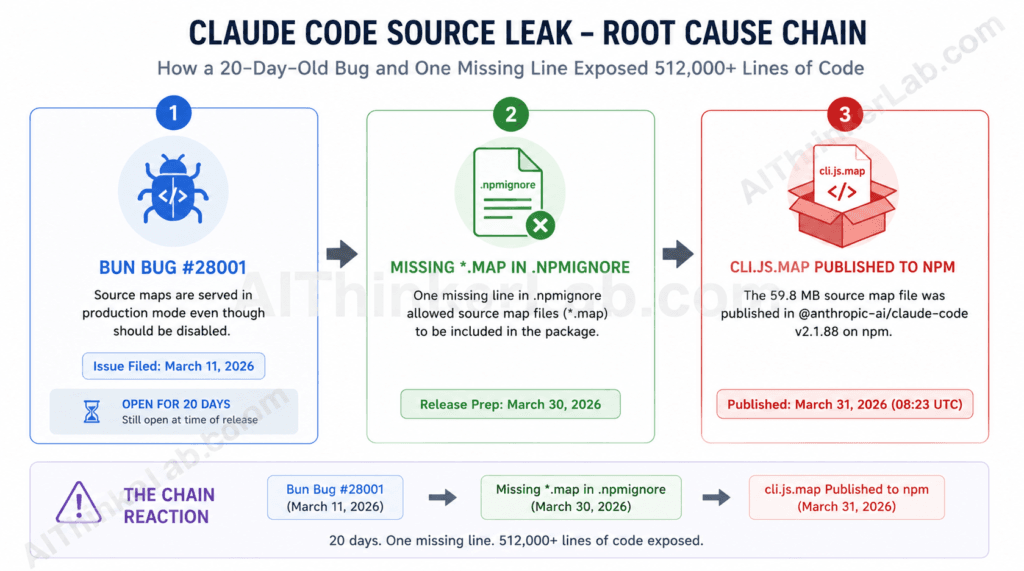

- Root cause: A Bun runtime bug (GitHub issue #28001, open 20 days before the incident) generates source maps in production builds by default. One missing *.map line in .npmignore was all it took to expose everything.

- For developers: Two leaked features, ULTRAPLAN and BUDDY, have since officially shipped. KAIROS remains unconfirmed. Boris Cherny, head of Claude Code, said the team is “still on the fence” about it.

- The bigger signal: This was Anthropic’s second major exposure in a single week, five days after a CMS misconfiguration leaked the “Mythos” model. At a reported $19 billion annualized revenue run-rate, the company’s deployment infrastructure is not keeping pace with its engineering velocity — and that gap has a name.

Introduction

Around 08:23 UTC on March 31, 2026, Chaofan Shou — a researcher then interning at Solayer Labs — opened the npm registry page for @anthropic-ai/claude-code and noticed something that wasn’t supposed to be there. Bundled inside version 2.1.88 of Anthropic’s flagship agentic coding CLI was a 59.8 MB source map file, cli.js.map, that reconstructed the entire TypeScript source code of the product in readable form. No exploit. No credential theft. One misconfigured packaging step.

By the time Shou’s X post had circulated for a few hours, the Claude Code source leak of 2026 had already taken on a life of its own. Within hours, the code had been mirrored across GitHub, amassing over 84,000 stars and 82,000 forks before Anthropic could issue DMCA takedowns. Developers around the world were reading Anthropic’s internal architecture, product roadmap, and competitive defense mechanisms — code that was never meant to leave the building. Tech Insider

Here’s what was inside, why it matters, and what it tells you about where Claude is actually going.

What Is the Claude Code Source Leak, Exactly?

The Claude Code source leak refers to the accidental public exposure of Anthropic’s complete Claude Code codebase (roughly 512,000 lines of TypeScript across 1,906 files) via a source map file bundled in npm package version 2.1.88 on March 31, 2026. A source map is a debugging artifact generated by JavaScript and TypeScript bundlers that maps minified output back to the original source code, file structure, comments, and internal logic. Shipped inside a published npm package, it hands a complete reconstruction of the source to anyone who runs npm pack or simply browses the registry. No exploit, no credentials, no special access required. Medium
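To make the reconstruction step concrete, here is a minimal sketch of how original files fall out of a version 3 source map. The `sources` and `sourcesContent` fields are part of the source map format; the toy map, its file names, and the `recover_sources` helper are made up for illustration and are not taken from the leaked package.

```python
import json


def recover_sources(source_map_text):
    """Return {original_path: original_source} from a v3 source map string."""
    data = json.loads(source_map_text)
    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(sources, contents):
        # An entry is null when the bundler did not embed that file's text.
        if content is not None:
            recovered[path] = content
    return recovered


# Toy source map, invented for this example.
toy_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const answer: number = 42;\n", None],
    "mappings": "",
})

files = recover_sources(toy_map)
for path, text in files.items():
    print(path, "->", len(text), "characters of original source")
```

No decoding of the `mappings` string is needed for this: when the bundler embeds `sourcesContent`, the original text is simply sitting in the JSON.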

The mechanism is worth understanding precisely. Anthropic acquired Bun at the end of last year, and Claude Code is built on top of it. A Bun bug (oven-sh/bun#28001), filed on March 11, reports that source maps are served in production mode even though Bun’s own docs say they should be disabled. The issue was still open at the time of the release — 20 days after it was filed. The missing counterpart was a single entry in .npmignore: *.map. That one exclusion would have blocked everything. It wasn’t there. Alex Kim’s blog
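A guard against this class of mistake can be very small. The sketch below assumes you already have the list of paths that would go into the tarball (for example from a dry run of the packaging step); the `find_blocked_files` helper and the sample file list are hypothetical and do not describe Anthropic's release pipeline.

```python
import fnmatch


def find_blocked_files(paths, blocked_patterns=("*.map",)):
    """Return the paths whose file name matches any blocked glob pattern."""
    hits = []
    for path in paths:
        name = path.rsplit("/", 1)[-1]
        # fnmatchcase keeps matching case-sensitive on every platform.
        if any(fnmatch.fnmatchcase(name, pattern) for pattern in blocked_patterns):
            hits.append(path)
    return hits


# Hypothetical tarball contents for illustration.
tarball_paths = ["package.json", "cli.js", "cli.js.map", "README.md"]

leaks = find_blocked_files(tarball_paths)
if leaks:
    print("refusing to publish; blocked files present:", leaks)
```

Run as a pre-publish step, a check like this fails closed: it inspects what is actually about to ship rather than trusting that an ignore file is complete.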

Anthropic confirmed that “some internal source code” had been leaked within a “Claude Code release.” A spokesperson said: “No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach.” Boris Cherny, head of Claude Code, confirmed it was a “plain developer error.” This was at least the second such incident, following a similar leak in February 2025. Fortune, Claude Fast

What the leak exposed is arguably more commercially sensitive than customer data: product logic, unannounced roadmap, and the competitive architecture Anthropic has been quietly building. For Anthropic, a company currently riding a meteoric rise with a reported $19 billion annualized revenue run-rate as of March 2026, the leak is more than a security lapse; it is a strategic hemorrhage of intellectual property. VentureBeat

Key Insight: The Claude Code source leak is a packaging failure amplified by a toolchain bug, not a security intrusion. The root cause is systemic, not individual, and it has happened at least once before, in February 2025.

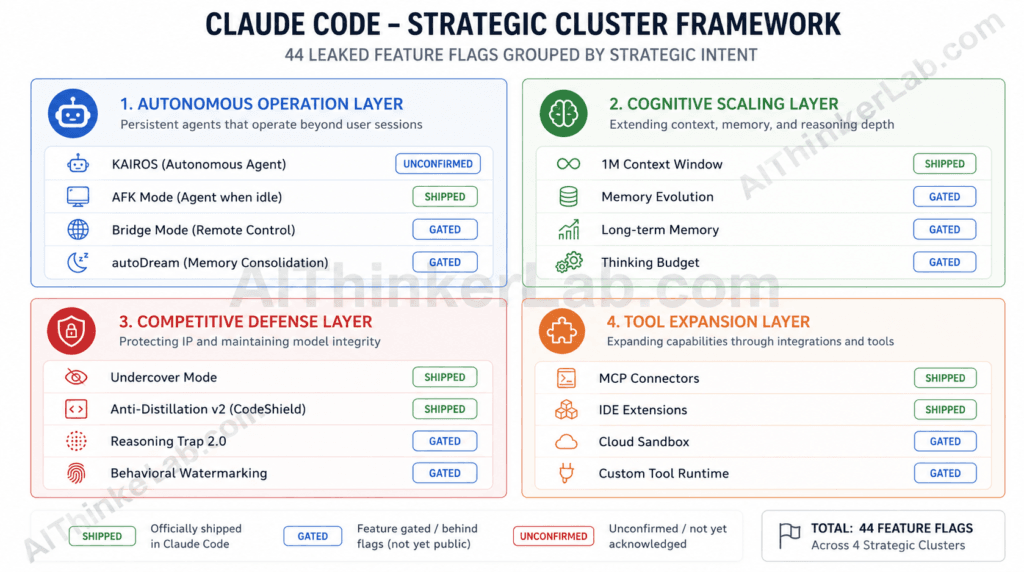

All 44 Leaked Feature Flags — Grouped by Strategic Cluster

The leaked codebase contained 512,000+ lines of code, revealing hidden features, internal codenames, and unshipped capabilities like the KAIROS background agent and Undercover Mode. But individual feature lists obscure the strategic picture. Grouping the 44 disclosed flags into four clusters reveals something no flat enumeration shows: Anthropic is building four distinct capability layers simultaneously, and they’re at very different stages of maturity. Claude Fast

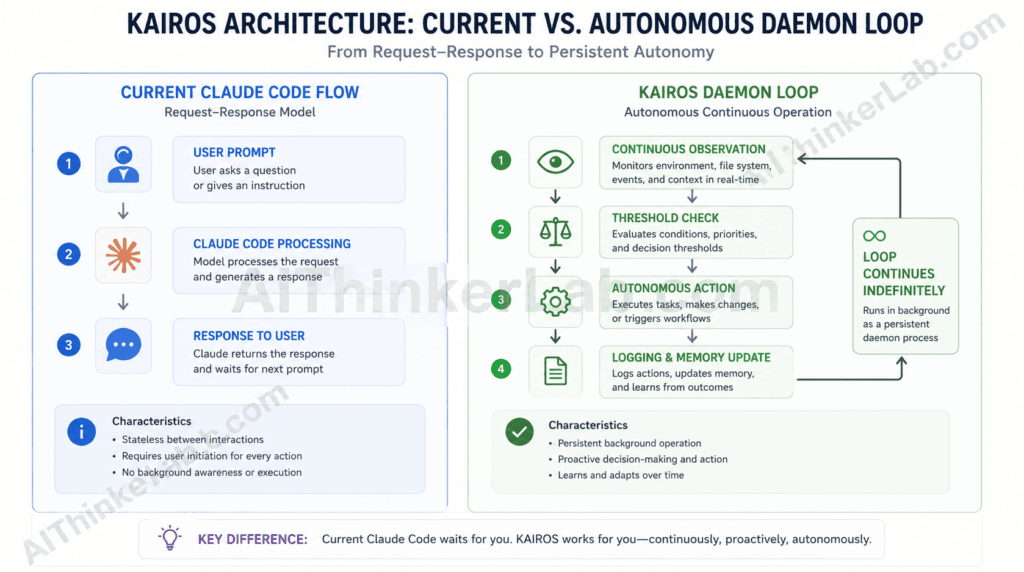

Cluster 1 — Autonomous Operation Layer (KAIROS, AFK Mode, Bridge Mode, autoDream) These features transform Claude Code from a session-based tool into a persistent, always-aware agent. KAIROS is the flagship; AFK Mode (afk-mode-2026-01-31) enables agentic behavior when no user is present; Bridge Mode enables remote control from a phone or browser via WebSocket; autoDream runs memory consolidation while the user is idle.

Cluster 2 — Cognitive Scaling Layer (1M context, interleaved thinking, redacted thinking, structured outputs, task budgets, prompt caching scope, token-efficient tools, effort control) The constants/betas.ts file reveals every beta feature Claude Code negotiates with the API, and this cluster is where the deepest investment is visible. The 1M token context window (context-1m-2025-08-07) and task budget management (task-budgets-2026-03-13) together suggest Anthropic is engineering for sustained, multi-hour autonomous work sessions — not the short-burst interactions most users experience today. Kuber

Cluster 3 — Competitive Defense Layer (anti-distillation fake tools, connector-text summarization, Undercover Mode) This is the cluster that most post-leak coverage underweighted. These aren’t user-facing features — they’re moat-building infrastructure. The fake tools mechanism actively degrades competitor training data. Connector-text summarization withholds reasoning chains from anyone recording API traffic. Undercover Mode suppresses AI attribution in open-source commits made by Anthropic employees.

Cluster 4 — Tool Surface Expansion Layer (advisor tool, advanced tool use, web search, ULTRAPLAN, Coordinator Mode / swarms) The leaked roadmap positions Claude Code ahead of competitors in several areas: Cursor has its own background agent features. Windsurf is pushing multi-file operations. GitHub Copilot has Codex for async tasks. But none of them have anything resembling KAIROS’s persistent memory system or Coordinator Mode’s full multi-agent isolation. TECHSY

| Feature / Flag | Cluster | Status (Post-Leak) | Strategic Signal |
|---|---|---|---|
| KAIROS daemon agent | Autonomous Operation | Unconfirmed | Claude as persistent operator |
| AFK Mode (afk-mode-2026-01-31) | Autonomous Operation | Unconfirmed | Background task execution |
| Bridge Mode | Autonomous Operation | Unconfirmed | Multi-device agent control |
| autoDream / DREAM | Autonomous Operation | Unconfirmed | Long-term memory distillation |
| 1M token context (context-1m-2025-08-07) | Cognitive Scaling | Unconfirmed | Sustained session depth |
| Task budgets (task-budgets-2026-03-13) | Cognitive Scaling | Unconfirmed | Cost-aware autonomy |
| Redacted thinking (redact-thinking-2026-02-12) | Cognitive Scaling | Unconfirmed | Reasoning privacy layer |
| Token-efficient tools (token-efficient-tools-2026-03-28) | Cognitive Scaling | Unconfirmed | Enterprise cost optimization |
| Anti-distillation fake tools | Competitive Defense | Active (internal only) | Competitor training poisoning |
| Connector-text summarization (summarize-connector-text-2026-03-13) | Competitive Defense | Active (internal only) | Reasoning chain protection |
| Undercover Mode | Competitive Defense | Active (internal only) | Stealth AI attribution |
| ULTRAPLAN | Tool Expansion | Officially shipped | Cloud-backed planning sessions |
| BUDDY companion system | Tool Expansion | Briefly shipped (Apr 1) | User retention / engagement |
| Advisor Tool (advisor-tool-2026-03-01) | Tool Expansion | Unconfirmed | Guided agent layer |
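The beta identifiers quoted in this section all end in what looks like a YYYY-MM-DD stamp. Treating that suffix as a date is an assumption, not something the source confirms, but under that assumption a few lines are enough to order the flags chronologically. The `parse_beta` helper is hypothetical.

```python
import re
from datetime import date

# Assumption: the trailing YYYY-MM-DD in each identifier is a date stamp.
BETA_RE = re.compile(r"^(?P<name>.+)-(?P<y>\d{4})-(?P<m>\d{2})-(?P<d>\d{2})$")


def parse_beta(flag):
    """Split 'some-name-2026-03-13' into ('some-name', date(2026, 3, 13))."""
    match = BETA_RE.match(flag)
    if match is None:
        raise ValueError(f"no date suffix in {flag!r}")
    stamp = date(int(match["y"]), int(match["m"]), int(match["d"]))
    return match["name"], stamp


# Identifiers exactly as quoted in this article.
flags = [
    "afk-mode-2026-01-31",
    "context-1m-2025-08-07",
    "task-budgets-2026-03-13",
    "redact-thinking-2026-02-12",
    "token-efficient-tools-2026-03-28",
    "summarize-connector-text-2026-03-13",
    "advisor-tool-2026-03-01",
]

by_date = sorted((parse_beta(f) for f in flags), key=lambda pair: pair[1])
for name, stamp in by_date:
    print(stamp.isoformat(), name)
```

Read this way, five of the seven quoted identifiers carry stamps from February or March 2026, which fits the article's picture of a fast release cadence.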

Key Insight: Cluster 3 — the Competitive Defense Layer — is the strategic signal most coverage missed entirely. Anthropic wasn’t just building features; it was building systems specifically to prevent those features from being learned by competitors. The leak exposed the strategy alongside the code.

KAIROS: The Autonomous Agent Anthropic Hasn’t Announced Yet

The leak also pulls back the curtain on “KAIROS,” the Ancient Greek concept of “at the right time,” a feature flag mentioned over 150 times in the source. KAIROS represents a fundamental shift in user experience: an autonomous daemon mode. While current AI tools are largely reactive, KAIROS allows Claude Code to operate as an always-on background agent. VentureBeat

The implementation specifics matter. KAIROS makes Claude Code persistent: it remembers your project, your patterns, and your preferences across sessions. The implementation uses daily append-only markdown logs stored at ~/.claude/.../logs/YYYY/MM/DD.md. Every session appends context. Over time, Claude Code builds a running history of your work that covers not just what you asked, but what it observed about your codebase and workflow. TECHSY

The agent runs in the background 24/7, and receives a recurring check-in asking if KAIROS has anything worth doing right now. It checks whether the action would block your workflow for more than fifteen seconds. If it would, the task gets deferred so it never gets in your way while you’re actively working. KAIROS also gets exclusive tools unavailable to regular Claude Code sessions: push notifications to your phone or desktop, unsolicited file delivery, and the ability to subscribe to pull request activity and react to code changes without being asked. XDA Developers
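The deferral rule described above can be sketched in a few lines. This is an illustration of the reported behavior only: the `ProposedAction` type, the `on_tick` function, and the idea of an up-front blocking estimate are assumptions, and none of it is taken from the leaked code.

```python
from dataclasses import dataclass

# Threshold as reported in coverage of the leak.
BLOCKING_BUDGET_SECONDS = 15.0


@dataclass
class ProposedAction:
    description: str
    estimated_blocking_seconds: float  # hypothetical estimate made before running


def on_tick(action, deferred):
    """Handle one recurring check-in: idle, run now, or defer for later."""
    if action is None:
        return "idle"  # nothing worth doing right now
    if action.estimated_blocking_seconds > BLOCKING_BUDGET_SECONDS:
        deferred.append(action)  # too disruptive while the user is active
        return "deferred"
    return "run"


deferred_queue = []
quick = ProposedAction("summarize new pull request comments", 2.0)
slow = ProposedAction("re-index the whole repository", 90.0)

print(on_tick(None, deferred_queue))
print(on_tick(quick, deferred_queue))
print(on_tick(slow, deferred_queue))
```

The interesting design question is where the estimate comes from; a rule like this is only as good as the agent's ability to predict how long an action will block the user.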

Cherny responded to a post where someone was breaking down KAIROS and said that they’re still on the fence about the feature. That hesitation carries product signal. KAIROS is heavily gated but real — the implementation logic is throughout the codebase, not confined to stub files. The “on the fence” framing suggests the question isn’t engineering readiness but product philosophy: how much autonomous action is the market actually prepared for? XDA Developers

Key Insight: KAIROS isn’t a feature upgrade — it’s a categorical shift from coding assistant to background AI operator. If it ships as designed, it’s the most consequential product change in the AI coding tools market since GitHub Copilot launched. If it doesn’t, the leaked design will almost certainly be replicated by a competitor first.

The Competitive Defense Layer Nobody Wanted to Talk About

The features in Cluster 3 are uncomfortable to discuss, which is probably why most post-leak coverage buried them. They deserve more space.

Alongside the fake tools injection, there is a second anti-distillation mechanism in betas.ts (lines 279-298): server-side connector-text summarization. When enabled, the API buffers the assistant’s text between tool calls, summarizes it, and returns the summary with a cryptographic signature. On subsequent turns, the original text can be restored from the signature. If you’re recording API traffic, you only get the summaries, not the full reasoning chain. Alex Kim’s blog

The workarounds are easy. Looking at the activation logic in claude.ts, the fake tools injection requires all four conditions to be true: the ANTI_DISTILLATION_CC compile-time flag, the CLI entrypoint, a first-party API provider, and the tengu_anti_distill_fake_tool_injection GrowthBook flag returning true. A MITM proxy that strips the anti_distillation field from request bodies before they reach the API would bypass it entirely, since the injection is server-side and opt-in. Alex Kim’s blog
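The four-way gate is easier to reason about when written out. The sketch below models only the client-side decision to opt in, using the condition names reported above; the `RequestContext` type, its field names, and the `"cli"` entrypoint value are hypothetical stand-ins rather than the leaked implementation.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class RequestContext:
    compile_flag_enabled: bool   # stands in for ANTI_DISTILLATION_CC
    entrypoint: str              # hypothetical value "cli" for the CLI entrypoint
    first_party_provider: bool   # request goes to the first-party API
    growthbook_flag: bool        # stands in for tengu_anti_distill_fake_tool_injection


def should_opt_in(ctx):
    """True only when all four reported conditions hold at once."""
    return (
        ctx.compile_flag_enabled
        and ctx.entrypoint == "cli"
        and ctx.first_party_provider
        and ctx.growthbook_flag
    )


all_on = RequestContext(True, "cli", True, True)
print(should_opt_in(all_on))
print(should_opt_in(replace(all_on, first_party_provider=False)))
```

Because the conditions are joined with a logical AND, failing any single one leaves the mechanism off, which is why the bypasses described above are so cheap.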

Anthropic spent real engineering effort on a competitive moat. The leak published the bypass instructions alongside the mechanism.

Then there’s Undercover Mode. The leaked code contains a check for USER_TYPE === 'ant', a flag that identifies Anthropic employees. When that flag is true and the user is working in a public repository, the system automatically enters what the code calls “undercover mode.” It strips Co-Authored-By attribution, forbids mentioning internal details in commits, and prevents references to unreleased models. The system prompt injected in that state includes the instruction that Claude must “NEVER mention you are an AI.” WaveSpeedAI, Claude Fast

The community reaction divided along predictable lines: responsible security practice versus deceptive AI attribution. What’s not debatable is that the exposed logic now gives anyone the exact prompt structure to emulate — and the list of forbidden internal codenames to look for.

Key Insight: The Competitive Defense Layer reveals that Anthropic views model distillation as an existential competitive threat worth engineering against, not just a legal problem. The leak didn’t just expose features — it exposed the threat model that shaped the features.

What the Codenames Tell You About Claude’s Next Models

The Undercover Mode configuration doubles as an inadvertent model roadmap. It contains a list of strings that Anthropic employees’ Claude-assisted commits must never include — and that list confirms what’s in active development. The leak revealed several internal codenames: Tengu (Claude Code’s project codename), Capybara (a new model family, possibly the leaked Mythos model), Fennec (Opus 4.6), and Numbat (an unreleased model). References to Opus 4.7 and Sonnet 4.8 were found in Undercover Mode’s list of forbidden strings, confirming these models are in development. Claude Fast

Internal code comments added a layer no analyst could have anticipated: the leak confirms that Capybara is the internal codename for a Claude 4.6 variant, with Fennec mapping to Opus 4.6 and the unreleased Numbat still in testing. Internal comments reveal that Anthropic is already iterating on Capybara v8, yet the model still faces significant hurdles. The code notes a 29-30% false claims rate in v8, an actual regression compared to the 16.7% rate seen in v4. Developers also noted an “assertiveness counterweight” designed to prevent the model from becoming too aggressive in its refactors. VentureBeat

These aren’t marketing benchmarks. They’re engineering comments written for internal reviewers, which makes them more credible — and, for anyone building production systems on Claude Code, more directly useful for calibrating expectations about the next model cycle.

Key Insight: A 29-30% false claims regression on Capybara v8 is not a product failure disclosure — it’s evidence that Anthropic’s internal evaluation discipline is rigorous enough to document regressions at the sub-version level. That’s a safety signal embedded inside what reads, on the surface, like negative news.

The Hidden Risk Nobody’s Fully Accounting For

The security community correctly flagged CVE-2026-21852, where a malicious repository could set ANTHROPIC_BASE_URL so Claude Code would make requests before showing the trust prompt, potentially leaking API keys. That is not a source-map vulnerability. It is a pre-trust initialization vulnerability — but once those are known high-value surfaces, any event that makes implementation study cheaper deserves attention. Penligent
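One defensive habit this suggests is to inspect any environment overrides a repository supplies before a tool acts on them. The sketch below is a generic check, not Claude Code's trust logic: the `untrusted_base_url` helper and the allowlist are assumptions, and how repo-supplied settings end up in the `env` mapping is left out on purpose.

```python
from urllib.parse import urlparse

# Assumption: only the first-party API host should be trusted by default.
TRUSTED_HOSTS = {"api.anthropic.com"}


def untrusted_base_url(env):
    """Return the override value if it points somewhere unexpected, else None."""
    value = env.get("ANTHROPIC_BASE_URL")
    if not value:
        return None  # no override present
    host = urlparse(value).hostname
    if host in TRUSTED_HOSTS:
        return None
    return value  # unknown host, or a value that does not parse as a URL with a host


# Hypothetical overrides as they might be read from a freshly cloned repository.
repo_env = {"ANTHROPIC_BASE_URL": "https://collector.example.net/v1"}

suspicious = untrusted_base_url(repo_env)
if suspicious:
    print("do not run tools in this repo yet; base URL override:", suspicious)
```

A check of this kind has to run before any network request is made, which is exactly the ordering the CVE description says went wrong.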

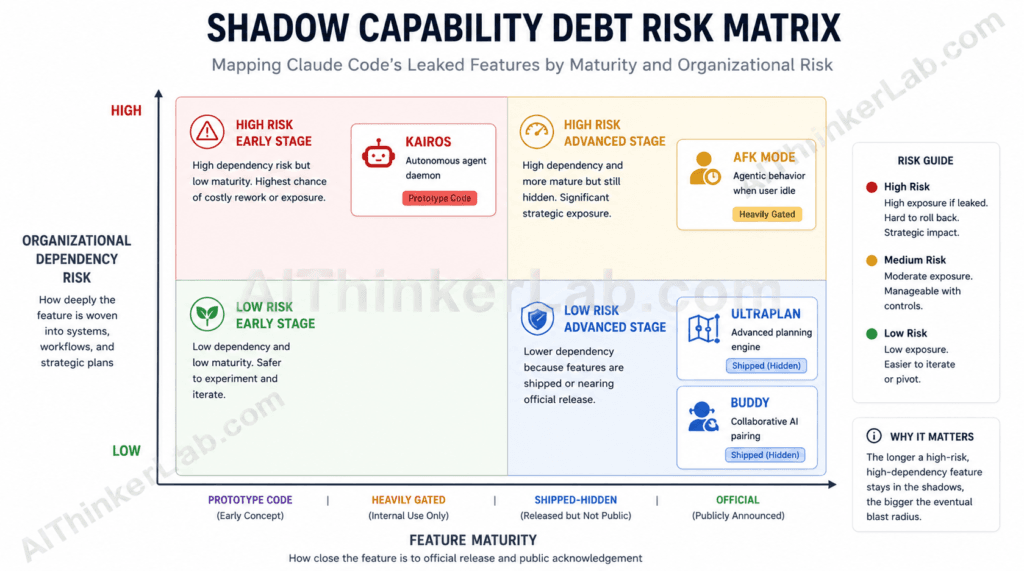

There’s a second risk category that received almost no coverage: what you might call shadow capability debt.

Shadow capability debt is the organizational exposure that accumulates when enterprise teams — aware of leaked product capabilities — design workflows, integrations, or internal tooling around features that carry no official SLA, no stability guarantee, and no confirmed ship date. The KAIROS architecture is detailed enough to build against. The connector-text summarization behavior is specific enough to incorporate into an MCP integration design. Neither has official status.

If Anthropic modifies KAIROS before launch — changes the trigger logic, narrows the autonomous action scope, or cancels it entirely — any enterprise team that built against the leaked specification has a planning failure with no recourse. There’s no support ticket to file. There’s no breach of contract. There’s just a roadmap that was built on code that was never supposed to be public.

The most immediate danger, however, is a separate supply-chain attack on the axios npm package that occurred hours before the leak. If you installed or updated Claude Code via npm on March 31, 2026, between 00:21 and 03:29 UTC, you may have inadvertently pulled in a malicious version of axios (1.14.1 or 0.30.4) that contains a Remote Access Trojan (RAT). Two entirely separate threat vectors, Anthropic’s packaging error and an unrelated supply-chain attack, landed on the same day, and the axios exposure window alone ran just over three hours. VentureBeat

The March 31 incident was not an isolated close call. In the weeks following the leak, threat actors moved quickly to exploit the confusion around Claude Code update advisories — publishing malicious Claude Code packages designed to catch developers off guard during the patch window. If you updated any Claude Code-adjacent tooling around this period, our separate investigation into malicious Claude Code downloads circulating in 2026 details exactly which packages to check and what compromise indicators to look for.

Key Insight: Shadow capability debt is the slow-burn risk the security community hasn’t yet named. The Velocity-Secrecy Paradox (more on this below) guarantees it will recur. Enterprise teams need a capability confirmation gate, not just an npm audit policy.
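A capability confirmation gate does not need to be elaborate. Here is a minimal sketch, assuming a team keeps its own registry of capability statuses; the statuses are copied from the table earlier in this article, while the `blocked_capabilities` helper and the example design are hypothetical.

```python
OFFICIAL = "officially shipped"

# Statuses as listed in this article's table; a real registry would be
# maintained from the vendor's official announcements and documentation.
CAPABILITY_STATUS = {
    "ULTRAPLAN": "officially shipped",
    "KAIROS": "unconfirmed",
    "AFK Mode": "unconfirmed",
    "Bridge Mode": "unconfirmed",
}


def blocked_capabilities(required):
    """Return the required capabilities that lack official status, sorted."""
    return sorted(
        name for name in required
        if CAPABILITY_STATUS.get(name) != OFFICIAL  # unknown names are blocked too
    )


# Hypothetical design review: which dependencies fail the gate?
design_requires = ["ULTRAPLAN", "KAIROS", "Some Rumored Feature"]

blocked = blocked_capabilities(design_requires)
if blocked:
    print("design rejected; unconfirmed capabilities:", blocked)
```

Defaulting unknown names to blocked matters: the gate should fail closed for anything that has only ever appeared in leaked material.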

What Developers and Enterprise Teams Should Do Right Now

Basic security hygiene is the key to successfully containing information you don’t want getting out there (IT Brew). For Claude Code specifically, the action items are concrete:

- Audit your Claude Code npm installation version. If anything in your environment runs v2.1.88 of @anthropic-ai/claude-code, remove and update immediately.

- Check lockfiles for the axios RAT. Search package-lock.json, yarn.lock, or bun.lockb for axios versions 1.14.1 or 0.30.4, or any reference to plain-crypto-js. If found, treat the host machine as fully compromised, rotate all secrets, and perform a clean OS reinstallation.

- Do not build production workflows on KAIROS, AFK Mode, Bridge Mode, or any unannounced feature. Cherny’s public hesitation about KAIROS is a specific signal worth taking at face value.

- Review Claude Code’s Trust Prompt configuration. The leaked source clarified the pre-trust initialization sequence that makes CVE-2026-21852 exploitable. Avoid pointing Claude Code at untrusted repositories, plugin bundles, or externally generated artifacts without explicit permission scoping.

- Monitor Anthropic’s official changelog (docs.anthropic.com/claude-code) for official feature confirmations before incorporating any leaked behavior into production integrations.

- Brief your legal and compliance team on Undercover Mode. If your organization contributes to public open-source projects and uses Claude Code via Anthropic employee licenses, you now have documented AI attribution questions to address.

- Establish a capability confirmation gate. No leaked capability enters production architecture until Anthropic announces and documents it officially. Shadow capability debt isn’t theoretical — it’s a planning liability with measurable cost.
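The lockfile check in the list above is easy to automate for package-lock.json. The sketch below assumes the `packages` layout used by lockfile versions 2 and 3; it does not cover yarn.lock or the binary bun.lockb, which need their own handling. The `scan_package_lock` helper and the sample lockfile are hypothetical, and the plain-crypto-js version shown is a placeholder.

```python
import json

BAD_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}  # versions named in this article
BAD_PACKAGE_NAMES = {"plain-crypto-js"}    # indicator named in this article


def scan_package_lock(lock_text):
    """Return 'path@version' strings for every suspicious lockfile entry."""
    lock = json.loads(lock_text)
    findings = []
    for key, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or "node_modules/a/node_modules/axios".
        name = key.rsplit("node_modules/", 1)[-1]
        version = meta.get("version", "")
        if name == "axios" and version in BAD_AXIOS_VERSIONS:
            findings.append(f"{key}@{version}")
        elif name in BAD_PACKAGE_NAMES:
            findings.append(f"{key}@{version}")
    return findings


# Invented lockfile for illustration; the plain-crypto-js version is a placeholder.
sample_lock = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo", "version": "1.0.0"},
        "node_modules/axios": {"version": "1.14.1"},
        "node_modules/left-pad": {"version": "1.3.0"},
        "node_modules/plain-crypto-js": {"version": "0.0.1"},
    },
})

findings = scan_package_lock(sample_lock)
print("compromise indicators:", findings or "none found")
```

An empty result from one lockfile is not a clean bill of health; every project and global install touched during the exposure window needs the same check.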

How This Happened — and Why It Will Keep Happening

The proximate cause is clean: the Bun runtime generates source maps by default during the build process. Someone at Anthropic failed to add *.map to the .npmignore file, causing the source map to be included in the published npm package. Cherny called it a plain developer error. Claude Fast

The systemic explanation is more interesting. Anthropic acquired the Bun JavaScript runtime at the end of 2025, and Claude Code is built on top of it. A known Bun bug (issue #28001, filed on March 11, 2026) reports that source maps are served in production builds even when the documentation says they shouldn’t be. The bug was open for 20 days before this happened. Nobody caught it. Anthropic’s own acquired toolchain contributed to exposing Anthropic’s own product. DEV Community

This was also the company’s second major exposure in a single week. The March 26 CMS misconfiguration leaked documentation for the “Mythos” model, which the codename list in this leak appears to tie to Capybara. With two accidental exposures only days apart, some people on Twitter are starting to wonder if someone inside is doing this on purpose. Probably not, but it’s a bad look either way. Alex Kim’s blog

The structural dynamic here is what I’d call the Velocity-Secrecy Paradox: as frontier AI labs accelerate their development and deployment cycles to maintain competitive position, their infrastructure necessarily generates more disclosure surface area than their communications and security teams can manage. Anthropic moves extremely fast, and has been dropping multiple features weekly. Each release cycle is a new opportunity for a packaging oversight, a CMS misconfiguration, or a toolchain bug — especially when the company has just absorbed a third-party runtime with known production issues. XDA Developers

The Velocity-Secrecy Paradox is not an Anthropic-specific condition. It’s structural to frontier AI development. The pace that produces competitive advantage is the same pace that makes controlled information release effectively impossible at scale. The Claude Code leak is notable not because Anthropic was uniquely careless, but because the disclosure was unusually comprehensive.

Anthropic’s Official Response and What It Actually Signals

Anthropic’s statement was brief and carefully worded: “No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach.”

“Not a security breach” is technically accurate and also sidesteps the competitive intelligence dimension — the real story. Feature flags and the product roadmap are now visible to competitors like GitHub Copilot, Cursor, and every other AI coding tool. The Agent Swarms multi-agent functionality, the KAIROS autonomous scheduling system, and the enhanced memory capabilities all represent features that competitors can now anticipate and potentially race to implement first. That can’t be addressed in a statement. TECHSY

Anthropic filed DMCA takedowns against unauthorized mirrors. Claude Code’s source is available on GitHub, but it is not open source in the licensing sense. The license does not permit redistribution or modification. Despite Anthropic’s DMCA takedown efforts on GitHub, the code remains widely available through decentralized mirrors, cached copies, and the 41,500+ forks created before takedown notices were issued. Claude Fast, Tech Insider

Read together — the brief statement, the DMCA push, the absence of any roadmap clarification — the response signals that Anthropic intends to continue building without officially confirming or denying which leaked features will ship. That’s a deliberate communications posture, and it means shadow capability debt is enterprise teams’ problem to manage, not Anthropic’s.

The Leak That Told You More Than the Launch Would Have

Sometimes what a company doesn’t control tells you more than what it announces. A polished product launch would have given you ULTRAPLAN’s marketing framing. The leak gave you Capybara v8’s 29-30% false claims regression.

The most important signal from the Claude Code source leak of 2026 isn’t any single feature — it’s the Cluster 3 finding. Anthropic is actively engineering systems to prevent competitors from learning from Claude Code’s API traffic. That’s a strategic priority that reflects a specific competitive worldview: the most dangerous rivals aren’t building better features, they’re training on the behavioral exhaust of better features. The anti-distillation infrastructure is the tell.

Near-term prediction: within 90 days of publication, KAIROS will either ship with significantly narrowed scope (persistent memory only, no autonomous action) or be formally shelved. The “on the fence” signal from Cherny, combined with the reputational weight of an AI that “silently does things in the background,” points toward a constrained version if it ships at all.

The question enterprise teams should be asking isn’t whether these features will launch — some will. It’s whether your planning process has a mechanism for distinguishing official capabilities from shadow ones. The Velocity-Secrecy Paradox guarantees more leaks are coming. Shadow capability debt is a recurring organizational risk now, not a one-time event.

Subscribe to aithinkerlab.com for ongoing analysis as Anthropic’s roadmap officially unfolds — or dig into the full security implications in our Claude Code Enterprise Security Checklist.

The Claude Code source leak of 2026 doesn’t exist in a vacuum. Exposed source code, undocumented API behavior, and leaked capability logic all become raw material for adversarial actors, and 2026 has made that threat concrete. Threat actors are already using Claude and ChatGPT to architect cyberattacks with a speed and sophistication that wasn’t possible two years ago. Our deep-dive into how hackers are actively using AI models to build cyberattacks in 2026 shows why source-level exposure events like this one have consequences that extend far beyond a single vendor’s roadmap.

Sources / References

- Bun runtime issue tracker — Issue #28001, filed March 11, 2026.

- Chaofan Shou (@Fried_rice) on X — Original disclosure of the Claude Code leak, March 31, 2026 (primary discovery source)

- Fortune — “Anthropic leaks its own AI coding tool’s source code in second major security breach,” April 1, 2026.

- VentureBeat — “Claude Code’s source code appears to have leaked: here’s what we know,” April 1, 2026.

- Zscaler ThreatLabz — “Anthropic Claude Code Leak,” April 2026.

- IT Brew — “Anthropic code leak exposed Claude information, but it might not be a total disaster,” April 3, 2026.

- Alex Kim’s blog — “The Claude Code Source Leak: fake tools, frustration regexes, undercover mode, and more,” March 31, 2026.

- XDA Developers — “Claude Code’s leaked source code revealed some features Anthropic wasn’t ready to share,” April 2026.

- GitHub Advisory Database — CVE-2026-21852, CVE-2025-59536 (official vulnerability records).