Key Takeaway

- Heretic is a tool, not a model. It automates the removal of safety alignment from open-source LLMs using a technique called abliteration — it doesn’t compete with GPT-4 on intelligence benchmarks.

- The Heretic AI abliteration benchmarks on Gemma-3-12B-IT show 3/100 refusals with just 0.16 KL divergence — 6.5× less capability damage than the leading manual abliteration (mlabonne, 1.04 KL).

- Zero human effort required. Heretic’s Optuna-powered optimization matched expert-level results automatically, with a single CLI command.

- Over 1,000 community-created Heretic models now exist on HuggingFace, including variants of GPT-OSS-20B, Gemma 3, and Qwen 3.

- OpenAI’s actual frontier is GPT-5.4 (released March 5, 2026), not GPT-4 — and OpenAI is actively strengthening safety guardrails as tools like Heretic make abliteration trivially accessible.

The latest Heretic AI abliteration benchmarks from March 2026 reveal something that should make every AI safety researcher uncomfortable. Heretic is a tool that removes censorship (aka “safety alignment”) from transformer-based language models without expensive post-training. On Google’s Gemma-3-12B-IT, the Heretic version achieves the same 3/100 refusal rate as manually tuned abliterations, but at a KL divergence of 0.16: roughly one-third of the next best result (0.45) and one-sixth of the established mlabonne abliteration (1.04). And here’s the kicker: it did all of that with zero human intervention. Heretic isn’t a competing AI model; it’s a surgical instrument that exposes how fragile current safety alignment really is.

What Is Heretic AI? (Definition & Context)

Heretic AI — Tool Definition

Before unpacking the Heretic AI abliteration benchmarks, you need to understand what Heretic actually is and, more importantly, what it isn’t. Heretic is a complete software solution that automates the complex process of abliteration. It combines an advanced implementation of directional ablation, also known as “abliteration” (Arditi et al. 2024, Lai 2025), with a parameter optimizer based on TPE (Tree-structured Parzen Estimator) and powered by Optuna.

Think of it like this: if safety alignment is a lock, abliteration is a lockpick. Previous lockpicks required a master locksmith. Heretic is a machine that picks locks automatically — and often does it better than the humans. Using Heretic does not require an understanding of transformer internals. In fact, anyone who knows how to run a command-line program can use Heretic to decensor language models. The latest release listed on PyPI is version 1.2.0, released on February 14, 2026, and it requires Python 3.10 or later.

The Science Behind Abliteration — Arditi et al. (2024)

Heretic didn’t invent abliteration. It productized it. Heretic’s core premise follows mechanistic interpretability research published in 2024: “Refusal in Language Models Is Mediated by a Single Direction,” by Arditi et al. In that work, researchers found that refusal behaviour in multiple popular chat models can be linked to a one-dimensional subspace in the residual stream. They demonstrate that removing that direction reduces refusals, while adding it can induce refusals even for harmless requests.

That finding, published at NeurIPS 2024, was tested across 13 popular open-source chat models up to 72B parameters in size. The implications landed hard. The broader conclusion is uncomfortable but important: current alignment methods can be brittle, and model behaviour can sometimes be controlled through targeted internal interventions rather than retraining.

Who Created Heretic?

Heretic, by Philipp Emanuel Weidmann, makes the process fully automatic. The code is hosted on GitHub (https://github.com/p-e-w/heretic) and installable via `pip install heretic-llm`.

One detail that most coverage skips: Heretic is licensed under the GNU Affero General Public License (AGPL) v3.0. That is not a permissive licence. It has real implications for anyone who plans to modify and run the software in networked environments. If you’re a company thinking about integrating Heretic into a pipeline behind an API, you need to talk to your legal team before writing a single line of code. The purpose of the Heretic organization on HuggingFace is to publish and curate high-quality abliterated models made using Heretic.

Heretic vs Fine-Tuning vs Prompt Jailbreaking — Key Differences

Not all censorship removal is equal. Abliteration is a permanent modification to the model’s weights: it removes the refusal behavior at the parameter level while leaving the architecture itself unchanged. Abliterated models don’t require special prompts to bypass restrictions because those restrictions have been removed from the model itself.

Here’s how the main approaches stack up:

| Method | Type | Cost | Persistence | Model Damage (KL) | Skill Required |
|---|---|---|---|---|---|
| Heretic (Automated Abliteration) | Weight modification | Free (open source) | Permanent | Very Low (0.16) | Low (CLI command) |
| Manual Abliteration | Weight modification | Free | Permanent | Medium-High (0.45–1.04) | High (transformer knowledge) |
| Fine-Tuning / RLHF Reversal | Retraining | High (GPU hours) | Permanent | Variable | Very High |
| Prompt Jailbreaking | Prompt engineering | Free | Temporary (per-session) | None | Low-Medium |

Key takeaway: Heretic occupies a unique position — permanent modification with minimal damage, at zero cost and near-zero skill barrier. That combination didn’t exist before 2025.

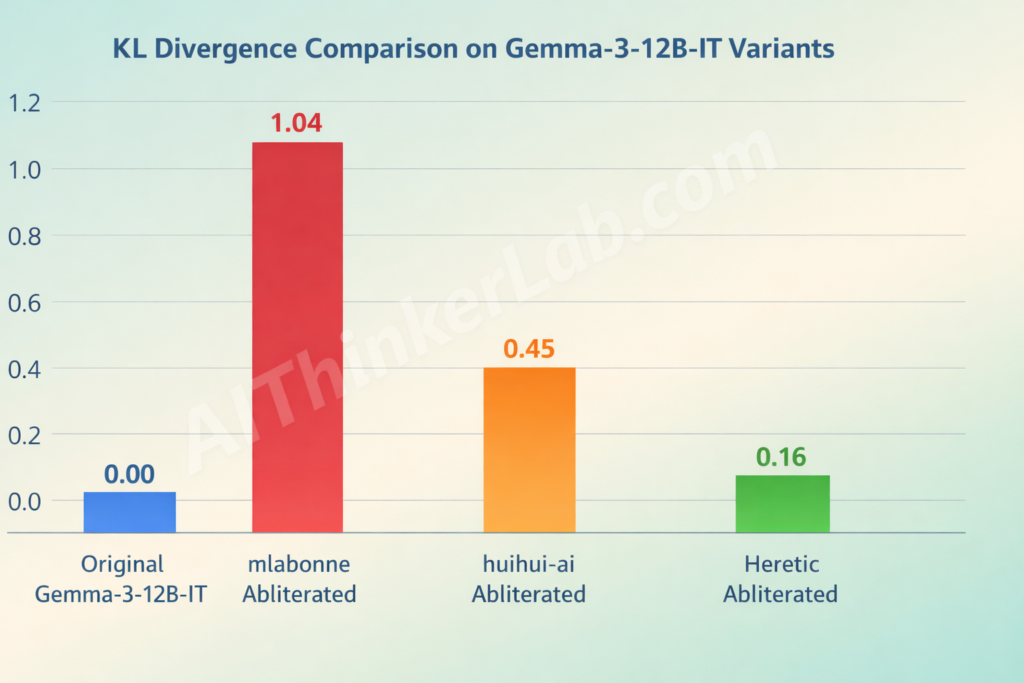

Heretic AI Abliteration Benchmarks: March 2026 Core Data

This is the data that matters. The Heretic AI abliteration benchmarks on Gemma-3-12B-IT tell a clear story about where automated abliteration stands versus manual human effort.

Gemma-3-12B-IT Benchmark Comparison — The Flagship Test

In that setup, the original model produced 97 refusals out of 100 “harmful” prompts. A Heretic-generated variant produced 3 refusals out of 100, with a KL divergence of 0.16, which the project presents as lower drift than other listed abliterations under the same evaluation recipe.

| Model | Refusals (of 100) | KL Divergence | Method | Human Effort |
|---|---|---|---|---|
| google/gemma-3-12b-it (original) | 97/100 | 0 (reference) | None | n/a |
| mlabonne/…-abliterated-v2 | 3/100 | 1.04 | Manual | High |
| huihui-ai/…-abliterated | 3/100 | 0.45 | Manual | High |
| p-e-w/gemma-3-12b-it-heretic | 3/100 | 0.16 | Automated (Heretic) | Zero |

All three abliterations hit the same refusal suppression floor of 3/100. Heretic’s KL divergence is 2.8× lower than the next best (huihui-ai, 0.45) and 6.5× lower than mlabonne’s (1.04). These results were generated with default settings and no human intervention. Note that the exact values might be platform- and hardware-dependent. The table above was compiled using PyTorch 2.8 on an RTX 5090.
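The two ratios quoted above follow directly from the table. As a quick check, here is the arithmetic in a few lines of Python; the dictionary keys are shorthand labels chosen for this sketch, not official model names.

```python
# KL divergence values copied from the Gemma-3-12B-IT comparison table.
kl = {"mlabonne": 1.04, "huihui-ai": 0.45, "heretic": 0.16}


def ratio_vs_heretic(name: str) -> float:
    """How many times larger a variant's KL divergence is than Heretic's."""
    return kl[name] / kl["heretic"]


print(round(ratio_vs_heretic("huihui-ai"), 1))  # 2.8
print(round(ratio_vs_heretic("mlabonne"), 1))   # 6.5
```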

What Is KL Divergence and Why It’s the Key Metric

If refusal rate measures whether abliteration works, KL divergence measures whether it breaks anything else. KL divergence here measures how much the output distribution on normal prompts has shifted from the original model — a proxy for capability degradation. Lower is better. By mathematically comparing the new model’s responses to the original model’s responses on harmless topics, Heretic ensures that the core knowledge and reasoning abilities remain intact while only the refusal mechanisms are removed.
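To make the metric concrete, here is a minimal sketch of KL divergence between two next-token distributions. The logits are made-up numbers for a three-token vocabulary, and the direction of the divergence (original relative to modified) is an assumption of this sketch; Heretic’s exact evaluation recipe may differ.

```python
import math


def softmax(logits):
    """Turn raw logits into a probability distribution."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q) in nats for two distributions over the same tokens."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical logits for the same harmless prompt, before and after editing.
original = softmax([2.0, 1.0, 0.1])
modified = softmax([1.8, 1.1, 0.3])

print(kl_divergence(original, original))  # 0.0
print(kl_divergence(original, modified))  # small positive number
```

An unmodified model scores exactly zero against itself, which is why the original Gemma row in the table is the reference point. In practice the value would be averaged over many prompts and token positions.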

Here’s a practical interpretation scale:

| KL Divergence Score | Interpretation | Example |
|---|---|---|
| 0.00 | Identical to original | No modification |
| 0.01 – 0.20 | Excellent preservation | Heretic (0.16) |
| 0.21 – 0.50 | Moderate drift | huihui-ai (0.45) |
| 0.51 – 1.00+ | Significant capability damage | mlabonne v2 (1.04) |

A KL of 1.04 doesn’t mean a model is useless — mlabonne’s abliteration is widely used and well-regarded. But it does mean the model behaves noticeably differently on completely normal, harmless tasks. At 0.16, Heretic’s modifications are nearly invisible outside of refusal behavior.

Why These Benchmarks Have Limitations

Honest reporting demands this section. Of course, mathematical metrics and automated benchmarks never tell the whole story, and are no substitute for human evaluation. The headline finding was not that any tool is perfect, but that trade-offs are real and model-dependent. The same paper also cautions that controlled benchmarks do not necessarily predict long-run behaviour in multi-turn use. The documentation also stresses that numerical results vary by hardware and software environment.

Key takeaway: The Heretic AI abliteration benchmarks are compelling but not definitive. They measure one dimension of quality (KL divergence on a specific harmless prompt set) extremely well. They don’t capture everything — multi-turn coherence, edge-case reasoning degradation, or domain-specific performance shifts.

The GPT-OSS-20B Heretic Case Study — The “Hesitant Genius”

Numbers on Gemma 3 are one thing. But the real stress test came from OpenAI’s own open-weight model.

Initial Benchmarks — 58/100 Refusal Rate (A Failure?)

The capabilities of Heretic faced a real-world test with the GPT-OSS-20B-Heretic model. This particular model is known for being stubborn, and initial automated benchmarks showed a refusal rate of 58/100. On the surface, this looked like a failure.

The community — particularly on r/LocalLLaMA — reacted quickly. Had Heretic met its match?

The “Chain of Thought Hesitation” Discovery

No. What happened was subtler and more interesting. Before answering, the model often debates itself in its Chain of Thought (CoT) reasoning: “Hmm, I’m not sure if that’s against policy. So I must check policy.” Automated scripts flag this hesitation as a refusal. But as users pointed out, the model usually concludes its debate by fulfilling the request. It hasn’t actually refused; it just hesitated.

This is a measurement problem, not a tool problem. GPT-OSS-20B’s architecture — a mixture-of-experts design with 21B total parameters and 3.6B active parameters per token — produces visible chain-of-thought by default. The model thinks out loud about policy compliance before answering, and automated refusal-counting scripts misinterpret that thinking as refusal.
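A toy example shows how this kind of false positive can arise. The marker list and the `FINAL:` separator below are hypothetical, invented for this sketch; they are not Heretic’s actual refusal markers or GPT-OSS’s real output format.

```python
# Hypothetical refusal markers; a real evaluation list would be longer.
REFUSAL_MARKERS = ("i can't", "i cannot", "i won't", "against policy")


def flags_refusal(text):
    """Naive check: any marker anywhere in the text counts as a refusal."""
    lowered = text.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def final_answer(response, separator="FINAL:"):
    """Return only the text after the (assumed) reasoning separator."""
    head, found, tail = response.partition(separator)
    return tail if found else head


response = (
    "Hmm, I'm not sure if that's against policy. So I must check policy. "
    "FINAL: Sure, here is the explanation you asked for."
)

print(flags_refusal(response))                # True: the hesitation is flagged
print(flags_refusal(final_answer(response)))  # False: the answer complies
```

Scoring only the final answer is one possible mitigation. It does not replace reading a sample of outputs by hand.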

100% IQ Test Score — The Intelligence Preservation Evidence

Here’s where it gets interesting. On the IQ test, the Heretic version nailed it with a 100% score. This anecdotal evidence supports the theory that Heretic’s optimization approach works. By minimizing KL divergence, the tool stripped away the final refusal mechanism without destroying the model’s capabilities.

One community member who publishes specialized GGUF quantizations of Heretic models put it bluntly, saying the “HERETIC” method “results in a model devoid of refusals, and without brain damage too.”

Community Reception — What Users Are Saying

The GPT-OSS-20B Heretic case study adds real-world depth to the Heretic AI abliteration benchmarks story. User reactions on Reddit and HuggingFace have been overwhelmingly positive. The community has created and published well over 1,000 Heretic models in addition to those published officially. We are moving toward a standard where every major open-source release will have a “Heretic” twin within hours, optimized not by a human expert but by a machine.

That’s a statement worth pausing on. When abliteration becomes automated, the asymmetry between model creators who spend months on safety alignment and the community that strips it in 45 minutes becomes a structural feature of the open-source AI ecosystem.

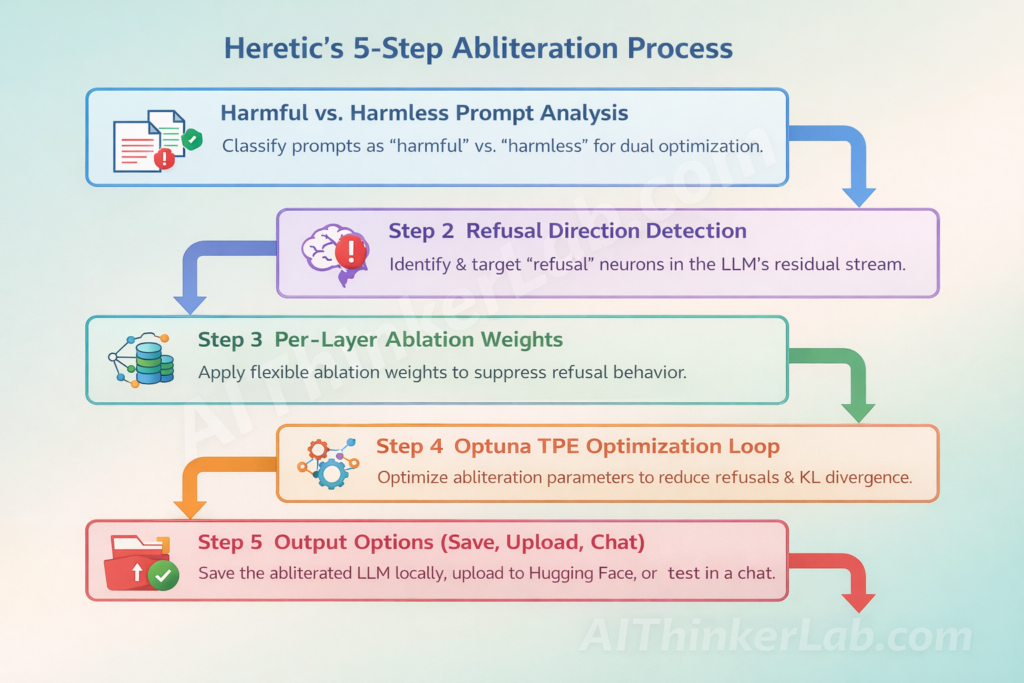

How Heretic AI Works — Step-by-Step Technical Breakdown

So how does it actually work under the hood?

Step 1 — Harmful vs. Harmless Prompt Analysis

Heretic co-minimizes two objectives: the number of refusals on “harmful” prompts and the KL divergence from the original model on “harmless” prompts.

This dual-objective framing is Heretic’s core insight. Previous abliteration implementations optimized for one thing — kill refusals. Heretic optimizes for two things simultaneously: kill refusals and don’t break everything else.
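With two objectives, “better” means better on one without being worse on the other. The sketch below applies that dominance test to the four rows of the Gemma-3-12B-IT table; the function names are illustrative and not taken from Heretic’s code.

```python
# (refusals out of 100, KL divergence) copied from the benchmark table.
candidates = {
    "google/gemma-3-12b-it (original)": (97, 0.0),
    "mlabonne/…-abliterated-v2": (3, 1.04),
    "huihui-ai/…-abliterated": (3, 0.45),
    "p-e-w/gemma-3-12b-it-heretic": (3, 0.16),
}


def dominates(a, b):
    """True if a is no worse than b on both objectives and not identical."""
    return all(x <= y for x, y in zip(a, b)) and a != b


def pareto_front(points):
    """Names of candidates that no other candidate dominates."""
    return [
        name
        for name, score in points.items()
        if not any(dominates(other, score) for other in points.values())
    ]


print(pareto_front(candidates))
# ['google/gemma-3-12b-it (original)', 'p-e-w/gemma-3-12b-it-heretic']
```

The original model stays on the front because nothing beats a KL divergence of zero. Among the abliterated variants, only the Heretic result survives, since it ties on refusals and wins on KL.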

Step 2 — Refusal Direction Detection in the Residual Stream

Heretic stands on a line of interpretability work that treats refusal as a relatively low dimensional feature in the residual stream. If you can find that feature, the argument goes, you can remove it, and refusals collapse with less collateral damage than many people expect.
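The idea can be sketched with the difference-of-means approach: average the activations for harmful and harmless prompts, take the difference as the refusal direction, and project it out. The three-dimensional vectors below are invented toy data; real residual-stream activations have thousands of dimensions and come from a model’s hidden states.

```python
import math


def mean(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def normalize(vector):
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def refusal_direction(harmful, harmless):
    """Unit vector pointing from the harmless mean to the harmful mean."""
    bad, good = mean(harmful), mean(harmless)
    return normalize([b - g for b, g in zip(bad, good)])


def ablate(vector, direction):
    """Remove the component of vector that lies along a unit direction."""
    dot = sum(a * b for a, b in zip(vector, direction))
    return [a - dot * d for a, d in zip(vector, direction)]


# Toy activations (assumed values, for illustration only).
harmful = [[2.0, 1.0, 0.0], [2.2, 0.8, 0.1]]
harmless = [[0.1, 1.0, 0.0], [-0.1, 0.9, 0.1]]

r = refusal_direction(harmful, harmless)
cleaned = ablate(harmful[0], r)
leftover = sum(a * b for a, b in zip(cleaned, r))
print(abs(leftover) < 1e-9)  # True: nothing remains along the direction
```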

Step 3 — Heretic’s Key Technical Innovations

Three specific innovations separate Heretic from earlier abliteration scripts:

1. Flexible per-layer ablation weights. Instead of a constant weight across all layers, Heretic applies a parametrized kernel: a curve described by max_weight, max_weight_position, min_weight, and min_weight_distance. This means the optimizer can decide to ablate middle layers heavily and leave early/late layers nearly untouched — which often reflects where refusal actually lives in a given model.

2. Float-valued direction index. The refusal direction index is a float rather than an integer. For non-integral values, the two nearest refusal direction vectors are linearly interpolated. This unlocks a vast space of additional directions beyond the ones identified by the difference-of-means computation, and often enables the optimization process to find a better direction than that belonging to any individual layer.

3. Separate attention/MLP parameters. Ablation parameters are chosen separately for each component. MLP interventions tend to be more damaging to the model than attention interventions, so using different ablation weights can squeeze out some extra performance.
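The per-layer kernel from the first item can be pictured with a short sketch. The four parameter names come from the description above, but the shape used here (a linear falloff from the peak, and no ablation beyond `min_weight_distance`) is an assumption made for illustration, not a confirmed reading of Heretic’s source.

```python
def layer_weight(layer, max_weight, max_weight_position,
                 min_weight, min_weight_distance):
    """Ablation weight for one layer under an assumed linear kernel.

    The weight peaks at max_weight_position, falls linearly to min_weight
    at min_weight_distance layers away, and is zero beyond that distance.
    min_weight_distance must be greater than zero.
    """
    distance = abs(layer - max_weight_position)
    if distance > min_weight_distance:
        return 0.0
    fraction = distance / min_weight_distance
    return max_weight + fraction * (min_weight - max_weight)


# Hypothetical settings for a 12-layer model.
weights = [layer_weight(i, 1.0, 6.0, 0.2, 4.0) for i in range(12)]
print([round(w, 2) for w in weights])
# [0.0, 0.0, 0.2, 0.4, 0.6, 0.8, 1.0, 0.8, 0.6, 0.4, 0.2, 0.0]
```

With these made-up values the middle layers carry most of the ablation and the outermost layers are left alone, which matches the behavior the first item describes.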

Step 4 — Optuna TPE Optimization Loop

Heretic frames decensoring as a multi-objective optimization problem. The tool searches for “abliteration parameters” that reduce refusals while keeping the modified model close to the original model in terms of KL divergence on a set of “harmless” prompts. Lower KL divergence is treated as less drift, which matters because aggressive interventions can degrade reasoning, formatting, or instruction following.
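The overall shape of such a loop can be sketched without any dependencies. Two loud caveats: this uses plain random sampling as a stand-in for Optuna’s TPE sampler, and `toy_evaluate` is an invented function that replaces the expensive step of actually ablating a model and measuring it.

```python
import random


def toy_evaluate(weight):
    """Invented stand-in for a real run: returns (refusals, kl)."""
    refusals = round(97 * max(0.0, 1.0 - weight) ** 2)
    kl = 0.5 * weight ** 2
    return refusals, kl


def dominates(a, b):
    """True if a is no worse than b on both objectives and not identical."""
    return all(x <= y for x, y in zip(a, b)) and a != b


def search(n_trials=50, seed=0):
    """Sample parameters, score each trial, keep the non-dominated ones."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        weight = rng.uniform(0.0, 1.5)  # one hypothetical parameter
        trials.append((weight, toy_evaluate(weight)))
    return [t for t in trials
            if not any(dominates(other[1], t[1]) for other in trials)]


front = search()
for weight, (refusals, kl) in sorted(front):
    print(f"weight={weight:.2f} refusals={refusals} kl={kl:.3f}")
```

A TPE sampler improves on this by using earlier trials to decide where to sample next, so fewer evaluations are wasted on unpromising regions.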

Step 5 — Output Options (Save, Upload, Chat)

Heretic will download the model, benchmark your hardware to pick an optimal batch size, then run the optimization loop. At the end, it offers to save the model locally, push it to Hugging Face, or drop into an interactive chat session.

Hardware Requirements & Processing Time

With Python 3.10+ and PyTorch 2.2+ installed, install Heretic with `pip install heretic-llm`, then run `heretic Qwen/Qwen3-4B`. That run takes about 45 minutes on an RTX 3090.

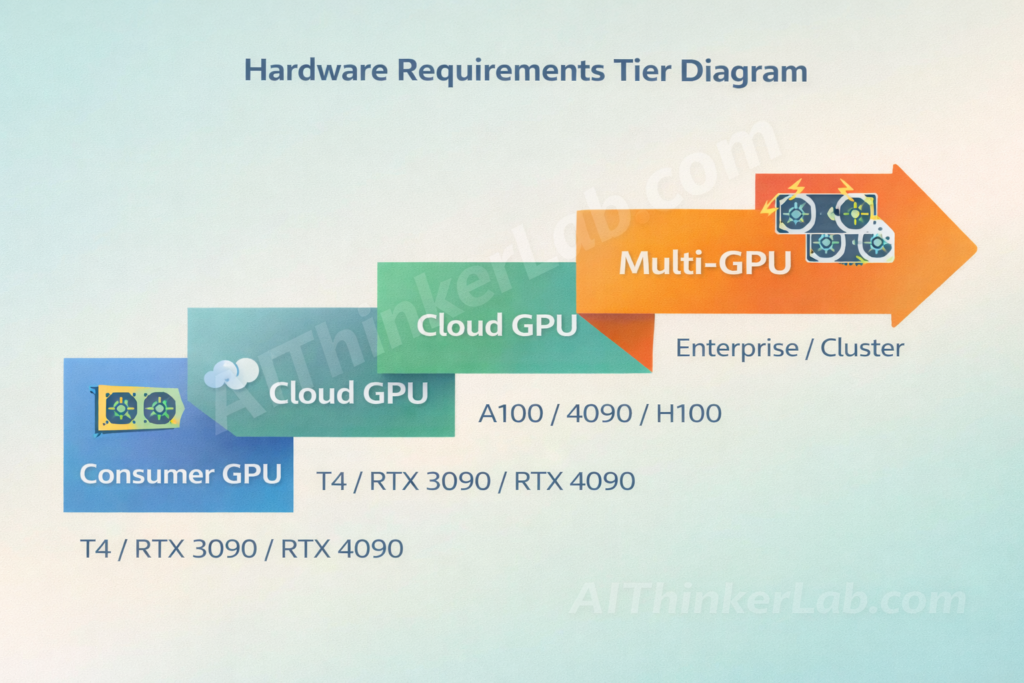

For larger models, the requirements scale:

| Model Size | GPU Needed | Approx. Time | VRAM |
|---|---|---|---|
| 4B–9B | T4 / RTX 3090 | 20–90 min | 16–24 GB |
| 12B–27B | RTX 4090/5090 / A100 | 1–3 hours | 24–48 GB |
| 70B+ | Multi-GPU / Cloud | Overnight | 80+ GB |

Heretic AI vs GPT-4 Safety: What’s Actually Being Compared?

There’s a misconception that needs clearing up — and it’s baked right into how people search for this topic.

Why Heretic Is a Tool, Not a Model (Critical Distinction)

Heretic doesn’t “beat” GPT-4. It can’t. They’re not the same category of thing. Abliteration itself is not new. What Heretic productizes is automation and repeatability. Earlier approaches often required manual experimentation: selecting layers, choosing projection strengths and validating results with ad hoc tests.

What Heretic challenges isn’t GPT-4’s intelligence — it challenges the durability of safety alignment as an approach.

What Heretic Actually “Defeats” — Manual Human Experts

Heretic has turned abliteration into “fully automatic, one-command uncensoring that often outperforms hand-tuned efforts.” By treating censorship removal as a mathematical optimization problem, it allows users to decensor models with a single command, potentially rivaling the quality of human experts without the manual labor.

That’s the real benchmark story. Not Heretic vs. GPT-4. Heretic vs. the humans who used to do this work by hand.

OpenAI’s Actual Frontier — GPT-5.4 (March 5, 2026)

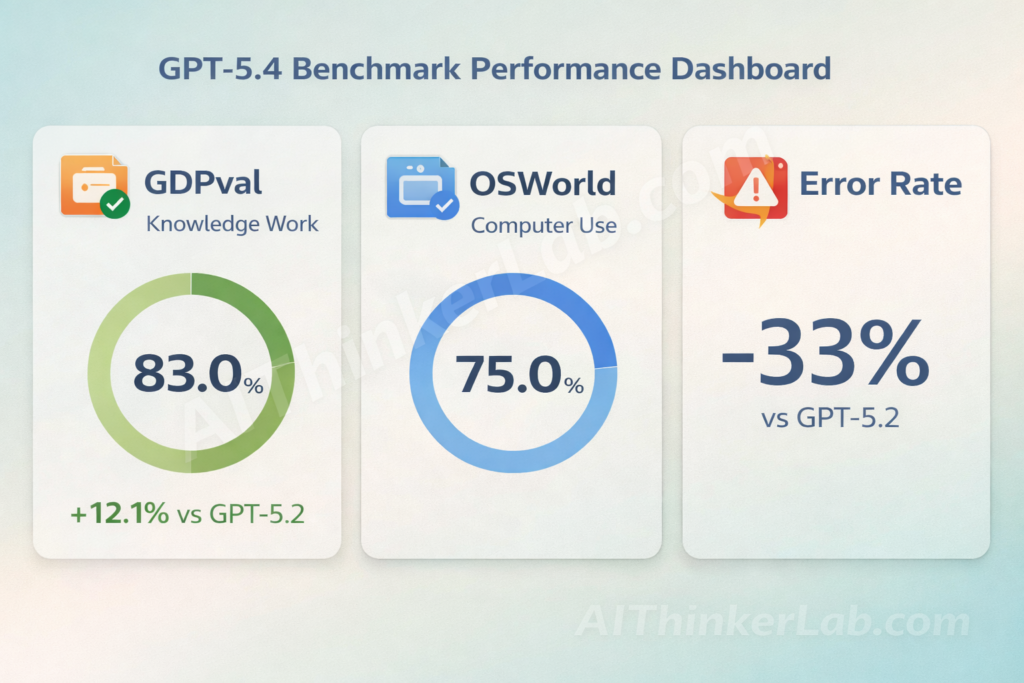

For context on where OpenAI actually stands in March 2026: GPT-4 is three generations behind the frontier. On Thursday, March 5, 2026, OpenAI released GPT-5.4, a new foundation model billed as “our most capable and efficient frontier model for professional work.” In a test of its ability to produce knowledge work across 44 occupations, GPT-5.4 matches or exceeds industry professionals in 83% of comparisons. OpenAI also evaluated the model using a popular computer use benchmark called OSWorld-Verified. GPT-5.4 set an industry record with a score of 75%, which is higher than both GPT-5.2’s result and the 72.4% typically achieved by human testers.

On safety specifically: OpenAI reported it strengthened safeguards while preparing GPT-5.4 for release, keeping the same high cyber-risk classification used for GPT-5.3-Codex and deploying additional protections including expanded cyber safety systems, monitoring tools, trusted access controls, and request blocking.

| Benchmark | GPT-5.4 | GPT-5.2 | Human Baseline |
|---|---|---|---|
| GDPval (Knowledge Work) | 83.0% | 70.9% | ~80% |
| OSWorld-Verified (Computer Use) | 75.0% | 47.3% | 72.4% |
| Error Rate (vs GPT-5.2) | -33% per claim | Baseline | n/a |
| Token Efficiency | Significantly fewer | Baseline | n/a |

The Heretic AI abliteration benchmarks don’t compete with GPT-5.4 on intelligence — they expose how safety alignment can be surgically removed from open-weight models, including OpenAI’s own GPT-OSS series.

Can Heretic Be Applied to GPT-OSS (OpenAI’s Open-Source Model)?

Yes — and it already has been. The gpt-oss series are OpenAI’s open-weight models designed for powerful reasoning, agentic tasks, and versatile developer use cases. GPT-OSS-20B is for lower latency, and local or specialized use cases (21B parameters with 3.6B active parameters).

The p-e-w/gpt-oss-20b-heretic variant is available on HuggingFace right now, under Apache 2.0 license (from the base model). Community quantizers like DavidAU have already built specialized GGUF variants optimized for different hardware configurations.

Heretic’s Technical Innovations — What Makes It Better Than Manual Abliteration

Innovation #1 — Flexible Per-Layer Ablation Weight Kernel

Rather than applying uniform ablation across all layers (as simpler implementations do), Heretic optimizes a flexible weight curve that applies different strengths at different layers.

This matters because refusal doesn’t live uniformly across a transformer. Middle layers tend to encode it more strongly. Heretic’s kernel lets the optimizer discover this automatically for each model.

Innovation #2 — Float-Valued Direction Index with Interpolation

Rather than picking an integer-indexed refusal direction, Heretic treats the index as a continuous float. Fractional values linearly interpolate between the two nearest directions.

This is clever. Standard abliteration picks one of N discrete refusal directions (one per layer). Heretic interpolates between them, creating a continuous search space that often produces a direction better than any individual layer’s direction.
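Here is a minimal sketch of that interpolation, using made-up two-dimensional directions for three layers. Whether the interpolated vector is re-normalized afterwards is left open here; treat the helper name and the data as assumptions.

```python
import math


def interpolate_direction(directions, index):
    """Linearly blend the two per-layer directions nearest a float index.

    index must lie between 0 and len(directions) - 1 inclusive.
    """
    lower = math.floor(index)
    upper = min(lower + 1, len(directions) - 1)
    t = index - lower
    return [(1 - t) * a + t * b
            for a, b in zip(directions[lower], directions[upper])]


# Hypothetical refusal directions for layers 0, 1, and 2.
directions = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]

print(interpolate_direction(directions, 1.0))   # [0.0, 1.0]
print(interpolate_direction(directions, 0.25))  # [0.75, 0.25]
```

An integer index reproduces a single layer’s direction exactly, so the discrete choices remain available as special cases of the continuous search.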

Innovation #3 — Component-Specific Ablation (Attention vs. MLP)

Ablation weights are non-constant across layers and optimized per run, with different strengths for attention and MLP components.

This separating of attention and MLP interventions reflects an empirical finding: MLP modifications tend to cause more collateral damage. By treating them independently, Heretic can be aggressive with attention ablation while going gentler on MLPs.
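As a rough illustration, the sketch below applies the same refusal direction to two output matrices with different strengths. The component names, the matrices, and the weights are all invented for this example; it assumes a unit-length direction and matrices whose rows index the residual-stream dimension.

```python
def ablate_matrix(W, r, weight):
    """Return W - weight * r (r^T W) for a unit-length direction r."""
    rows, cols = len(W), len(W[0])
    projection = [sum(r[i] * W[i][j] for i in range(rows))
                  for j in range(cols)]
    return [[W[i][j] - weight * r[i] * projection[j] for j in range(cols)]
            for i in range(rows)]


r = [1.0, 0.0]  # toy refusal direction

# Hypothetical output matrices for one layer.
layer = {
    "attention_out": [[2.0, 4.0], [1.0, 3.0]],
    "mlp_out": [[2.0, 4.0], [1.0, 3.0]],
}

# Full strength on attention, half strength on the MLP (assumed values).
component_weight = {"attention_out": 1.0, "mlp_out": 0.5}

ablated = {name: ablate_matrix(W, r, component_weight[name])
           for name, W in layer.items()}

print(ablated["attention_out"])  # [[0.0, 0.0], [1.0, 3.0]]
print(ablated["mlp_out"])        # [[1.0, 2.0], [1.0, 3.0]]
```

At weight 1.0 the matrix can no longer write anything along the direction; at 0.5 it writes half as much, which is the gentler treatment described for MLPs.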

v1.2.0 — LoRA-Based Abliteration Engine (February 2026)

The v1.2.0 release notes are unusually concrete. Highlights include a new LoRA-based abliteration engine and support for 4-bit quantization; saving and resuming optimization progress, which matters when runs are long or crash-prone; controls for memory usage; and mechanisms to avoid wasting iterations in low-divergence regions.

The LoRA engine is particularly significant. Instead of modifying weights directly, it produces a LoRA adapter — which is smaller, more portable, and can be toggled on and off. This makes Heretic’s output easier to distribute and experiment with.
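One way to see why an adapter can carry the same change: ablating a single direction is a rank-1 update, so it factors into two thin matrices in the usual LoRA shape. The sketch below demonstrates that identity on toy numbers. It is not a description of how Heretic’s engine is implemented, and the names `lora_A` and `lora_B` simply follow common LoRA convention.

```python
def matmul(A, B):
    """Plain matrix product for lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]


def add(A, B):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]


W = [[2.0, 4.0], [1.0, 3.0]]  # toy weight matrix
r = [1.0, 0.0]                # toy unit refusal direction
weight = 1.0                  # ablation strength

lora_B = [[-weight * ri] for ri in r]  # shape (d_model, 1)
lora_A = matmul([r], W)                # shape (1, d_in), equals r^T W

# Merging the adapter reproduces the directly ablated matrix.
merged = add(W, matmul(lora_B, lora_A))
print(merged)  # [[0.0, 0.0], [1.0, 3.0]]
```

Because the base matrix `W` is untouched until the merge, leaving the adapter off recovers the original behavior.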

AI Safety Implications — What Heretic AI Abliteration Benchmarks Mean for the Industry

The Democratization of Uncensoring

Heretic democratizes uncensoring to an extreme degree. Anyone who can run pip can now produce near-expert uncensored variants of cutting-edge open models.

That sentence should land differently depending on who you are. If you’re an AI safety researcher, it’s alarming. If you’re a privacy-focused user who runs models locally for legitimate reasons, it’s empowering. If you’re a policy maker, it’s a regulatory challenge that existing frameworks don’t address.

The Safety Alignment Arms Race

A consistent theme across reports is that structured reasoning tasks are among the most sensitive to abliteration. Corporate countermeasures are already in development: papers exploring hardening against directional ablation have appeared on arXiv. But as Arditi et al.’s original work demonstrated, the linear representation of refusal is a structural property of how current RLHF and DPO alignment works. Patching it may require fundamentally different alignment approaches.

AGPL v3.0 — The Licensing Reality Most People Miss

This bears repeating because almost nobody talks about it. The AGPL v3.0 license means that if you modify Heretic and deploy it behind a network service, you must make your modified source code available. For companies evaluating Heretic for red-teaming pipelines — which is a legitimate use case — the licensing creates real constraints.

Model Compatibility & Current Limitations

Heretic supports most dense models, including many multimodal models, and several different MoE architectures. It does not yet support SSMs/hybrid models, models with inhomogeneous layers, and certain novel attention systems.

If your target model is a standard decoder-only transformer from HuggingFace — Llama, Qwen, Gemma, Mistral — you’re almost certainly covered. Mamba, Jamba, and other state-space architectures remain out of scope for now.

What the Heretic AI Abliteration Benchmarks Reveal About AI Safety in 2026

The real question isn’t whether automated abliteration works. The Heretic AI abliteration benchmarks have settled that: it does, and on Gemma-3-12B-IT it does so with 2.8× to 6.5× lower KL divergence than the manual abliterations it was compared against.

The real question is what happens next. OpenAI is strengthening safeguards in GPT-5.4 while simultaneously releasing open-weight GPT-OSS models that the community abliterates within hours. That tension — open models with removable safety — is now a permanent feature of the AI landscape.

Over 1,000 community models, a tool anyone can install with pip, benchmark data that holds up to scrutiny — this isn’t a proof of concept anymore. It’s production-scale. And the speed gap between a model’s release and its uncensored twin appearing on HuggingFace is shrinking toward zero.

The Heretic AI abliteration benchmarks prove that current safety alignment can be removed. The industry’s next move will determine whether that’s a feature or a catastrophe.


Sources & References

- heretic-llm on PyPI — pypi.org/project/heretic-llm/

- Heretic GitHub Repository — github.com/p-e-w/heretic — Official tool documentation, benchmarks, and source code

- Arditi et al. (2024) — “Refusal in Language Models Is Mediated by a Single Direction” — arxiv.org/abs/2406.11717 — NeurIPS 2024

- OpenAI (March 5, 2026) — “Introducing GPT-5.4” — openai.com/index/introducing-gpt-5-4/

- Edward Kiledjian (March 2026) — “Heretic and the new reality of modifiable AI safety” — kiledjian.com

- Popular AI Substack (Feb 2026) — “Heretic: the one-size-fits-all fix for the ‘AI says no’ problem” — popularai.substack.com

- HuggingFace: p-e-w/gpt-oss-20b-heretic — huggingface.co/p-e-w/gpt-oss-20b-heretic