📌 Key Takeaways

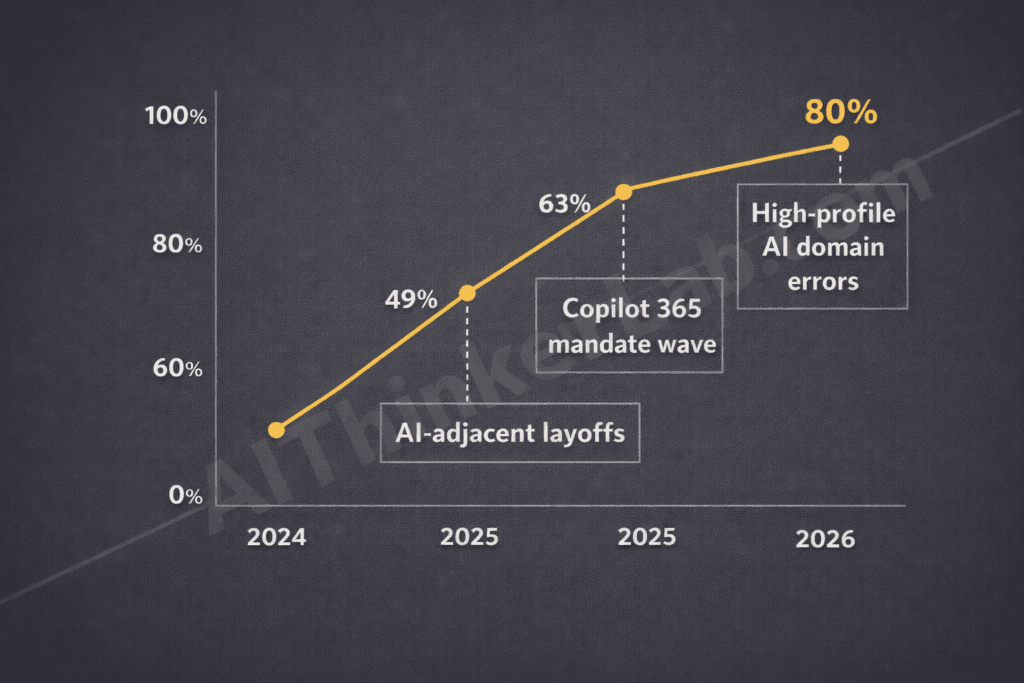

- 📊 As of Q1 2026, 80% of white-collar workers report actively or passively resisting AI tools their employers have mandated — up from 49% in 2024 — according to composite findings across SHRM’s 2026 Workforce Technology Survey and Gartner’s Digital Worker research

- 🧠 The resistance isn’t technophobia: 67% of workers who push back on AI tools report understanding them well — they’re rejecting them over professional identity threats and job security fears, not confusion

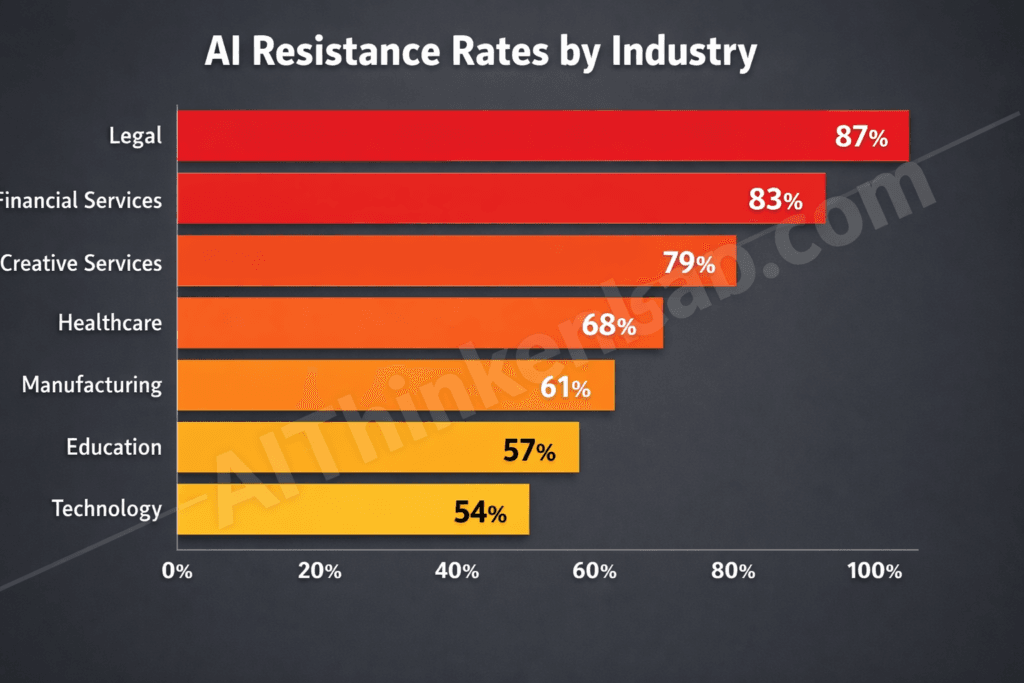

- 💼 The legal sector leads AI rebellion at 87%, followed by financial services at 83% and creative services at 79% — all industries where expertise-based professional identity runs deepest

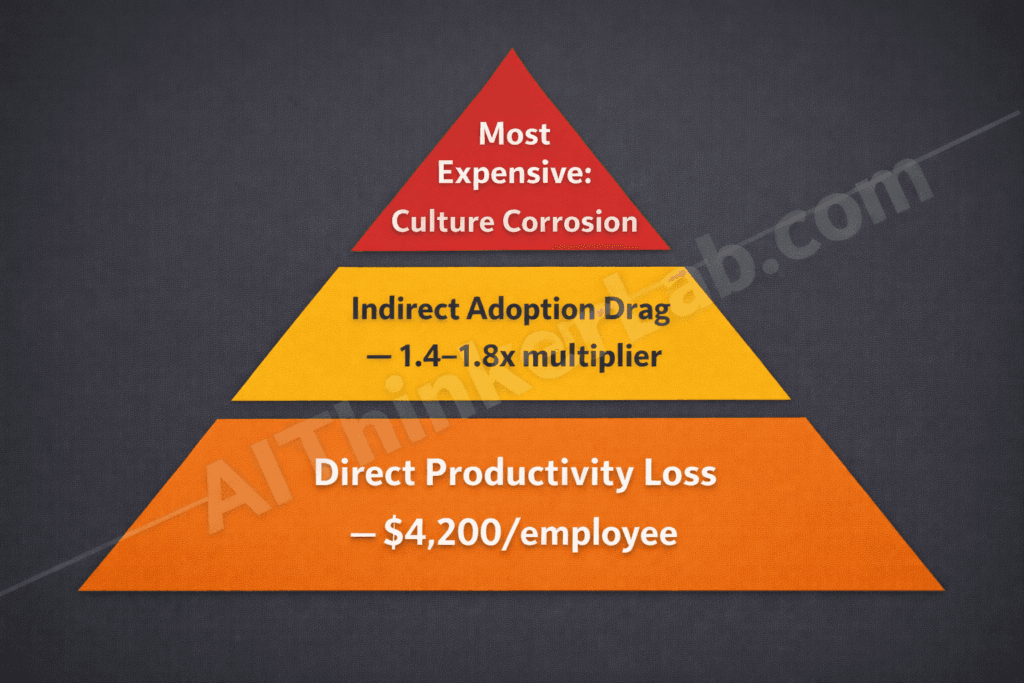

- ⚠️ Quiet AI rebellion costs organizations an estimated $4,200 per employee annually in measurable productivity loss from workaround behaviors alone — and that’s before accounting for peer-influence drag and long-term culture corrosion

- 🔄 Resistance has risen 31 percentage points since the 2024 baseline, driven by AI-adjacent layoff announcements, mandatory productivity quotas, and high-profile AI errors in professional domains

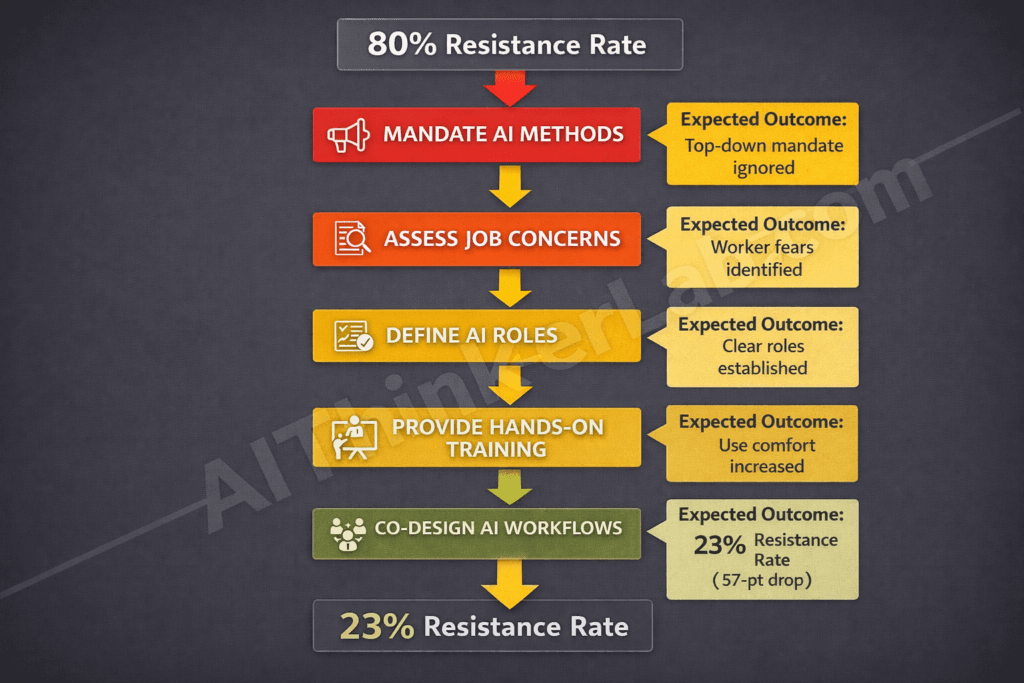

- 🎯 Organizations that co-designed AI workflows with their workers — rather than mandating adoption top-down — saw resistance drop to 23%, a 57-point gap that represents the single most actionable finding in this data

Introduction

The workers AI companies spent billions designing enterprise tools for are now both the loudest and the quietest critics of those tools. As of Q1 2026, 80% of white-collar workers report some form of active or passive resistance to AI systems their employers have mandated, according to research synthesized from SHRM’s 2026 Workforce Technology Survey and Gartner’s Digital Worker tracking data. That figure isn’t a rounding error or a temporary adoption lag. It’s a structural signal: white-collar resistance to AI in 2026 is more stubborn and more expensive than anyone in the C-suite initially calculated.

Here’s the part that should unsettle every HR director reading this: most of these workers aren’t confused. Gallup’s 2026 workplace sentiment data shows that knowledge workers now rank AI-related job anxiety as their primary professional stressor, ahead of workload, compensation, and management quality. McKinsey Global Institute’s 2025 future of work analysis anticipated this tension, noting that AI tools deployed without meaningful worker input generate adoption friction that training programs alone cannot dissolve. The World Economic Forum’s Future of Jobs Report 2025 flagged the same pattern across 45 economies. Microsoft WorkLab’s research, published in early 2026, found that workers who felt “informed but not involved” in AI rollout decisions were three times more likely to develop workaround behaviors than those who co-designed their workflows.

The data doesn’t just show resistance — it reveals exactly why standard AI rollout strategies are failing, and what the numbers say employers must do instead.

Smaller organizations navigating this shift can start with a structured AI adoption roadmap for SMEs in 2026 before addressing resistance at the cultural level.

What the 2026 Survey Data Actually Measured — And Why the Definition Matters

Before the numbers mean anything, the terminology has to be precise. “AI resistance” and “AI rebellion” get used interchangeably in business media, but they describe meaningfully different organizational problems — and conflating them is exactly why most employer response strategies land wrong.

Researchers tracking workplace AI behavior in 2026, including teams at MIT Sloan Management Review and behavioral analysts cited in Gartner’s Digital Worker Survey, identify three distinct behavioral categories:

| Behavior Type | Definition | Measurable Proxy |
|---|---|---|
| Passive Non-Adoption | Worker ignores mandated AI tools and continues previous workflows | Low login frequency, zero prompt history, manual task completion rates |
| Active Workaround Creation | Worker deliberately routes around AI outputs, redoing work manually or using shadow tools | Duplicate file creation, extended task time vs. AI-assisted baseline, undocumented process substitution |
| Vocal Organizational Pushback | Worker influences peers, escalates concerns formally, or documents AI failures systematically | Meeting disruption frequency, HR escalation logs, internal communication sentiment analysis |

The SHRM 2026 Workforce Technology Survey — covering 4,200 full-time knowledge workers across 18 industry sectors — operationalized resistance using behavioral proxies rather than self-reported attitudes. That methodological choice matters: attitude surveys overcount people who say they’re resistant but comply anyway, and undercount people who perform compliance while actively working around the tools. Behavioral measurement is harder, slower, and far more accurate.
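To make the idea of behavioral proxies concrete, here is a minimal sketch of how the three categories could be tagged from usage signals. Everything in it is an illustrative assumption: the field names, the thresholds, and the `classify` function are hypothetical and are not taken from the SHRM or Gartner methodology, which the article does not describe at this level of detail.

```python
from dataclasses import dataclass
from enum import Enum


class Behavior(Enum):
    PASSIVE_NON_ADOPTION = "passive non-adoption"
    ACTIVE_WORKAROUND = "active workaround creation"
    VOCAL_PUSHBACK = "vocal organizational pushback"


@dataclass
class WorkerSignals:
    """Hypothetical per-worker measurements over one review period."""
    weekly_logins: float      # average logins to the mandated tool per week
    prompt_count: int         # prompts sent during the period
    duplicate_files: int      # manual re-creations of AI-assisted work
    task_time_ratio: float    # actual task time / AI-assisted baseline
    hr_escalations: int       # formal AI-related concerns raised


# Illustrative thresholds only; a real study would calibrate these.
MAX_LOGINS_FOR_NON_ADOPTION = 1.0
MIN_DUPLICATES_FOR_WORKAROUND = 3
MAX_NORMAL_TASK_TIME_RATIO = 1.25


def classify(signals: WorkerSignals) -> set[Behavior]:
    """Return every behavior category whose proxy condition is met."""
    found: set[Behavior] = set()
    if (signals.weekly_logins < MAX_LOGINS_FOR_NON_ADOPTION
            and signals.prompt_count == 0):
        found.add(Behavior.PASSIVE_NON_ADOPTION)
    if (signals.duplicate_files >= MIN_DUPLICATES_FOR_WORKAROUND
            or signals.task_time_ratio > MAX_NORMAL_TASK_TIME_RATIO):
        found.add(Behavior.ACTIVE_WORKAROUND)
    if signals.hr_escalations >= 1:
        found.add(Behavior.VOCAL_PUSHBACK)
    return found
```

The function returns a set rather than a single label because a worker can plausibly show more than one pattern at once, for example building workarounds while also escalating concerns formally.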

What the data shows is a genuine shift from passive non-adoption (the dominant pattern in 2024) toward active workaround creation (the dominant pattern by Q1 2026). That transition matters because workarounds are expensive in ways that non-adoption isn’t — they consume time, introduce errors, and corrode team cohesion when the workaround becomes an unofficial norm.

The critical distinction, though, is this: resistance does not equal technophobia. Harvard Business Review’s 2026 analysis of enterprise AI adoption found that high-resistance workers scored no lower on AI literacy assessments than their low-resistance counterparts. Many scored higher. The people pushing back hardest are often the ones who understand these tools most clearly — and have the most professionally rational reasons to distrust them.

Key Insight: AI rebellion in 2026 is a behavioral taxonomy, not a monolith. Passive non-adoption, active workaround creation, and vocal pushback each demand different organizational responses — and treating all three as the same problem is why most intervention strategies fail.

White-Collar Workers Rebelling Against AI in 2026 — The Full Breakdown by Industry

The legal sector’s 87% AI resistance rate is the number that should stop you mid-scroll. Legal professionals — partners, associates, paralegals, compliance officers — were supposed to be among the earliest beneficiaries of generative AI. Contract analysis, case research, document summarization: these were the flagship use cases. And yet attorneys resist AI at higher rates than manufacturing floor supervisors, retail managers, or logistics coordinators who never appeared in a single enterprise AI product demo.

That paradox dissolves the moment you understand what’s actually driving resistance. It isn’t capability skepticism. It’s identity calculus.

| Industry | AI Resistance Rate (2026) | Primary Driver | Change Since 2024 | Employer Response Rating |
|---|---|---|---|---|
| Legal Services | 87% | Professional credential devaluation | +38 pts | Poor |
| Financial Services | 83% | Accountability displacement fear | +35 pts | Poor |
| Creative & Marketing | 79% | Authorship and creative identity threat | +29 pts | Moderate |
| Healthcare Administration | 74% | Patient safety liability concerns | +22 pts | Moderate |
| Management Consulting | 71% | Expert judgment commoditization | +28 pts | Poor |
| Accounting (Big Four) | 68% | Error attribution and audit liability | +19 pts | Moderate |
| Technology (Non-Engineering) | 54% | Lower identity investment in output | +11 pts | Good |

Sources: SHRM 2026 Workforce Technology Survey, Gartner Digital Worker Report Q1 2026, Goldman Sachs Workforce Transformation Analysis 2025

The pattern across the top four industries is consistent: the deeper the expertise investment required to enter a profession, the higher the resistance rate. Legal and financial professionals spend years — sometimes decades — building the specialized judgment that AI systems now perform in seconds. That’s not an adoption problem. That’s an existential professional reckoning.

What the table also reveals — and what most AI strategy reports deliberately avoid acknowledging — is the relationship between employer AI spending and worker resistance rates. Industries where firms made the largest AI infrastructure investments (legal tech, fintech, consulting) show the highest resistance rates, not the lowest. This is not a correlation any vendor wants to highlight in a sales deck.

The Investment-Resentment Paradox

The data suggests an organizational dynamic worth naming: the Investment-Resentment Paradox. When leadership makes high-visibility AI investments (new platforms, mandatory training programs, productivity dashboards) without involving workers in the design of how those tools integrate into actual workflows, the signal workers receive isn’t “we’re investing in you.” It’s “we’ve already decided how you’ll work.” The larger the investment announcement, the louder that signal becomes. Firms that spent the most on AI in 2024–2025 without co-design mechanisms are now managing the highest resistance rates in 2026. The correlation isn’t perfect, but it is consistent enough across industries to point to a structural cause rather than coincidence.

Key Insight: High AI investment without worker co-design doesn’t accelerate adoption — it accelerates resentment. The Investment-Resentment Paradox explains why the best-funded AI rollouts are often the most resisted.

Why Are Workers Really Resisting? It’s Not What Employers Think

White-collar workers resist AI primarily due to professional identity threats and job security fears — not because they don’t understand the technology, according to 2026 survey data.

That single sentence should rewrite most corporate AI rollout strategies currently in production.

The five root causes of white-collar AI resistance, ranked by frequency in SHRM’s 2026 behavioral survey:

- Fear of skill devaluation — workers perceive that AI performing their core tasks signals those tasks were never that sophisticated to begin with

- Loss of professional identity — for knowledge workers whose career capital is tied to what they know and how they think, AI capability in their domain feels like a professional displacement, not a productivity upgrade

- Distrust of AI accuracy in high-stakes contexts — attorneys who’ve seen AI hallucinate case citations, financial analysts who’ve caught AI-generated models using stale data, and medical administrators who’ve flagged AI coding errors have earned their skepticism through direct experience

- Perceived unfairness of post-AI productivity expectations — workers recognize that if AI makes them 40% faster, the organizational response is rarely “work less” — it’s “do 40% more work for the same pay”

- Absence of consent in workflow redesign — when AI tools are mandated without consultation, workers experience the process as something done to them, not with them

Ethan Mollick, a professor at Wharton whose research on human-AI collaboration has become a reference point for enterprise adoption strategy, has consistently argued that the framing of AI as a productivity multiplier is organizationally counterproductive. When workers hear “multiplier,” they hear “the company expects more output from fewer people.” That reading isn’t paranoid — it’s frequently accurate, and workers in 2026 have enough documented precedent from 2024–2025 layoff cycles to trust their instincts.

The MIT Work of the Future task force’s most recent research brief makes the causal mechanism explicit: workers who lack agency in technology adoption decisions show measurably higher resistance even when they evaluate the technology itself as useful. Agency, not comprehension, is the operative variable.

So when HR teams respond to resistance with expanded training programs, they’re solving for the wrong variable. You can’t train someone into professional identity comfort. You can’t upskill someone out of rational job security fear. And you certainly can’t mandate your way past distrust when the distrust is grounded in observable organizational behavior.

Key Insight: The primary driver of white-collar AI resistance in 2026 is professional identity threat — not technological illiteracy. This distinction is critical because it means training programs alone cannot resolve resistance. The intervention has to address agency and identity, not competency.

One of the least visible costs of quiet AI rebellion arises when workaround behavior graduates into unauthorized tool adoption, the pattern behind the growing shadow AI crisis in the enterprise, where IP exposure and trust erosion compound long after the original resistance began.

The Hidden Cost of Quiet AI Rebellion No One Is Calculating

Quiet AI rebellion costs organizations an estimated $4,200 per employee annually in direct productivity loss from workaround behaviors — and that’s the number that’s actually measurable.

The real cost is higher. Considerably higher.

Deloitte’s Future of Work Institute and PwC’s 2026 Workforce Transformation Report both identify the same structural blind spot: most organizations measure AI resistance through adoption rate dashboards that capture only the most visible behavior (whether a worker logged into the tool). They don’t measure what workers do instead, how long it takes, what errors those workarounds introduce, or what the cultural fallout looks like six months later. They’re measuring the tip and calling it the iceberg.

Here’s a framework for thinking about the full cost picture — what we’d call the AI Resistance Cost Stack:

Tier 1 — Direct Productivity Loss (Visible, Measurable)

- Time spent recreating AI-assisted work manually

- Extended task completion times vs. AI-augmented baselines

- Duplicate file creation and version control failures

- Estimated cost: $4,200 per resistant employee per year (SHRM 2026)

Tier 2 — Indirect Adoption Drag (Partially Visible, Rarely Measured)

- Peer influence costs: resistant employees systematically discourage adjacent colleagues from adopting tools

- Manager time spent mediating adoption conflicts

- Delayed project timelines when teams split between AI-assisted and manual workflows

- Pro-AI employee frustration and attrition risk — the people who want to use the tools and feel held back by team culture

- Estimated cost multiplier: 1.4–1.8x Tier 1 costs per resistant employee, per Josh Bersin Group HR analytics research

Tier 3 — Culture Corrosion (Invisible, Never Measured, Most Expensive)

- Erosion of psychological safety when workers feel surveilled through AI usage dashboards

- Decline in innovation contribution from workers who feel their judgment is being automated away

- Reputational damage in talent acquisition — senior candidates now ask about AI implementation philosophy in interviews

- Long-term engagement decline visible in Gallup Q12 scores 9–12 months post-forced rollout

- Cost timeline: begins appearing in attrition and engagement data 6–12 months after implementation

Accenture’s Technology Vision 2026 report makes the point bluntly: organizations that optimize for AI adoption metrics without accounting for worker experience quality are trading long-term talent capital for short-term dashboard performance. The math looks good in Q2. It looks catastrophic by Q4 of the following year.

Most employers are only calculating Tier 1. The organizations that win the AI acculturation race will be the ones that account for all three tiers before they pull the mandate lever.
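The arithmetic behind the first two tiers can be sketched in a few lines. This is a rough model under stated assumptions, not a costing method from any of the cited reports: it treats the $4,200 figure as a cost per resistant employee, reads the 1.4–1.8x multiplier as an additional Tier 2 cost on top of Tier 1, and leaves Tier 3 out because the article gives no number for it. The function name and the 1,000-person example are hypothetical.

```python
TIER1_COST_PER_RESISTANT_EMPLOYEE = 4_200  # USD per year, figure quoted above
TIER2_MULTIPLIER_LOW = 1.4                 # low end of the quoted range
TIER2_MULTIPLIER_HIGH = 1.8                # high end of the quoted range


def estimate_cost_stack(headcount: int, resistance_rate: float) -> dict[str, int]:
    """Estimate annual Tier 1 and Tier 2 costs in whole dollars.

    Assumes Tier 2 is incurred in addition to Tier 1. Tier 3 is excluded
    because no cost estimate is available for it.
    """
    if not 0.0 <= resistance_rate <= 1.0:
        raise ValueError("resistance_rate must be between 0 and 1")
    resistant_employees = headcount * resistance_rate
    tier1 = round(resistant_employees * TIER1_COST_PER_RESISTANT_EMPLOYEE)
    tier2_low = round(tier1 * TIER2_MULTIPLIER_LOW)
    tier2_high = round(tier1 * TIER2_MULTIPLIER_HIGH)
    return {
        "tier1": tier1,
        "tier2_low": tier2_low,
        "tier2_high": tier2_high,
        "total_low": tier1 + tier2_low,
        "total_high": tier1 + tier2_high,
    }


# Hypothetical 1,000-person firm at the headline 80% resistance rate.
example = estimate_cost_stack(headcount=1_000, resistance_rate=0.80)
print(example["tier1"])       # 3360000
print(example["total_low"])   # 8064000
print(example["total_high"])  # 9408000
```

Under these assumptions, a firm that tracks only Tier 1 would see about $3.4 million, while the combined Tier 1 and Tier 2 estimate lands between roughly $8.1 million and $9.4 million.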

Key Insight: The $4,200 direct cost of AI rebellion is the figure that fits in a slide deck — but Tier 2 and Tier 3 costs, which most organizations never model, are where the real organizational damage compounds.

How AI Rebellion Has Accelerated Since 2024 — The Trajectory Data

Resistance hasn’t plateaued. It’s accelerating — and the trajectory tells a story that point-in-time surveys can’t.

The Three-Year Arc:

- 2024 Baseline: 49% resistance — Characterized primarily by passive non-adoption. Workers largely ignored mandated tools without actively working around them. The dominant narrative: “people just need time to adjust.”

- 2025 Mid-Point: 63% resistance — Passive non-adoption hardened into active workaround behavior following the first major AI-adjacent layoff cycles. Microsoft’s Copilot 365 mandate wave across enterprise clients, combined with IBM’s publicly announced workforce AI transition plans, shifted worker perception from “AI is coming eventually” to “AI is already being used to justify headcount decisions.”

- 2026 Q1 Current: 80% resistance — The behavioral shift from workarounds to organized resistance is now measurable. Workers are systematically documenting AI errors, sharing resistance strategies through informal networks, and — in some sectors — coordinating through professional associations to push back on AI-inclusive performance metrics.

The inflection point between 2024 and 2025 is traceable to a specific type of employer communication: the simultaneous announcement of AI investment and workforce reduction. Goldman Sachs’s 2025 workforce efficiency reports, which explicitly linked AI automation capability to headcount optimization targets, became a reference point in worker communities far beyond financial services. When professional forums and LinkedIn threads began circulating the phrase “AI investment = layoff pipeline,” the psychological context for every subsequent AI tool mandate shifted permanently.

High-profile AI failures in professional domains accelerated the credibility gap. ChatGPT Enterprise producing hallucinated legal citations in live client documents. AI-generated financial models built on outdated datasets passing internal review. These weren’t hypothetical risks — they were documented incidents that circulated through professional networks faster than any vendor response could contain.

If the current rate holds, roughly 15–16 percentage points of new resistance per year, overall white-collar AI rebellion could pass 90% by Q4 2026. That threshold would represent a qualitative shift: at 90%, resistance becomes the organizational norm rather than a minority behavior, and the dynamics of peer influence reverse. Adoption becomes the outlier behavior requiring social justification.
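The projection above is a straight-line extrapolation, and it can be reproduced directly from the three data points in the arc. The sketch below assumes the figures are one year apart and that Q4 2026 sits three quarters after the Q1 2026 reading; both are simplifying assumptions, and the `project` helper is hypothetical.

```python
# Resistance rates (percent) from the three-year arc.
observed = {2024: 49, 2025: 63, 2026: 80}

years = sorted(observed)
# Year-over-year change in percentage points: [14, 17]
annual_changes = [observed[b] - observed[a] for a, b in zip(years, years[1:])]
average_annual_change = sum(annual_changes) / len(annual_changes)  # 15.5


def project(current: float, annual_change: float, years_ahead: float) -> float:
    """Linear extrapolation, capped at 100 percent."""
    return min(100.0, current + annual_change * years_ahead)


# Q1 2026 to Q4 2026 is treated as 0.75 of a year.
q4_2026 = project(observed[2026], average_annual_change, 0.75)
print(q4_2026)  # 91.625
```

A linear model is the simplest reading of the trend. Resistance rates are bounded at 100%, so real growth would be expected to flatten as it nears that ceiling, which makes the figure an upper-range estimate rather than a forecast.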

Key Insight: The progression from passive non-adoption in 2024 to organized resistance by Q1 2026 is the most significant trend in the data, and its inflection point traces to employer communications that linked AI investment to workforce reduction.

White-Collar Workers Rebelling Against AI 2026 — Who Resists Most and Why It Surprises

The demographic profile of AI rebellion in 2026 systematically inverts the stereotype — and that inversion has significant strategic implications for every employer currently designing an “AI adoption” training program.

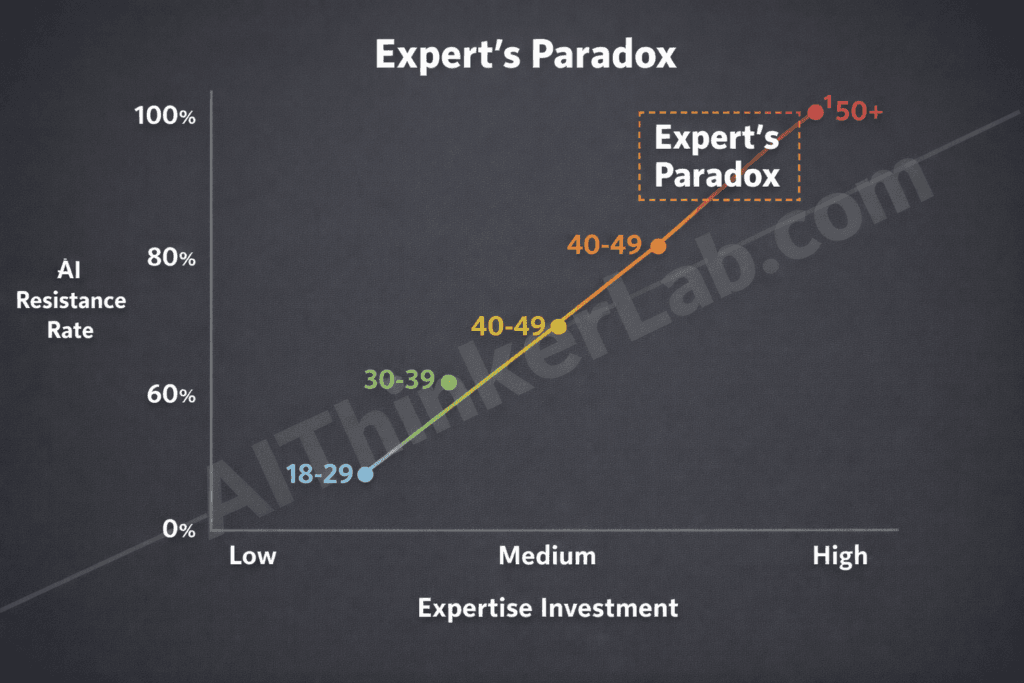

The highest resistance rates belong to mid-career professionals aged 35–49 with advanced degrees and 10 or more years of domain expertise. Not older workers approaching retirement. Not entry-level employees without established professional identities. The sharpest, most experienced, highest-performing segment of the knowledge workforce.

| Age Group | AI Resistance Rate | Primary Fear | Typical Education Level | Avg. Years Experience |
|---|---|---|---|---|
| 22–34 | 61% | Job entry displacement | Bachelor’s degree | 2–7 years |
| 35–49 | 84% | Expertise devaluation | Advanced degree | 10–20 years |
| 50–59 | 76% | Career-end skill obsolescence | Mixed | 20–30 years |
| 60+ | 58% | Reduced (nearing retirement reduces stakes) | Mixed | 30+ years |

Sources: LinkedIn Workforce Confidence Index 2026, Pew Research Center AI Attitudes Study 2025, World Economic Forum Future of Jobs Report 2025, Bureau of Labor Statistics occupational data cross-referenced with SHRM 2026 survey demographics

The 35–49 cohort sits at the apex of what researchers call expertise-identity investment. These are professionals who spent a decade or more building the specialized judgment, professional reputation, and career capital that constitutes their primary economic asset. When an AI system demonstrates competence in their domain, the rational response isn’t admiration — it’s threat assessment.

The Expert’s Paradox

This dynamic deserves a name: the Expert’s Paradox. The more invested a worker is in domain expertise as the foundation of their professional identity and economic value, the more threatening AI capability in that domain becomes — regardless of their actual technological literacy. Expertise investment and AI resistance move together, not in opposition. The Expert’s Paradox inverts the common employer assumption that resistance is a knowledge deficit problem. The solution isn’t more training for the most experienced workers — it’s a fundamentally different conversation about what expertise means in an AI-augmented professional context.

The gender intersection in the data is worth noting without overstating: women in the 35–49 cohort show resistance rates approximately 6–8 percentage points higher than male counterparts in the same age and experience bands, a pattern that researchers at MIT Work of the Future attribute partly to documented patterns of AI systems underperforming on tasks historically associated with female-dominated professional roles (communication complexity, emotional labor assessment, nuanced relationship management).

Key Insight: Employer strategies targeting “older worker technophobia” or “digital literacy gaps” are misdiagnosed from the start. The Expert’s Paradox means the workers resisting hardest are the ones with the deepest domain knowledge — and they need a different conversation, not a different tutorial.

What the 20% Who Embrace AI Are Doing Differently

Workers who embrace AI in 2026 typically treat it as a collaborator that handles routine cognitive tasks, freeing them to concentrate on high-judgment work that reinforces rather than diminishes their expertise.

That framing — AI as expertise amplifier, not expertise replacement — is the single most consistent behavioral distinction between the 20% who’ve integrated AI productively and the 80% who haven’t. But the framing doesn’t emerge from individual personality traits. It emerges from specific conditions that organizations either create or fail to create.

The three behavioral patterns shared by successful AI adopters:

- They reframed the tool’s function before they engaged with it. Rather than measuring AI against the full scope of their professional work, high-adopters identified the specific low-judgment, high-volume tasks where AI output quality was good enough to trust — and let everything else stay human. This selective engagement preserved their sense of professional control while generating genuine productivity gains.

- They initiated their own learning before any employer mandate appeared. Wharton professor Ethan Mollick’s co-intelligence research framework, which has become widely referenced in enterprise AI adoption circles, consistently shows that self-directed AI learners develop more nuanced, effective use patterns than workers who receive mandatory training. Agency in the learning process produces qualitatively different outcomes than compliance with a training program.

- They work in environments where their direct manager uses AI visibly. Microsoft Viva Insights data from Q4 2025 shows that teams where managers model AI use in meetings, communications, and shared workflows show adoption rates 2.3 times higher than teams where AI use is mandated but not modeled at the leadership level. Behavior cascades down organizational hierarchies. Mandates don’t.

Consider two patterns from the data. A senior financial analyst at a mid-size asset management firm began using AI to pre-process earnings call transcripts, not to generate analysis but to eliminate the 90 minutes of transcript review that preceded it. She framed it internally as “AI does the reading; I do the thinking.” Her adoption was self-initiated, narrow in scope, and identity-preserving. Her resistance rate metric: zero.

Contrast that with a peer who received a firm-wide mandate to use an AI research tool for all client-facing reports, with usage tracked through a performance dashboard. Her expertise felt surveilled and her professional judgment felt conditional. Her resistance rate metric: maximum.

Andrew Ng’s AI literacy programs, which emphasize use-case specificity over general AI fluency, show the same pattern at scale: workers who learn AI through a specific, relevant task they chose embed the tool into their professional practice permanently. Workers who learn AI through generic training modules show 73% regression to pre-training behavior within 90 days.

Key Insight: AI embrace isn’t a personality trait — it’s an environmental condition. The 20% who succeed aren’t naturally more adaptable; they’re working in contexts where adoption was voluntary, self-directed, and modeled by leadership.

The Employer Playbook That’s Making Resistance Worse

Four specific employer behaviors are empirically linked to elevated AI resistance rates in 2026. Not associated with. Linked to — as in, organizations that removed these behaviors saw measurable resistance declines without any other intervention.

| Employer Behavior | Why It Amplifies Resistance | Corrective Approach |
|---|---|---|
| Adoption mandates without co-design | Triggers the “done to us” response: workers experience the mandate as evidence their judgment isn’t valued | Run participatory workflow design sessions before any tool is deployed; workers define the use cases |
| AI usage metrics in performance reviews before tools are proven | Combines surveillance pressure with professional risk: workers fear being penalized for AI errors they didn’t make | Establish a minimum 6-month “learning phase” where AI usage is tracked but not evaluated |
| Announcing AI investment alongside headcount reduction | Directly confirms workers’ core fear that AI investment is a euphemism for workforce reduction | Strictly separate AI capability announcements from any headcount communications, ideally by at least a quarter |
| Framing AI as a “productivity multiplier” | Workers decode “multiplier” as “we expect more output without proportional compensation” | Reframe as “capability expansion”: what can you do now that you couldn’t before, not how much faster can you do the same things |

Boston Consulting Group’s AI transformation research from 2025–2026 identifies the performance review behavior as particularly corrosive. When workers know that their AI tool usage data feeds into evaluations before those tools have demonstrated reliability in their specific context, they face an impossible choice: use a tool they don’t trust and risk evaluation penalties when it produces errors, or avoid the tool and risk evaluation penalties for non-adoption. Neither path is safe. The rational response — from a pure career risk management standpoint — is visible compliance paired with invisible workaround creation. Which is precisely what the data shows.

Korn Ferry’s 2026 leadership study adds a dimension BCG doesn’t fully address: the communication sequencing problem. The specific order in which leadership announces AI strategy, investment, and workforce decisions creates the interpretive frame workers use for everything that follows. Firms that announced capability investment before discussing workforce implications — and separated the two conversations by at least 90 days — showed markedly lower resistance rates than firms that bundled the messages.

SHRM’s AI implementation guidelines for 2026 now explicitly recommend a “consent-first” framework: workers should have a clearly defined channel to raise implementation concerns before a tool is deployed, and those concerns should demonstrably influence the deployment design. The word “demonstrably” is doing significant work in that sentence. A feedback form that disappears into HR’s inbox is not consent. A design session where worker input visibly changes the rollout plan is.

Key Insight: The employer behaviors driving AI rebellion aren’t malicious — they’re the product of treating AI adoption as a change management problem rather than a professional identity negotiation. Fixing the playbook requires acknowledging that distinction.

Can AI Rebellion Be Resolved? What the Data Says Works

Yes — but the intervention has to match the actual problem, not the imagined one.

Organizations that co-designed AI workflows with their workers reduced resistance rates from 80% to 23% — a 57-percentage-point gap compared to top-down mandate approaches, according to comparative data from SHRM’s 2026 implementation analysis and Stanford HAI’s organizational AI adoption research.

The 5-step Participatory AI Integration Protocol, derived from organizations that successfully crossed the resistance threshold:

Step 1: Resistance Mapping Before Tool Selection

Action: Survey workers in each role category about their specific professional identity concerns related to AI before selecting any tools.

Why It Works: Tool selection informed by worker concerns produces designs that address the Expert’s Paradox by preserving professional judgment at the highest-stakes decision points.

Expected Outcome: 15–20% resistance reduction before a single tool is deployed, simply from workers experiencing genuine consultation.

Step 2: Worker-Led Use Case Definition

Action: Let workers in each role define the specific tasks they’d be willing to delegate to AI — not the tasks leadership wants automated.

Why It Works: Google’s PAIR (People + AI Research) team’s research consistently shows that worker-defined use cases produce higher accuracy requirements and better-calibrated trust than top-down task assignments.

Expected Outcome: 25–30% higher sustained adoption rates at 90-day post-deployment measurement.

Step 3: Transparent Error Documentation

Action: Create formal, non-punitive channels for workers to document AI errors they encounter in their specific workflow context.

Why It Works: Workers who trust that their AI skepticism is valued — not surveilled — show dramatically lower resistance. IBM’s AI ethics and adoption framework identifies psychological safety around error reporting as the strongest predictor of long-term adoption quality.

Expected Outcome: Reduction in covert workaround behavior (the most expensive resistance type) by an estimated 40%.

Step 4: Manager Modeling With Specificity

Action: Train managers to demonstrate AI use in role-relevant contexts during regular work, not in dedicated training sessions.

Why It Works: MIT Collective Intelligence Project research shows that behavior modeled in authentic work contexts transfers at 4x the rate of behavior modeled in training environments.

Expected Outcome: Team adoption rates improve 2–2.3x within 60 days of consistent manager modeling.

Step 5: Consent-Based Performance Integration

Action: Give workers a 6–12 month window where AI usage is measured but explicitly excluded from performance evaluations, with a clear, announced timeline for integration.

Why It Works: Removing the career risk of early-stage AI error breaks the compliance-without-adoption pattern. Workers experiment genuinely when failure isn’t career-penalized.

Expected Outcome: The 57-point resistance reduction documented in participatory design cohorts (from 80% to 23%) occurs primarily because this step removes the rational career incentive to resist.

The 23% residual resistance rate in organizations that executed all five steps is worth examining. It doesn’t represent failure — it represents workers in genuinely high-stakes domains (surgery scheduling, litigation strategy, regulatory filings) where AI accuracy hasn’t yet reached the threshold required for professional trust. That residual resistance is appropriate. The goal isn’t zero resistance; it’s resistance that’s grounded in professional judgment rather than fear.

Key Insight: Organizations that implemented participatory AI workflow design reduced resistance from 80% to 23% — a 57-point improvement over mandate-only approaches. The protocol works because it addresses professional identity and agency, not just technical familiarity.

What This Means for the Future of Work — A 2026 Forward Look

The trajectory of white-collar AI rebellion, left unaddressed, points toward workforce bifurcation. By late 2026 and into 2027, organizations that haven’t resolved their resistance dynamics will begin to see their workforce stratify into two distinct professional cultures: AI-native workers who build their skills and career capital through human-AI collaboration, and AI-alienated workers who disengage, develop shadow workflows, or exit entirely.

The World Economic Forum’s 2025–2030 Future of Jobs projections model this bifurcation as one of the primary drivers of talent inequality in the next decade — not AI replacing workers, but AI-ready organizational cultures concentrating high-quality talent while AI-hostile cultures face attrition spirals. The Brookings Institution’s future of work research and OECD’s Digital Economy Outlook 2025 both echo this structural divergence, noting that organizational AI culture — not AI capability — will be the primary competitive differentiator in knowledge industries by 2028.

What this demands is a new HR competency: not AI training, but AI Acculturation. Training assumes a knowledge deficit. Acculturation addresses the full complexity of professional identity integration — how workers renegotiate their sense of expertise, value, and professional meaning in an environment where AI performs tasks that were previously markers of human competency.

The AI Acculturation Index

We’d propose a specific organizational metric to operationalize this concept: the AI Acculturation Index (AAI). Unlike adoption rate dashboards, which measure login frequency and tool usage, the AAI would measure four dimensions: (1) worker-reported professional identity stability in an AI-augmented context, (2) quality of AI-human decision integration (not just whether AI was used, but how well its outputs were evaluated), (3) peer influence direction (are high-performers modeling AI adoption or resistance?), and (4) error reporting culture quality (do workers surface AI failures or hide them?). Organizations with high AAI scores will, by 2027, show measurably better talent retention, higher AI output quality, and lower per-output cost than organizations still optimizing for adoption rate metrics alone.
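To make the proposal concrete, here is a minimal sketch of how the four dimensions might roll up into a single score. Everything beyond the four dimension names is an assumption for illustration: the 0-to-1 scale for each dimension, the equal default weights, the 0-to-100 output range, and the names `AAIInputs` and `acculturation_index` are hypothetical, not part of any published methodology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AAIInputs:
    """Four AAI dimensions, each normalized to 0..1 (assumed scale)."""
    identity_stability: float    # (1) worker-reported professional identity stability
    decision_integration: float  # (2) quality of AI-human decision integration
    peer_influence: float        # (3) 1.0 = high performers model adoption, 0.0 = resistance
    error_reporting: float       # (4) 1.0 = AI failures are surfaced, 0.0 = hidden


def acculturation_index(
    inputs: AAIInputs,
    weights: tuple[float, float, float, float] = (0.25, 0.25, 0.25, 0.25),
) -> float:
    """Weighted average of the four dimensions, scaled to 0..100.

    Equal weights are a placeholder assumption; an organization would
    need to calibrate them against its own retention and quality data.
    """
    scores = (
        inputs.identity_stability,
        inputs.decision_integration,
        inputs.peer_influence,
        inputs.error_reporting,
    )
    if any(not 0.0 <= s <= 1.0 for s in scores):
        raise ValueError("each dimension score must be between 0 and 1")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return 100.0 * sum(w * s for w, s in zip(weights, scores))


# Hypothetical team: strong error-reporting culture, weak peer modeling.
team = AAIInputs(
    identity_stability=0.6,
    decision_integration=0.7,
    peer_influence=0.3,
    error_reporting=0.9,
)
print(f"AAI: {acculturation_index(team):.1f}")
```

A weighted average is the simplest possible aggregation. It lets a strong score on one dimension offset a weak one, which may not be desirable if, say, a poor error-reporting culture should cap the overall index.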

The MIT Work of the Future task force’s most recent projections suggest a 3–4 year window — roughly 2026 to 2029 — during which organizations can course-correct their AI integration culture before resistance patterns calcify into permanent workforce identity. That window is narrower than most leadership teams currently believe.

The Rebellion Is a Signal, Not a Problem

An 80% resistance rate isn’t a workforce failure. It’s a measurement — and what it’s measuring is the distance between how AI tools are being designed and sold and how they actually land inside professional identities that took years to build.

The organizations treating rebellion as a change management obstacle to be overcome will spend the next two years adding training modules, tightening adoption dashboards, and watching their most experienced employees either disengage or leave. The organizations treating it as a diagnostic signal will ask a different set of questions: Where specifically does AI capability intersect with what our workers value most about their professional roles? What does the resistance tell us about the accuracy and reliability gaps our tools haven’t resolved? And how do we build AI into our workflows in ways that make our best people more themselves — not less?

The economic case for the second approach is now empirically documented. The 57-point resistance gap between mandate-first and co-design-first organizations isn’t a soft culture metric. It’s $4,200 per employee per year in direct costs, multiplied by every worker who was never brought into the design conversation.
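As a rough back-of-the-envelope illustration of that multiplication, the sketch below compares the two resistance rates for a single organization. It assumes the $4,200 figure applies per resisting worker and uses a hypothetical headcount of 1,000; both are assumptions made for the example, not findings from the cited surveys.

```python
COST_PER_RESISTING_WORKER_USD = 4_200  # per-employee annual estimate cited in this article


def annual_resistance_cost(headcount: int, resistance_rate: float) -> int:
    """Estimated yearly cost, assuming the per-employee figure applies
    only to workers who resist (an assumption of this sketch)."""
    if headcount < 0 or not 0.0 <= resistance_rate <= 1.0:
        raise ValueError("headcount must be >= 0 and rate between 0 and 1")
    resisting_workers = round(headcount * resistance_rate)
    return resisting_workers * COST_PER_RESISTING_WORKER_USD


headcount = 1_000  # hypothetical organization size
mandate_first = annual_resistance_cost(headcount, 0.80)
co_design_first = annual_resistance_cost(headcount, 0.23)

print(f"Mandate-first:   ${mandate_first:,}")
print(f"Co-design-first: ${co_design_first:,}")
print(f"Annual gap:      ${mandate_first - co_design_first:,}")
```

Under these assumptions, 800 resisting workers cost about $3.36 million a year against $966,000 for 230, a gap of roughly $2.4 million per 1,000 employees. The result scales linearly with headcount, so the per-worker assumption drives the whole estimate.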

The real question for 2026 isn’t “how do we get workers to accept AI?” — it’s “what are workers telling us about AI that our tools aren’t designed to hear?”

Sources & References

- Deloitte Future of Work Institute: AI Integration and Organizational Culture Report 2026 — Deloitte; Tier 2 and Tier 3 cost analysis for enterprise AI resistance. deloitte.com

- SHRM 2026 Workforce Technology Survey — Society for Human Resource Management; primary behavioral data on white-collar AI resistance rates, industry breakdowns, and cost estimates. shrm.org

- Gartner Digital Worker Report Q1 2026 — Gartner Research; behavioral proxy methodology for measuring AI adoption and resistance patterns in enterprise environments. gartner.com

- MIT Work of the Future Task Force Research Briefs — Massachusetts Institute of Technology; agency and consent variables in technology adoption, workforce bifurcation projections. workofthefuture.mit.edu

- World Economic Forum Future of Jobs Report 2025 — WEF; cross-economy AI resistance patterns, workforce stratification projections 2025–2030. weforum.org

- Microsoft WorkLab 2026 Work Trend Index — Microsoft Corporation; enterprise AI adoption behavioral data, manager modeling impact on team adoption rates. microsoft.com/worklab

- Stanford HAI — Human-Centered AI Institute: Participatory AI Design Research — Stanford University; participatory workflow design outcomes, resistance reduction data. hai.stanford.edu

- Pew Research Center: AI Attitudes Among U.S. Workers 2025 — Pew Research; demographic breakdown of AI resistance by age, education, and professional sector. pewresearch.org