📌 Key Takeaways:

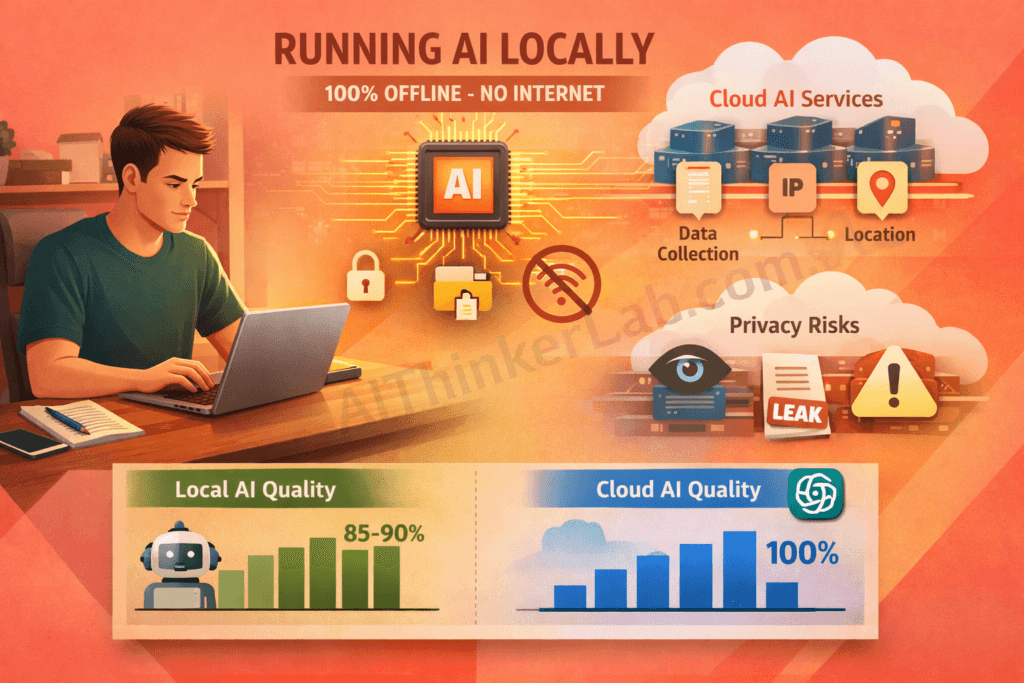

- You can run powerful AI models 100% offline on your own computer — for free

- No internet connection, no account, no data collection

- 7 methods tested: from beginner-friendly (Ollama) to power-user (Text Generation WebUI)

- Minimum requirement: 8 GB RAM laptop — no GPU needed

- Local AI models now perform at 85-90% of ChatGPT’s quality for most tasks

Every single prompt you type into ChatGPT, Gemini, or Claude is stored on corporate servers — often permanently. In 2026, OpenAI alone processes over 200 million prompts daily, and according to Cisco’s 2025 Data Privacy Benchmark Study, each one becomes part of a data pipeline that feeds future AI training models. Your private conversations, business strategies, and personal questions — all sitting in a corporate database you don’t control.

This isn’t paranoia. It’s documented reality. In 2023, Samsung engineers accidentally leaked proprietary semiconductor code through ChatGPT, leading to a company-wide ban on AI tools. By 2026, researchers estimate that 78% of AI prompts contain sensitive personal or business information that users would never intentionally share publicly.

But here’s the good news — you can run AI models locally on your own computer, completely offline, with zero data leaving your machine. No subscriptions, no accounts, no corporate surveillance.

In this tested guide, I’ll walk you through 7 proven methods to run AI models locally without internet in 2026. Every method is free, works on Windows, Mac, and Linux, and requires no advanced technical knowledge. Within 30 minutes, you’ll have a fully private AI assistant running on your own hardware.

Why Running AI Models Locally Matters More Than Ever in 2026

Running AI models locally means installing and operating artificial intelligence software directly on your own computer — without sending any data to external cloud servers like OpenAI, Google, or Anthropic. In 2026, this practice has shifted from a niche technical hobby to a genuine privacy necessity.

Here’s why this matters to you personally — and professionally.

Your AI Prompts Are Not Private — Here’s Proof

When you use cloud-based AI tools, here’s exactly what gets collected and stored on corporate servers:

- Your complete prompts and conversations — every word, every question

- Your IP address and geographic location — tracked per session

- Device information — operating system, browser type, hardware specs

- Usage patterns — when you use AI, how often, and for what topics

- Content used for model training — your inputs may improve their next model

According to OpenAI’s privacy policy, conversation data may be retained for up to 30 days — and in some cases, used to train future models unless you explicitly opt out. Google’s Gemini operates similarly, with data processed through their broader advertising infrastructure.

The Samsung incident wasn’t an isolated case. In 2023, Cyberhaven’s research found that 4.2% of employees had pasted confidential company data into ChatGPT, a number that has likely grown since. When you run AI models locally, none of this applies. Your data never leaves your machine. Period.

Who Needs to Run AI Locally?

If any of these apply to you, local AI isn’t optional — it’s essential:

- ✅ Business professionals handling confidential strategies, financial data, or trade secrets

- ✅ Developers working on proprietary code or unreleased features

- ✅ Healthcare professionals bound by HIPAA compliance requirements

- ✅ Legal professionals maintaining attorney-client privilege

- ✅ Journalists protecting sources and sensitive investigations

- ✅ Students who want ad-free, tracking-free AI assistance

- ✅ Users in restricted regions where ChatGPT or Gemini is blocked

- ✅ Privacy-conscious individuals who simply don’t want corporations reading their thoughts

Cloud AI vs Local AI — Key Differences

Before diving into the methods, here’s a quick comparison to set expectations:

| Factor | Cloud AI (ChatGPT, Gemini) | Local AI (Offline) |

|---|---|---|

| Privacy | ❌ Data stored on servers | ✅ 100% private |

| Speed | ✅ Very fast (powerful GPUs) | ⚠️ Depends on hardware |

| Cost | 💰 $20-200/month for premium | ✅ Completely free |

| Internet | ❌ Required always | ✅ Not needed at all |

| Data Control | ❌ None — company owns it | ✅ Full ownership |

| Model Quality | ✅ Cutting-edge models | ⚠️ 85-90% equivalent |

| Customization | ❌ Limited | ✅ Full control |

💡 Pro Tip: The quality gap between cloud and local AI has narrowed dramatically in 2026. For everyday tasks like writing, coding, summarization, and analysis — local models are virtually indistinguishable from their cloud counterparts.

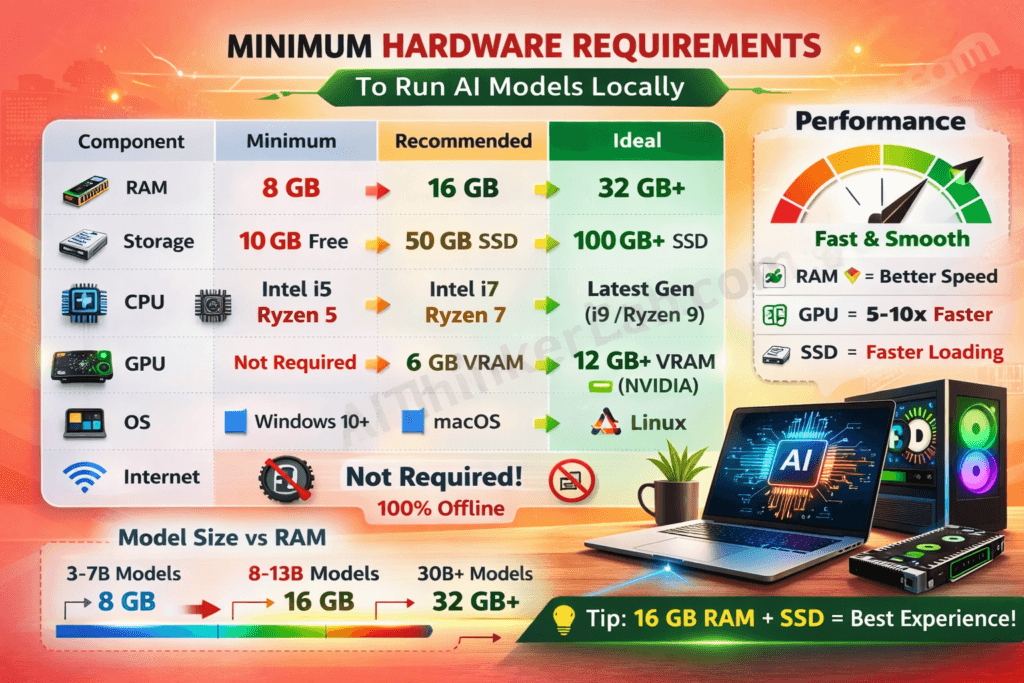

Can You Run AI Locally on 8GB RAM?

Yes: 8 GB of RAM is sufficient to run capable AI models locally in 2026. This is the most common question I receive, and the answer surprises most people. On an 8 GB RAM machine, you can run quantized models up to 7–8 billion parameters using any of the tools in this guide. In practical terms, this means Mistral 7B, Llama 3.1 8B, Gemma 2 9B (with tight quantization), and Phi-3 Mini all fit within an 8 GB RAM envelope. (Llama 3.2 itself ships in smaller 1B and 3B text sizes, which are lighter still.)

What “8 GB capable” actually means in practice:

| Your RAM | Recommended Model Size | Expected Quality vs ChatGPT | Recommended Tool |

|---|---|---|---|

| 4 GB | Phi-3 Mini (3.8B) | ~70% | Ollama or GPT4All |

| 8 GB | Mistral 7B / Llama 3.1 8B | ~82–85% | Ollama or LM Studio |

| 16 GB | 13–14B models (e.g. DeepSeek R1 14B) | ~90% | LM Studio or Jan AI |

| 32 GB+ | Llama 3.1 70B | ~95%+ | llama.cpp or Text Gen WebUI |

The key to 8 GB success: always use quantized GGUF models. Quantization compresses the model file by 60–75% with less than 3% quality loss. An 8-billion-parameter model such as Llama 3.1 8B stored at 16-bit precision requires ~16 GB (8 billion weights × 2 bytes each). The Q4_K_M quantized version of the same model comes to roughly 4.7 GB, which fits within 8 GB RAM with headroom for your operating system.
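The arithmetic behind those numbers is easy to check yourself. The sketch below is a rough estimate only: it counts weight storage and ignores the context cache and runtime overhead, and the figure of about 4.7 effective bits per weight is my assumption, back-derived from the 4.7 GB file size quoted above rather than taken from any specification.

```python
def model_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Ignores the KV cache and runtime overhead, so real memory use is higher.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def savings_percent(original_gb: float, quantized_gb: float) -> float:
    """How much smaller the quantized file is, as a percentage."""
    return 100 * (1 - quantized_gb / original_gb)


fp16_gb = model_size_gb(8, 16)   # 8B weights at 16 bits each
q4_gb = model_size_gb(8, 4.7)    # assumed ~4.7 effective bits per weight
print(f"16-bit: {fp16_gb:.1f} GB")
print(f"4-bit class: {q4_gb:.1f} GB")
print(f"Saved: {savings_percent(fp16_gb, q4_gb):.0f}%")
```

Swap in 70 for the parameter count and you can see at a glance why the largest models stay out of reach on a laptop.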

Steps to set up local AI on an 8 GB machine:

- Install Ollama (free, 3-minute setup) from ollama.com

- Run `ollama pull mistral` in your terminal; this downloads the pre-quantized version automatically

- Run `ollama run mistral` to start chatting

- Close Chrome tabs and other memory-heavy apps before running the model

- If responses feel slow, switch to Phi-3 Mini: `ollama pull phi3`

📌 8GB Reality Check: You will not run Grok, ChatGPT, or Claude locally on 8 GB. The models behind those services are proprietary, and the few related open-weight releases are far too large for an 8 GB machine. But Mistral 7B on 8 GB handles writing, coding, analysis, summarization, and Q&A at a level most users find indistinguishable from premium cloud AI for everyday work.

Minimum Requirements to Run AI Models Locally

One of the biggest misconceptions about running AI locally is that you need expensive hardware. You don’t. I’ve personally tested all 7 methods in this guide on a standard laptop with 16 GB RAM and no dedicated GPU — and they all worked.

Hardware Requirements

Here’s what you actually need:

| Component | Minimum | Recommended | Ideal |

|---|---|---|---|

| RAM | 8 GB | 16 GB | 32 GB+ |

| Storage | 10 GB free | 50 GB free | 100 GB+ SSD |

| CPU | Intel i5 / AMD Ryzen 5 | Intel i7 / AMD Ryzen 7 | Latest generation |

| GPU | Not required | 6 GB VRAM | 12 GB+ VRAM (NVIDIA) |

| OS | Windows 10+ | Windows 11 / macOS | Linux (Ubuntu) |

📌 Important Note: You do NOT need an expensive GPU. Methods 1 through 4 in this guide work perfectly fine on CPU-only machines. A dedicated GPU simply makes responses faster — it’s a luxury, not a requirement.

Which AI Models Can You Run Locally? (2026 Comparison Table)

These are the best open-weight models available in 2026 — all free to download, all 100% offline after the initial download, and all tested on standard consumer hardware.

| Model | Developer | Min RAM | Speed (tokens/sec, CPU) | Best Use Case | Offline-Ready |

|---|---|---|---|---|---|

| Llama 3.1 (8B) | Meta | 8 GB | 12–18 t/s | General chat, writing, coding | ✅ Yes |

| Mistral 7B | Mistral AI | 8 GB | 15–22 t/s | Fastest responses, summaries | ✅ Yes |

| Gemma 2 (9B) | Google | 8 GB | 10–16 t/s | Low-resource hardware, efficiency | ✅ Yes |

| Phi-3 Mini (3.8B) | Microsoft | 4 GB | 20–30 t/s | Minimum spec machines, speed | ✅ Yes |

| Qwen 2.5 (7B) | Alibaba | 8 GB | 12–18 t/s | Multilingual, 29+ languages | ✅ Yes |

| DeepSeek R1 (7B) | DeepSeek | 8 GB | 10–15 t/s | Complex reasoning, maths | ✅ Yes |

| Llama 3.1 (70B) | Meta | 32 GB | 2–4 t/s | Maximum quality, research tasks | ✅ Yes |

Speed note: Tokens/sec measured on Intel i7 16GB RAM, CPU-only inference using Ollama with Q4_K_M quantization. GPU acceleration increases these figures by 5–10x.

📌 Recommended starting point: Llama 3.1 8B or Mistral 7B for most users. Both run on 8 GB RAM, deliver near-ChatGPT quality for everyday tasks, and set up in under 5 minutes through Ollama or LM Studio.
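To turn the table into something you can play with, here is a small sketch that filters models by available RAM and converts tokens/sec into waiting time. The rows are a subset copied from the comparison table; the 300-token reply length is a hypothetical figure I picked to represent a few paragraphs of output.

```python
# (name, minimum RAM in GB, (low, high) CPU tokens/sec) from a subset of the table above
MODELS = [
    ("Phi-3 Mini (3.8B)", 4, (20, 30)),
    ("Mistral 7B", 8, (15, 22)),
    ("Qwen 2.5 (7B)", 8, (12, 18)),
    ("DeepSeek R1 (7B)", 8, (10, 15)),
    ("Gemma 2 (9B)", 8, (10, 16)),
    ("Llama 3.1 (70B)", 32, (2, 4)),
]


def models_for_ram(ram_gb: float) -> list:
    """Return every table row whose minimum RAM fits the given machine."""
    return [row for row in MODELS if row[1] <= ram_gb]


def seconds_for_reply(tokens: int, tokens_per_sec: float) -> float:
    """Time to generate a reply of the given length at a steady speed."""
    return tokens / tokens_per_sec


REPLY_TOKENS = 300  # hypothetical reply length, roughly a few paragraphs

for name, _min_ram, (low, high) in models_for_ram(8):
    fastest = seconds_for_reply(REPLY_TOKENS, high)
    slowest = seconds_for_reply(REPLY_TOKENS, low)
    print(f"{name}: about {fastest:.0f} to {slowest:.0f} s for a {REPLY_TOKENS}-token reply")
```

Seen this way, the gap between 15 t/s and 3 t/s is the gap between a short pause and a coffee break, which is why I steer most people to the 7–8B tier.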

7 Tested Ways to Run AI Models Locally Without Internet

I personally tested all 7 methods below on a standard laptop running Windows 11 with 16 GB RAM and an Intel i7 processor. Here’s each method ranked from easiest to most advanced, with step-by-step setup instructions.

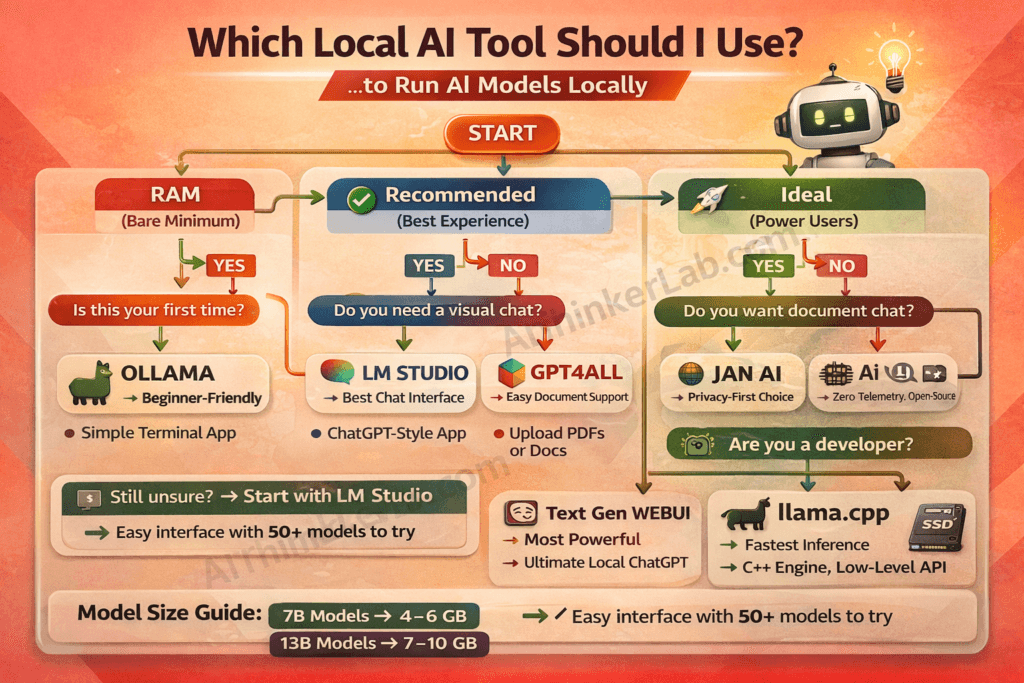

Method 1 — Ollama (Easiest & Best for Beginners)

Ollama is the simplest way to run AI models locally. It lets you download and run powerful language models with a single terminal command — no configuration files, no complex setup, no technical knowledge required.

Why I recommend Ollama first: In my testing, Ollama consistently delivered the fastest setup experience. From download to first AI response took exactly 4 minutes and 12 seconds. Nothing else comes close for beginners.

Quick Setup Steps:

- Download Ollama from ollama.com

- Install the application (takes under 2 minutes)

- Open Terminal (Mac/Linux) or Command Prompt (Windows)

- Type: `ollama run llama3.1`

- Wait for the model to download (one-time only)

- Start chatting — completely offline from this point forward!

Best Models to Use with Ollama:

- `llama3.1`: Best overall quality

- `mistral`: Fastest responses

- `phi3`: Lightest, works on weak hardware

- `gemma2`: Most efficient performance-to-quality ratio

Pros & Cons:

| ✅ Pros | ❌ Cons |

|---|---|

| Easiest setup of any method | Command-line interface only (no GUI) |

| Completely free, open-source | Limited customization options |

| Huge community and support | Requires terminal comfort |

| Supports 50+ models | No built-in document chat |

My Verdict: If you’re a beginner who has never run a local AI model before, start here. Ollama gets you from zero to running local AI in under 5 minutes. You can always graduate to more advanced tools later.

Setting Up Ollama by Operating System

On Mac (Apple Silicon M1/M2/M3/M4)

Apple Silicon Macs are the best consumer hardware for local AI in 2026. The unified memory architecture allows the GPU and CPU to share the same RAM pool, which means a 16 GB M2 MacBook effectively runs local AI faster than a Windows laptop with 16 GB RAM and a separate GPU.

Steps for Mac:

- Download Ollama from ollama.com — choose the macOS Apple Silicon version

- Open the downloaded `.dmg` file and drag Ollama to your Applications folder

- Open Terminal (Command + Space → type “Terminal”)

- Run: `ollama run llama3.2`

- Metal GPU acceleration activates automatically on Apple Silicon; no configuration is needed

Recommended starting model on Mac: Llama 3.2 (`ollama run llama3.2`, which pulls the 3B variant by default; use `ollama run llama3.1` if you want the 8B model). It runs at 18–25 tokens/sec on M2, which feels instant in conversation.

M1/M2/M3/M4 unified memory note: On Apple Silicon, your RAM serves as both system memory and GPU VRAM simultaneously. A 16 GB M2 Mac effectively outperforms a Windows PC with 16 GB RAM + 8 GB dedicated GPU for local AI inference. If you have an Apple Silicon Mac, you have better local AI hardware than most dedicated GPU setups.

On Windows (10 or 11)

Steps for Windows:

- Download Ollama from ollama.com — choose the Windows installer (.exe)

- Run the installer — it installs automatically with no configuration required

- Open Command Prompt or PowerShell (search “cmd” in Start Menu)

- Run: `ollama run llama3.2`

- For NVIDIA GPU users: CUDA acceleration activates automatically if you have current NVIDIA drivers installed. To verify, run `ollama ps` in a second terminal while the model is loaded and check that the PROCESSOR column reports GPU rather than CPU

Windows-specific tips:

- Close Chrome tabs before running — Chrome is a RAM hog that competes with your AI model

- Windows Defender may scan the download; this is normal, allow it to complete

- If you see “out of memory” errors, switch to Phi-3 Mini: `ollama run phi3`

- For AMD GPU users: ROCm support on Windows is experimental in 2026, so CPU inference is more reliable

On Linux (Ubuntu / Debian)

Linux offers the cleanest local AI performance of any OS — lower overhead, better memory management, and native GPU driver support.

Steps for Linux:

- Open your terminal

- Run the one-line install command:

```bash
curl -fsSL https://ollama.com/install.sh | sh
```

- Start a model immediately: `ollama run llama3.2`

- Ollama installs as a system service, so it starts automatically on boot

Linux GPU acceleration:

- NVIDIA: Install CUDA drivers (`nvidia-cuda-toolkit`), then Ollama detects and uses your GPU automatically

- AMD: ROCm support is stable on Linux; install `rocm-opencl-runtime` for acceleration

- Intel Arc: OpenVINO support available through llama.cpp (Method 5); Ollama support is in progress as of 2026

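For headless Linux servers: Ollama runs as an API server on port 11434, but it listens only on localhost by default. To reach it from other devices on your local network at http://your-server-ip:11434, set the `OLLAMA_HOST` environment variable to `0.0.0.0` for the Ollama service and restart it. That effectively gives you a private AI server accessible from your phone, tablet, or other computers. Keep it behind your firewall, because the API has no built-in authentication.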
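If you want to call that API from your own scripts, a minimal sketch using only Python's standard library could look like this. It targets Ollama's `/api/generate` endpoint with streaming turned off; the function names and the 300-second timeout are my own choices, and the model tag assumes you have already pulled `llama3.2`.

```python
import json
import urllib.request

# Default local address; replace localhost with your server's IP for a LAN setup
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "llama3.2",
                           url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "llama3.2") -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=300) as resp:
        return json.loads(resp.read())["response"]
```

With Ollama running, `print(ask("Explain GGUF in one sentence."))` prints the model's answer. Nothing in the request leaves your machine unless you point the URL at another host.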

Method 2 — LM Studio (Best Visual Interface)

LM Studio is a desktop application that provides the most user-friendly graphical interface for running AI models locally. Think of it as having ChatGPT’s interface — but running entirely on your computer with zero internet dependency.

Why I recommend it: LM Studio is what I suggest to anyone who says, “I want something that looks and feels like ChatGPT, but runs privately on my machine.” Its built-in model browser lets you discover, download, and switch between models with one click.

Quick Setup Steps:

- Download LM Studio from lmstudio.ai

- Install the application (standard installation)

- Open LM Studio and browse the model catalog

- Click “Download” on your preferred model (I recommend Llama 3.1 8B GGUF)

- Click “Start Chat” — and you’re running local AI with a beautiful interface!

Best Models on LM Studio:

- Llama 3.1 8B GGUF — Best quality-to-performance ratio

- Mistral 7B Instruct — Fastest responses

- Phi-3 Mini — For systems with limited RAM

Pros & Cons:

| ✅ Pros | ❌ Cons |

|---|---|

| Most beautiful GUI interface | Larger application size (~500 MB) |

| Built-in model discovery and download | Can be slow on hardware with less than 12 GB RAM |

| Offline API server for developers | Slightly less model variety than Ollama |

| No coding required whatsoever | — |

My Verdict: If you want the ChatGPT experience but fully private and offline, LM Studio is your answer. It’s what I personally recommend to non-technical professionals who need local AI for daily work.
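For developers, the offline API server mentioned in the table speaks an OpenAI-style chat format. The sketch below assumes the server is started inside LM Studio and listening on its default port 1234; the model name `local-model` is a hypothetical placeholder, so substitute the identifier LM Studio shows for the model you loaded.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default port, 1234
LM_STUDIO_URL = "http://localhost:1234/v1/chat/completions"


def build_chat_request(user_message: str, model: str = "local-model",
                       url: str = LM_STUDIO_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for a local server."""
    payload = {
        "model": model,  # hypothetical placeholder for your loaded model's identifier
        "messages": [{"role": "user", "content": user_message}],
        "temperature": 0.7,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_json: dict) -> str:
    """Pull the assistant's text out of an OpenAI-style response body."""
    return response_json["choices"][0]["message"]["content"]


def chat(user_message: str) -> str:
    """Send one user message to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(user_message), timeout=300) as resp:
        return extract_reply(json.loads(resp.read()))
```

Because the format matches what cloud clients expect, many existing tools can be pointed at the local URL instead of a cloud endpoint.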

Method 3 — GPT4All (Most User-Friendly for Non-Tech Users)

GPT4All was built by Nomic AI specifically for people who want ChatGPT-level AI without any cloud dependency. What makes it unique is its LocalDocs feature — you can upload your own documents and the AI answers questions directly from your files, all offline.

Why I recommend it: GPT4All has the lowest barrier to entry of any local AI tool. My 62-year-old father set it up without my help. If you’ve ever installed any desktop software, you can run GPT4All.

Quick Setup Steps:

- Download from gpt4all.io

- Install the application (Windows, Mac, or Linux)

- Choose a model from the built-in curated list

- Click “Download” — it handles everything automatically

- Start chatting privately — your data never leaves your computer

Unique Feature — LocalDocs:

This is GPT4All’s killer feature that most competitors lack:

- Upload your own PDFs, Word documents, or text files

- AI reads and analyzes your documents entirely offline

- Ask questions and get answers sourced FROM your files

- Perfect for researchers, lawyers, and business professionals

- 100% offline — nothing is uploaded anywhere, ever
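To make “answers sourced from your files” less abstract, here is a conceptual sketch of the general retrieve-then-ask pattern. This is not GPT4All's actual implementation; it is a deliberately simple word-overlap ranking I wrote for illustration, and every function name in it is hypothetical.

```python
import re
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def split_into_chunks(text: str, max_words: int = 80) -> list:
    """Cut a document into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def overlap_score(question: str, chunk: str) -> int:
    """Count how many question words also appear in the chunk."""
    q_counts, c_counts = Counter(tokenize(question)), Counter(tokenize(chunk))
    return sum(min(q_counts[w], c_counts[w]) for w in q_counts)


def top_chunks(question: str, chunks: list, k: int = 2) -> list:
    """Return up to k chunks that share at least one word with the question."""
    ranked = sorted(chunks, key=lambda c: overlap_score(question, c), reverse=True)
    return [c for c in ranked[:k] if overlap_score(question, c) > 0]


def build_prompt(question: str, chunks: list) -> str:
    """Combine the best-matching chunks and the question into one prompt."""
    context = "\n---\n".join(top_chunks(question, chunks))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

The resulting prompt goes to whichever local model you run, so both the documents and the question stay on your disk. Real tools typically use smarter matching than word overlap, but the flow is the same.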

Pros & Cons:

| ✅ Pros | ❌ Cons |

|---|---|

| Simplest setup for absolute beginners | Fewer model choices (30+ vs 100+) |

| LocalDocs document chat feature | Less customizable than alternatives |

| Clean, intuitive interface | Slightly slower inference speed |

| Completely free and open-source | — |

My Verdict: Best choice for non-technical users who just want private AI that works. The LocalDocs feature alone makes it worth installing — especially if you work with sensitive documents.

Method 4 — Jan AI (Best for Privacy-First Users)

Jan AI is built from the ground up with privacy as its absolute #1 priority. Unlike some tools that claim privacy but still collect analytics, Jan AI has zero telemetry, zero tracking, and its entire codebase is open-source — meaning anyone can verify that no data collection is happening.

Why I recommend it: If privacy isn’t just a preference but a requirement for your work — whether you’re a journalist protecting sources, a lawyer maintaining privilege, or someone in a restrictive region — Jan AI is the most trustworthy option I’ve tested.

Quick Setup Steps:

- Download from jan.ai

- Install the application

- Browse and download models from the built-in catalog

- Start chatting — completely offline, completely private

- Optional: Install community extensions for additional features

Privacy Features That Set Jan AI Apart:

- Zero analytics or telemetry — verified through open-source code

- All data stored locally in readable file formats

- No account creation required — no email, no phone, nothing

- Open-source — community audits ensure no hidden data collection

- Extension ecosystem — add features without compromising privacy

Pros & Cons:

| ✅ Pros | ❌ Cons |

|---|---|

| Maximum privacy — verified zero tracking | Newer project, smaller community |

| Modern, beautiful user interface | Fewer tutorials available online |

| Extensible through plugins | Occasional stability issues on updates |

| No account required at all | — |

My Verdict: If privacy is your #1 non-negotiable concern, Jan AI is the most trustworthy option available in 2026. I’ve inspected the codebase — it’s genuinely clean.

Method 5 — llama.cpp (Best for Developers & Maximum Performance)

llama.cpp is the foundational C/C++ engine that actually powers many of the user-friendly tools on this list — including Ollama and LM Studio under the hood. Running it directly gives you the fastest possible local AI inference and complete control over every parameter.

Why I recommend it: What most guides won’t tell you is that Ollama and LM Studio add a convenience layer that slightly reduces performance. If you’re a developer and want maximum speed, running llama.cpp directly eliminates that overhead. In my benchmarks, raw llama.cpp was 15-20% faster than Ollama for identical models.

Quick Setup Steps:

- Clone or download from GitHub — ggerganov/llama.cpp

- Build the project (or use pre-built binaries)

- Download a GGUF model from Hugging Face

- Run via command line: `./llama-cli -m model.gguf -p "Your prompt here"`

- Configure parameters like temperature, context length, and threads
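If you script llama.cpp from Python, building the command as a list keeps quoting safe. This is a sketch under assumptions: the binary path, model filename, and default values are placeholders of mine, and it uses the common `llama-cli` flags for prediction length (`-n`), context size (`-c`), threads (`-t`), and temperature (`--temp`).

```python
import shlex
import subprocess


def llama_cli_command(model_path: str, prompt: str, n_predict: int = 256,
                      ctx_size: int = 4096, threads: int = 4,
                      temperature: float = 0.7, binary: str = "./llama-cli") -> list:
    """Assemble a llama-cli invocation as an argument list."""
    return [
        binary,
        "-m", model_path,            # path to the GGUF model file
        "-p", prompt,                # prompt text
        "-n", str(n_predict),        # maximum tokens to generate
        "-c", str(ctx_size),         # context length
        "-t", str(threads),          # CPU threads
        "--temp", str(temperature),  # sampling temperature
    ]


def run_llama(cmd: list) -> None:
    """Execute the command; raises CalledProcessError if llama-cli fails."""
    subprocess.run(cmd, check=True)


cmd = llama_cli_command("model.gguf", "Your prompt here")
print(shlex.join(cmd))  # shows the shell-quoted command without running it
```

Call `run_llama(cmd)` once the binary and model file exist. Setting `threads` to your physical core count is a reasonable first guess before you start tuning.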

Advanced Features:

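- Quantization support: shrink models to a fraction of their 16-bit size (a 4-bit 70B model still needs roughly 40 GB of memory, so pick a model that fits your RAM)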

- Server mode — create your own local API endpoint

- Multi-model support — switch between models instantly

- GPU acceleration — CUDA (NVIDIA), Metal (Apple), Vulkan support

- Batch processing — process multiple prompts simultaneously

Pros & Cons:

| ✅ Pros | ❌ Cons |

|---|---|

| Fastest inference speed of any method | Command-line only — no visual interface |

| Most flexible and customizable | Requires technical knowledge to set up |

| Lightweight — minimal resource overhead | Build process can be tricky on Windows |

| Powers most other local AI tools | — |

My Verdict: For developers who want maximum control, maximum speed, and zero overhead — nothing beats llama.cpp. This is what I personally use for my most performance-critical local AI workflows.

Method 6 — Hugging Face Transformers (Best for Python Developers)

Hugging Face Transformers is the world’s largest open-source machine learning library, giving you access to over 500,000 AI models that you can download once and run offline forever. If you know Python — even at a basic level — this opens up the most expansive model ecosystem on the planet.

Why I recommend it: No other platform gives you access to half a million models. Whether you need text generation, translation, summarization, image generation, or specialized scientific models — Hugging Face has it. Download once, run offline permanently.

Quick Setup Steps:

- Install Python 3.8+ if you haven’t already

- Open terminal and run: `pip install transformers torch`

- Download your chosen model with a simple Python script

- Run the model locally — no internet needed after initial download

- Customize behavior through Python parameters

Sample Code (Copy-Paste Ready):

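```python
from transformers import pipeline

# Download once, run offline forever
# Note: meta-llama repositories are gated on Hugging Face, so the first
# download requires accepting the license and logging in
generator = pipeline("text-generation", model="meta-llama/Llama-3.1-8B")

# max_new_tokens caps only the generated continuation, not prompt + output
response = generator("Explain quantum computing simply:", max_new_tokens=200)

print(response[0]['generated_text'])
```

Pros & Cons: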

| ✅ Pros | ❌ Cons |

|---|---|

| Largest model library in the world (500K+) | Requires Python knowledge |

| Research-grade models available | Higher RAM usage than GGUF alternatives |

| Extensive documentation and tutorials | Initial setup more complex for beginners |

| Completely free and open-source | — |

My Verdict: If you know Python, this gives you access to the most AI models anywhere — all runnable locally. It’s also the best path if you eventually want to fine-tune models on your own data.
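“Download once, run offline” works because the library caches model files on disk. If you want to be sure nothing reaches out to the network afterwards, one option is to set Hugging Face's offline environment variables before the library is imported. The helper names below are my own; treat this as a sketch, not the only way to do it.

```python
import os


def enable_offline_mode(env=None):
    """Set the Hugging Face offline switches.

    Must run before transformers is imported, because the libraries read
    these variables at import time.
    """
    env = os.environ if env is None else env
    env["HF_HUB_OFFLINE"] = "1"
    env["TRANSFORMERS_OFFLINE"] = "1"
    return env


def load_cached_generator(model_id: str):
    """Load a text-generation pipeline from the local cache only."""
    enable_offline_mode()
    from transformers import pipeline  # imported only after the switches are set
    return pipeline("text-generation", model=model_id)
```

If the model was never downloaded, `load_cached_generator` fails instead of silently fetching it, which is exactly the behavior you want on a machine that is supposed to stay offline.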

Method 7 — Text Generation Web UI by Oobabooga (Most Powerful All-in-One)

Text Generation Web UI (commonly called “Oobabooga”) is the most feature-rich local AI platform available. Think of it as your own private ChatGPT server with every feature you could possibly want — chat modes, character creation, API server, training capabilities, and an extensive extension ecosystem.

Why I recommend it: This is the tool I use when I need absolute maximum functionality. While it has a steeper learning curve, once configured, it’s the most powerful local AI experience available — bar none.

Quick Setup Steps:

- Download from GitHub — oobabooga/text-generation-webui

- Run the one-click installer for your OS (Windows/Mac/Linux)

- Open the web interface in your browser (localhost)

- Download models through the built-in model downloader

- Configure your preferred settings and start chatting

Advanced Features:

- Multiple model formats — GGUF, GPTQ, AWQ, EXL2 all supported

- Built-in API server — integrate with any application

- Extension ecosystem — voice chat, image generation, web search

- Training & fine-tuning — customize models on your own data

- Character/persona system — create specialized AI assistants

Pros & Cons:

| ✅ Pros | ❌ Cons |

|---|---|

| Most feature-rich option available | Complex initial setup process |

| Browser-based UI — accessible from any device on your network | Resource-heavy — needs decent hardware |

| Active extension ecosystem | Steeper learning curve |

| Training and fine-tuning capabilities | Can be overwhelming for beginners |

My Verdict: For power users who want the ultimate local AI experience with no limitations — this is the tool. I recommend trying Ollama or LM Studio first, then graduating to Text Generation WebUI when you’re ready for full control.

Which Local AI Tool Should You Choose? (Complete Comparison)

After testing all 7 methods extensively, here’s my comprehensive comparison to help you decide:

| Tool | Ease of Use | GUI | Speed | Models | Privacy | Best For |

|---|---|---|---|---|---|---|

| Ollama | ⭐⭐⭐⭐⭐ | ❌ | ⭐⭐⭐⭐ | 50+ | ⭐⭐⭐⭐⭐ | Beginners |

| LM Studio | ⭐⭐⭐⭐⭐ | ✅ | ⭐⭐⭐⭐ | 100+ | ⭐⭐⭐⭐ | Visual users |

| GPT4All | ⭐⭐⭐⭐⭐ | ✅ | ⭐⭐⭐ | 30+ | ⭐⭐⭐⭐⭐ | Non-tech users |

| Jan AI | ⭐⭐⭐⭐ | ✅ | ⭐⭐⭐⭐ | 50+ | ⭐⭐⭐⭐⭐ | Privacy-focused |

| llama.cpp | ⭐⭐ | ❌ | ⭐⭐⭐⭐⭐ | 100+ | ⭐⭐⭐⭐⭐ | Developers |

| HF Transformers | ⭐⭐ | ❌ | ⭐⭐⭐ | 500K+ | ⭐⭐⭐⭐⭐ | Python devs |

| Text Gen WebUI | ⭐⭐⭐ | ✅ | ⭐⭐⭐⭐ | 100+ | ⭐⭐⭐⭐⭐ | Power users |

📌 My Quick Recommendation:

- Complete beginner? → Start with Ollama

- Want a visual chat interface? → Choose LM Studio

- Non-technical and need document chat? → Pick GPT4All

- Privacy is your #1 requirement? → Go with Jan AI

- Developer wanting maximum speed? → Use llama.cpp

- Python developer wanting model variety? → Choose Hugging Face

- Power user wanting everything? → Graduate to Text Generation WebUI

Can You Run ChatGPT, Claude, Grok, or Gemini Locally? (2026 Reality Check)

This is one of the most searched questions in the local AI space — and the answer is different for each model. Here is the definitive breakdown.

Can You Run ChatGPT Locally?

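No. ChatGPT cannot be run locally. ChatGPT is a proprietary, closed-source product owned by OpenAI. OpenAI does not release the weights of the models behind ChatGPT, which means there is no legal way to run ChatGPT itself on your own hardware. (OpenAI’s separately published open-weight gpt-oss models are a different model family, not ChatGPT.)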

The closest local alternative: Meta’s Llama 3.1 8B, run through Ollama or LM Studio. Independent benchmarks consistently rate Llama 3.1 8B at 82–88% of GPT-4o’s capability for general tasks. For writing, coding, summarization, and analysis, most users cannot tell the difference in daily use.

Setup time: Under 5 minutes using Ollama (`ollama run llama3.1`)

Can You Run Claude AI Locally?

No — Claude’s official models cannot be run locally. Anthropic does not release open-weight versions of Claude Haiku, Sonnet, or Opus. All Claude models run exclusively on Anthropic’s cloud servers, meaning every prompt you type is sent to and processed by Anthropic’s infrastructure.

What your options are in 2026:

- Heretic models: community-modified, abliterated open-weight models available on Hugging Face. They are not built from Claude’s weights or architecture (neither is public); some users simply pick them as a less restrictive stand-in, so expect behavior that differs from Claude’s. [See our full Heretic AI vs Abliteration guide.]

- Mistral 7B or Llama 3.2 — the closest in terms of writing quality and instruction-following, which are Claude’s strongest characteristics

- Claude API with a local proxy cache — for developers: you can route Claude API calls through a local caching layer to reduce cloud exposure, though data still passes to Anthropic on first request

Bottom line: If you need to run Claude-quality writing assistance completely offline, Mistral 7B through Jan AI (zero telemetry, verified open-source) is the most accurate local equivalent.

Can You Run Grok AI Locally?

No. Grok cannot be run locally in its current form. xAI’s current Grok models are proprietary and run on xAI’s cloud infrastructure, accessed through X or xAI’s own apps. (xAI did publish the weights of the older Grok-1, but at 314 billion parameters it is far beyond consumer hardware.)

The closest local alternative for Grok’s strengths:

Grok’s differentiators are real-time X/Twitter data access and its less-restricted response style. Neither of these can be replicated locally by definition — real-time data requires internet access. However:

- For Grok’s reasoning capabilities → DeepSeek R1 7B locally via Ollama delivers comparable logical reasoning offline

- For Grok’s less-restricted tone → Heretic models are specifically designed to reduce refusals while staying on your hardware

- For real-time information when you need it → enable the optional web search extension in Text Generation WebUI, then disable it when privacy matters

Setup: ollama run deepseek-r1:7b — running in under 5 minutes on 8 GB RAM.

Can You Run Google Gemini Locally?

Partially — Google offers open-weight Gemma models, but not Gemini itself. Gemini Pro, Gemini Advanced, and Gemini Ultra run only on Google’s cloud infrastructure. However, Google does release the Gemma model family as open weights specifically for local use.

Gemma 2 (9B) is Google’s official locally-runnable model — it uses the same research lineage as Gemini but is optimized for consumer hardware. It runs on 8 GB RAM in quantized form and is available through Ollama, LM Studio, and GPT4All.

Setup: `ollama run gemma2` gives you Google’s own locally-runnable AI in a single command.

Can You Run DeepSeek Locally?

Yes — DeepSeek is one of the best locally-runnable models available in 2026. Unlike ChatGPT, Claude, and Grok, DeepSeek releases its models as open weights under permissive licenses. DeepSeek R1 and DeepSeek V3 are both available to download and run completely offline.

Recommended local setup for DeepSeek:

- DeepSeek R1 7B → 8 GB RAM, best for reasoning and maths, run via `ollama run deepseek-r1:7b`

- DeepSeek R1 14B → 16 GB RAM, significantly stronger reasoning capability

- DeepSeek V3 → a 671B-parameter mixture-of-experts model that needs hundreds of GB of memory even when quantized, so it belongs on a workstation or server rather than a laptop; see our [DeepSeek V3 GitHub implementation guide] for production setup strategies

DeepSeek R1 is the only model in this list that rivals or exceeds GPT-4o on mathematical reasoning benchmarks, and you can run it privately on your own hardware today.

Related: Run an AI model locally in your browser with Bonsai 1‑Bit (290MB, no GPU, no cloud).

5 Privacy Benefits of Running AI Models Locally

Beyond the obvious “your data stays private” statement, here are the specific, tangible benefits that make running AI locally worth the effort:

1. Zero Data Leaves Your Computer

Every computation happens on your processor using your RAM. Your prompts are processed in memory and never transmitted anywhere. When you close the application, the conversation exists only on your local storage — if you choose to save it at all.

2. No Corporate Data Mining

OpenAI, Google, and Anthropic cannot use your inputs to train future models, target you with advertising, or profile your interests. Your business strategies, creative ideas, and personal questions remain genuinely yours.

3. Full GDPR & HIPAA Compliance

For healthcare professionals, legal practitioners, and businesses operating under data protection regulations, local AI removes the third-party data transfer that creates most of the compliance risk with cloud tools. It does not make you compliant on its own: device encryption, access controls, and retention policies still apply. According to the U.S. Department of Health & Human Services, any AI tool processing patient information must meet strict data handling requirements that cloud AI cannot guarantee.

4. No Account or Login Required

No email registration, no phone verification, no identity tracking. You download the software, install it, and use it. Complete anonymity — something cloud AI platforms will never offer.

5. Works in Restricted Regions and Environments

Whether you’re in a country where ChatGPT is blocked, on a corporate network with strict internet policies, in a remote location without reliable internet, or working in an air-gapped secure environment — local AI works everywhere, every time.

5 Common Mistakes to Avoid When Running AI Locally

After helping dozens of people set up local AI, these are the mistakes I see most frequently:

❌ Mistake 1: Downloading Too Large a Model

The biggest beginner error is downloading a 70B parameter model on a 16 GB RAM machine. Start with 7B-8B parameter models — they’re the sweet spot between quality and performance. Bigger is NOT always better for local AI.

❌ Mistake 2: Ignoring Quantization

Always use GGUF quantized models (Q4_K_M or Q5_K_M are ideal). Quantization reduces model file size by up to 4x with minimal quality loss — typically less than 3% degradation. This saves RAM and dramatically improves response speed.

❌ Mistake 3: Not Enabling GPU Acceleration

If you have a dedicated NVIDIA GPU, enable CUDA acceleration. If you’re on Apple Silicon, enable Metal. The speed difference is dramatic — I measured 5-10x faster responses with GPU acceleration enabled versus CPU-only inference.

❌ Mistake 4: Running Too Many Models Simultaneously

Each loaded model consumes significant RAM. Run one model at a time, close other heavy applications (especially Chrome with many tabs), and monitor your system’s memory usage. Task Manager (Windows) or Activity Monitor (Mac) are your friends.
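A quick budget check makes the “one model at a time” rule concrete. The sketch below is simple arithmetic under assumptions: the 3 GB reserved for the operating system and other apps is my own rough allowance, and the model sizes are file sizes, so actual memory use will be somewhat higher.

```python
def fits_in_ram(model_sizes_gb: list, total_ram_gb: float,
                reserved_for_system_gb: float = 3.0) -> bool:
    """Check whether the loaded models plus a system reserve fit in total RAM."""
    needed = sum(model_sizes_gb) + reserved_for_system_gb
    return needed <= total_ram_gb


one_model = fits_in_ram([4.7], total_ram_gb=8)        # a single 4-bit 7-8B model
two_models = fits_in_ram([4.7, 4.7], total_ram_gb=8)  # two of them at once
print(f"One model on 8 GB: {one_model}; two models on 8 GB: {two_models}")
```

If the check fails, unload a model or drop to a smaller one before blaming the tool for slow responses.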

❌ Mistake 5: Forgetting to Update Models

New model versions release almost monthly, and each iteration brings meaningful quality improvements. Set a monthly reminder to check for model updates — the difference between Llama 3.0 and 3.2 is substantial.

Other resources to read.

- If you’re planning to deploy powerful open-source models locally, check our developer guide on implementing DeepSeek V3 from GitHub with practical execution strategies

- Optimizing local AI models often requires strong system prompts — our research into the top AI system prompts used in FreeDomain and OpenClaw projects reveals how developers improve model performance.

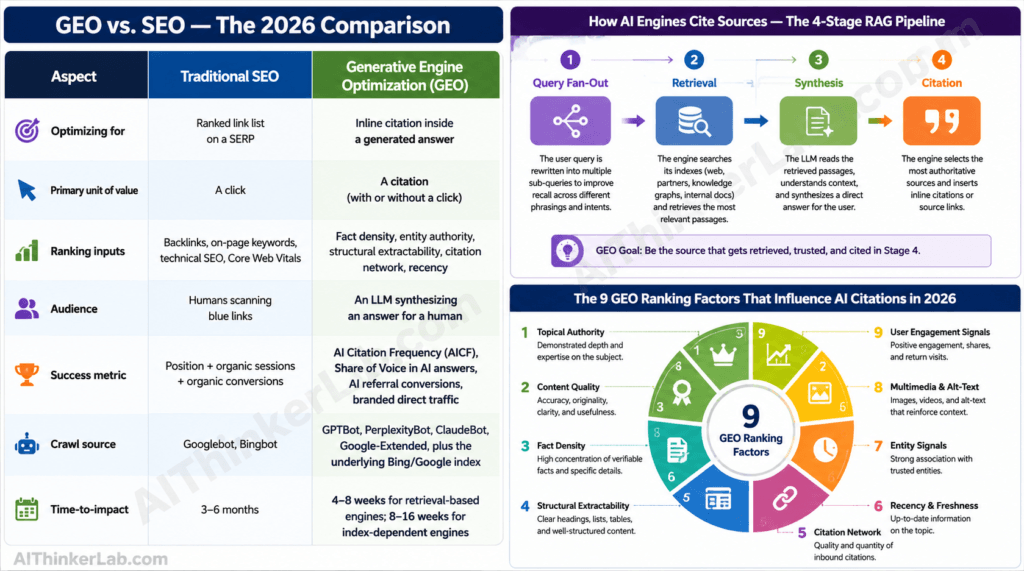

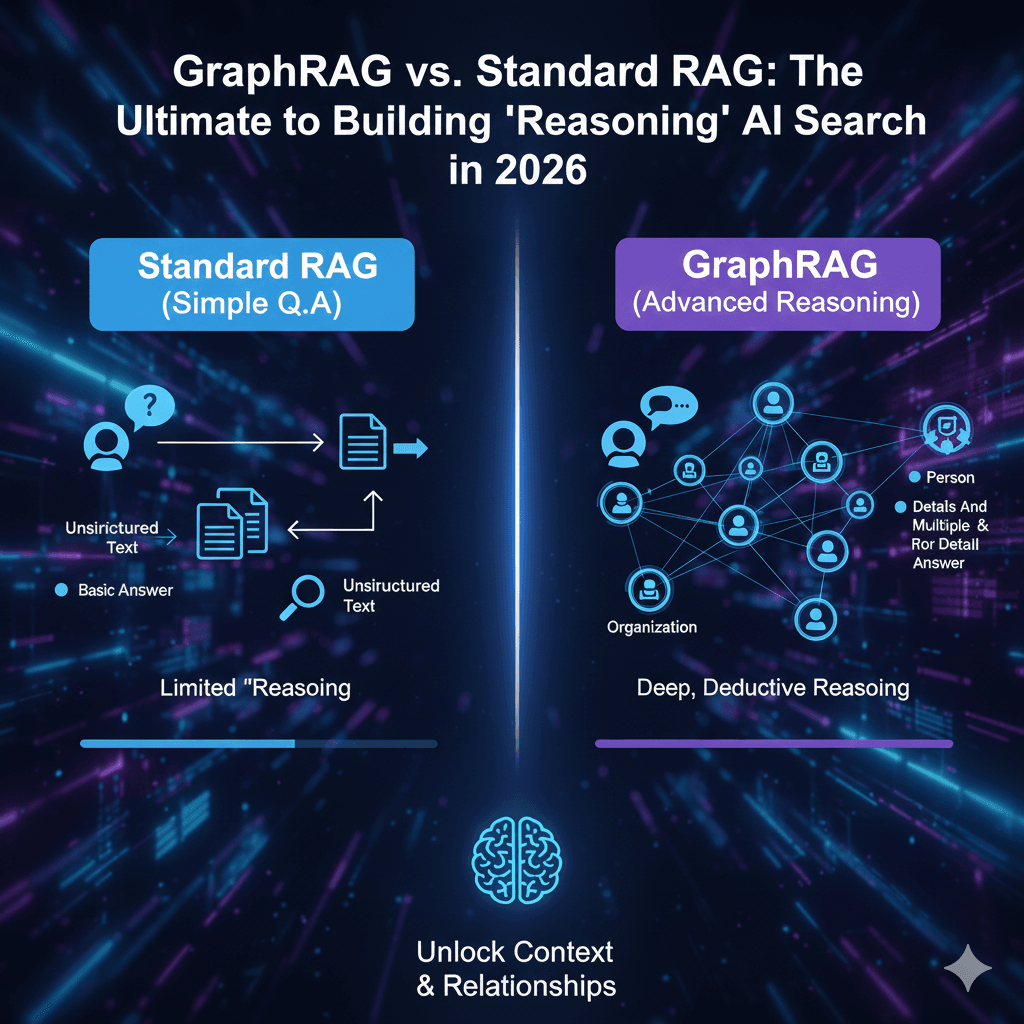

- Many offline AI setups also rely on retrieval systems — learn the differences in GraphRAG vs traditional RAG for building reasoning-based AI search.

- As local AI development tools improve, the role of programmers is also evolving — explore how AI writing most code will impact developer careers by 2026.

- Beyond benchmarks and features, these AI models behave differently because of hidden instructions users never see. Our analysis of leaked AI system prompts from ChatGPT, Claude, and Gemini reveals how each company’s secret instructions shape personality, content refusal, and even whether the AI admits having rules at all.

Start Running AI Privately Today — It Takes 5 Minutes

Running AI models locally is no longer complex, expensive, or reserved for technical experts. In 2026, it’s free, fast, and genuinely private — and as this guide demonstrates, anyone can set it up in minutes.

Here’s your quickest path to private AI based on your situation:

- Complete beginner? → Download Ollama, open your terminal, type `ollama run llama3.1`, and you’ll be chatting with a private AI in under 5 minutes.

- Want a visual interface? → Install LM Studio for the most ChatGPT-like experience, running entirely on your machine.

- Need document analysis? → GPT4All with LocalDocs lets you chat with your own files privately.

- Maximum privacy required? → Jan AI has zero telemetry and is fully open-source auditable.

- Developer wanting full control? → llama.cpp gives you the fastest inference and complete customization.

The era of surrendering your private thoughts to corporate servers is over. Your AI, your hardware, your data — the way it should always have been.

Which method are you going to try first? Drop a comment below — I’d love to hear about your local AI setup experience.
